Cisco Designing Cisco Security Infrastructure 300-745 SDSI Exam Questions

A product manager is focused on maintaining the security integrity of a microservice-based application as new features are developed and integrated. To ensure that known software vulnerabilities are not introduced into the product, it is crucial to implement a robust application security technique. The technique must be applied during the build phase of the software development lifecycle, which allows the team to proactively identify and address vulnerability risks before deployment. Which application security technique must be applied to accomplish the goal?

Answer : B

In a microservices-based architecture, applications are typically packaged into containers to ensure consistency across different environments. According to the Designing Cisco Security Infrastructure (SDSI) objectives, securing the software development lifecycle (SDLC) requires integrating security checks as far 'left' as possible. Container scanning is the specific technique used during the build phase to inspect container images for known software vulnerabilities (CVEs) within the bundled libraries, binaries, and dependencies.

When a developer initiates a build, the container scanning tool cross-references the layers of the image against vulnerability databases. If a high-risk vulnerability is detected in a base image or a third-party library, the build can be automatically failed, preventing the vulnerable code from ever reaching the registry or production environment. This directly addresses the product manager's goal of ensuring known vulnerabilities are not introduced. While Secret Detection (Option A) is vital for finding leaked API keys or passwords, and Infrastructure as Code (IaC) scanning (Option C) ensures the environment configuration is secure, neither specifically targets the software vulnerabilities within the application package itself. Similarly, Open API specification analysis (Option D) focuses on the contract and security of the interface rather than the underlying software vulnerabilities. By implementing container scanning, organizations align with Cisco's DevSecOps framework, which emphasizes automated, policy-driven security within the CI/CD pipeline to maintain the integrity of cloud-native applications.
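The build-gate behavior described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Finding` record, the severity scale, the `build_gate` function, and the placeholder CVE identifiers are all hypothetical and do not reflect the output format of any particular scanner.

```python
from dataclasses import dataclass

# Severity ranking assumed for this sketch; real scanners define their own scales.
SEVERITY_RANK = {"LOW": 1, "MEDIUM": 2, "HIGH": 3, "CRITICAL": 4}


@dataclass(frozen=True)
class Finding:
    cve_id: str    # placeholder identifier, not a real CVE
    package: str   # library or binary found in an image layer
    severity: str  # one of the SEVERITY_RANK keys


def blocking_findings(findings, threshold="HIGH"):
    """Return the findings at or above the threshold severity."""
    limit = SEVERITY_RANK[threshold]
    return [f for f in findings if SEVERITY_RANK[f.severity] >= limit]


def build_gate(findings, threshold="HIGH"):
    """Return True if the build may proceed, False if it must fail."""
    return not blocking_findings(findings, threshold)


# Hypothetical scan report for one container image.
report = [
    Finding("CVE-0000-0001", "example-tls-lib", "CRITICAL"),
    Finding("CVE-0000-0002", "example-json-lib", "LOW"),
]

if build_gate(report):
    print("build passed: image may be pushed to the registry")
else:
    blocked = ", ".join(f.cve_id for f in blocking_findings(report))
    print(f"build failed: {blocked}")
```

In a real pipeline the equivalent check would typically end the job with a non-zero exit status, so the image is never pushed to the registry.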

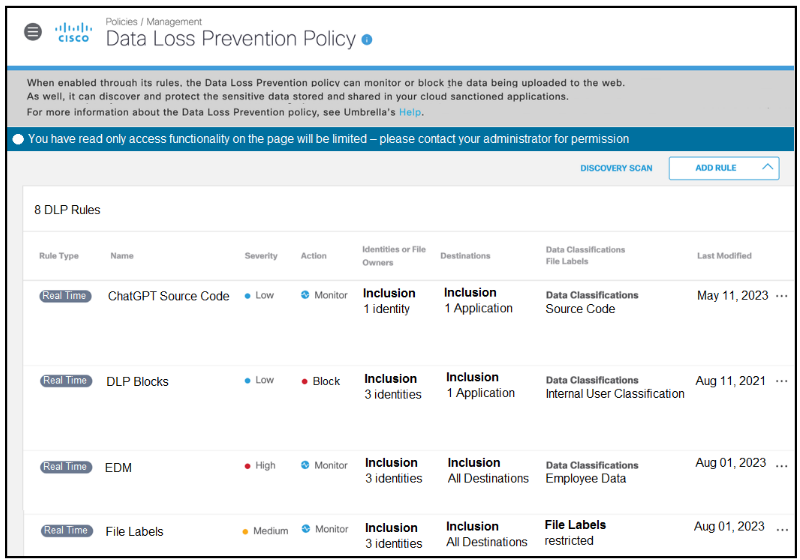

Refer to the exhibit.

A software developer noticed that the application source code had been found on the internet. To prevent such an incident from happening again, the developer applied a DLP policy intended to prevent source code from being uploaded to generative AI tools such as ChatGPT. When testing the policy, the developer noticed that source code can still be uploaded. Which action must the developer take to prevent this issue?

Answer : D

In the provided exhibit of the Cisco Data Loss Prevention (DLP) Policy interface (likely within Cisco Umbrella or a similar cloud security gateway), the reason for the policy's failure to stop the upload is clearly visible in the 'Action' column. The rule named 'ChatGPT Source Code' is currently configured with the action set to Monitor.

According to the Cisco SDSI v1.0 objectives regarding application and data security, the Monitor action is designed for visibility and auditing. It allows the traffic to pass through while generating a log entry for security analysts to review. This is often used during an initial 'discovery' phase to understand how data is moving without disrupting business processes. However, to fulfill the requirement of preventing the unauthorized upload of sensitive data, such as application source code, the policy must enforce rather than only observe.

By selecting Option D, the developer changes the action from 'Monitor' to 'Block'. In 'Block' mode, the DLP engine inspects the web request to ChatGPT for content matching the 'Source Code' classification and stops the upload if a match is found, thereby preventing the data from leaving the corporate environment. While moving rules (Option B) can resolve conflicts if a 'Block' rule is superseded by an 'Allow' rule higher in the list, the primary issue here is the non-restrictive action of the specific rule itself. Modifying data classifications (Option C) is unnecessary if the engine is already identifying the source code correctly, and the scenario gives no indication of a classification problem. Changing the action to Block is the definitive step to protect data confidentiality and prevent intellectual property theft.
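The difference between the two actions can be illustrated with a small Python model. It is a sketch, not the behavior of any Cisco product: the `DlpRule` record, the `evaluate_upload` function, and the first-match rule ordering are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DlpRule:
    name: str
    destination: str     # application the rule applies to
    classification: str  # data classification the rule matches
    action: str          # "monitor" or "block"


def evaluate_upload(rules, destination, classification):
    """Return (allowed, logged) for an upload, using first-match semantics."""
    for rule in rules:
        if rule.destination == destination and rule.classification == classification:
            # Both actions record the event; only "block" stops the upload.
            return rule.action != "block", True
    # No rule matched: the upload passes and no DLP event is recorded.
    return True, False


monitor_rule = DlpRule("ChatGPT Source Code", "ChatGPT", "Source Code", "monitor")
block_rule = DlpRule("ChatGPT Source Code", "ChatGPT", "Source Code", "block")

print(evaluate_upload([monitor_rule], "ChatGPT", "Source Code"))  # (True, True)
print(evaluate_upload([block_rule], "ChatGPT", "Source Code"))    # (False, True)
```

With the monitor rule the upload is logged but still allowed, which matches what the developer observed during testing.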

A company hosts multiple applications in a Kubernetes environment, named app01, app02, and so on. An app01 user could access app02 data because no security measures were implemented. The administrator decided to place each application in a separate namespace and ensure that the namespaces are completely isolated and cannot communicate with each other. Which solution must be used to accomplish the task?

Answer : C

In a Kubernetes environment, Namespaces provide a logical partition for resources but do not, by default, provide network isolation. To prevent 'app01' from communicating with 'app02,' a NetworkPolicy must be implemented. NetworkPolicies act as the Layer 3/4 distributed firewall for the cluster, allowing administrators to define explicit rules for ingress and egress traffic between pods and namespaces.

To achieve complete isolation, a common design pattern is to implement a 'deny-all' default policy for each namespace and then explicitly allow only necessary traffic. This aligns with the Cisco SAFE architectural principle of micro-segmentation. While RoleBinding (Option B) manages permissions for the Kubernetes API (who can create or delete pods), it does not control the actual network traffic between those pods. HTTPRoute (Option A) and Gateway (Option D) are components of the Kubernetes Gateway API used for managing external traffic routing and load balancing, rather than internal pod-to-pod isolation. By deploying NetworkPolicies, the administrator ensures that the 'blast radius' of a compromised application is contained within its own namespace, fulfilling a core objective of securing cloud-native application infrastructure.
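As an illustration of the isolation pattern, the following Python sketch builds a NetworkPolicy manifest that selects every pod in a namespace and allows traffic only to and from pods in that same namespace. The function name `isolate_namespace` and the policy name are hypothetical. The sketch also assumes the cluster's network plugin enforces NetworkPolicy, and a real deployment would usually need an additional egress rule for cluster DNS.

```python
import json


def isolate_namespace(namespace):
    """Build a NetworkPolicy manifest that confines traffic to one namespace."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "isolate-namespace", "namespace": namespace},
        "spec": {
            # An empty podSelector selects every pod in the policy's namespace.
            "podSelector": {},
            "policyTypes": ["Ingress", "Egress"],
            # A peer podSelector with no namespaceSelector matches only pods
            # in the policy's own namespace, so cross-namespace traffic is denied.
            "ingress": [{"from": [{"podSelector": {}}]}],
            "egress": [{"to": [{"podSelector": {}}]}],
        },
    }


# One policy per application namespace; kubectl accepts JSON manifests.
for ns in ("app01", "app02"):
    print(json.dumps(isolate_namespace(ns), indent=2))
```

Each printed manifest could be saved to a file and applied with `kubectl apply -f`, one per namespace.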

========

A telecommunications company recently introduced a hybrid working model. Based on the new policy, employees can work remotely for 2 days per week if corporate equipment is used. The IT department is preparing corporate laptops to support users during the remote working days. Which solution must the IT department implement that provides secure connectivity to corporate resources and protects sensitive corporate data even if a laptop is stolen?

Answer : A

The Cisco Secure Client (formerly AnyConnect) is the comprehensive solution designed to handle the complexities of a hybrid workforce. To meet the company's requirements, Secure Client provides a secure VPN tunnel (SSL or IPsec) that ensures all traffic between the remote laptop and corporate resources is encrypted and authenticated.

For the scenario where a laptop is stolen, Secure Client contributes through its endpoint security modules. While it primarily handles secure connectivity, it is also the platform that hosts features such as Always-On VPN and posture checks that can verify disk encryption status before access is granted. According to Cisco Security Infrastructure design principles, Secure Client acts as the unified agent on the endpoint that maintains the security posture and connectivity regardless of the user's location.

While Cisco Duo (Option B) provides essential Multi-Factor Authentication (MFA) to verify the user's identity, it does not provide the encrypted tunnel for data transit. ISE Posture (Option C) is a feature (often delivered via Secure Client) that checks the health of the device but doesn't provide the connectivity itself. Umbrella (Option D) protects the user from malicious sites and provides a roaming client for DNS/web security, but it does not replace the requirement for a secure tunnel to private corporate resources. Therefore, Secure Client is the holistic solution that bridges the gap between the remote user and the corporate data center while ensuring that the device remains under the organization's security umbrella.

A financial company is focused on proactively protecting sensitive data stored on its devices. The company recognizes the potential risks associated with lost or stolen devices and wants a solution that ensures that if an unauthorized user accesses a device, the data it contains cannot be read or misused. The solution includes implementing a strategy that renders data unreadable without user authentication. Which solution meets the requirement?

Answer : C

For a financial company, protecting 'data at rest' is a critical requirement of the Cisco Security Infrastructure blueprint. While physical security and BIOS-level protections have their place, Data encryption on disk (such as BitLocker, FileVault, or hardware-encrypted drives) is the only solution that fulfills the requirement of rendering the actual data unreadable if the device is lost or stolen.

Disk encryption uses cryptographic algorithms to transform readable data into ciphertext. Without the correct decryption key, which is typically released only after successful user authentication, the data remains a meaningless string of characters even if the hard drive is removed and connected to a different machine. A Kensington Lock (Option A) is a physical deterrent to prevent theft but does not protect the data if the lock is cut or the device is stolen. A BIOS password (Option B) can prevent the OS from booting but does not stop an attacker from reading the data directly from the storage media. GPS tracking (Option D) helps in recovery but does not prevent unauthorized data access in the interim. Implementing full-disk encryption aligns with the Cisco SAFE principle of pervasive data protection and ensures compliance with financial regulations regarding the safeguarding of sensitive client information on mobile endpoints.
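The idea that data stays unreadable until the user authenticates can be shown with a toy Python sketch that derives a key from a passphrase and uses it to scramble stored bytes. This is deliberately simplified and is not how BitLocker or FileVault work internally: the HMAC-based keystream, the function names, the iteration count, and the sample data are assumptions chosen so the example runs with the standard library only. It must not be used to protect real data.

```python
import hashlib
import hmac
import os


def derive_key(passphrase: str, salt: bytes) -> bytes:
    """Derive a 32-byte key from the user's passphrase with PBKDF2-HMAC-SHA256."""
    return hashlib.pbkdf2_hmac("sha256", passphrase.encode("utf-8"), salt, 100_000)


def keystream_xor(key: bytes, data: bytes) -> bytes:
    """XOR data with an HMAC-SHA256 counter keystream (toy cipher, demo only)."""
    out = bytearray()
    for offset in range(0, len(data), 32):
        counter = (offset // 32).to_bytes(8, "big")
        block = hmac.new(key, counter, hashlib.sha256).digest()
        chunk = data[offset:offset + 32]
        out.extend(b ^ k for b, k in zip(chunk, block))
    return bytes(out)


salt = os.urandom(16)          # stored next to the ciphertext, not secret
secret = b"account=12345678"   # hypothetical sensitive record

stored = keystream_xor(derive_key("correct passphrase", salt), secret)

# The right passphrase recovers the data; a wrong one yields unrelated bytes.
print(keystream_xor(derive_key("correct passphrase", salt), stored) == secret)
print(keystream_xor(derive_key("wrong guess", salt), stored) == secret)
```

Because the XOR operation is its own inverse, the same function both scrambles and recovers the data, and only the derived key decides whether the result is readable.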

========

After deploying a new API, the security team must identify which components of the application are exposed to the internet and whether there are application authentication risks. Which technology must be deployed to discover the application's services and monitor for authentication issues?

Answer : B

Securing APIs requires visibility into the 'runtime' behavior of the application. API trace analysis (often part of an API Security solution like Cisco Panoptica) is the technology used to automatically discover API endpoints and analyze the traffic flowing through them. This process identifies 'shadow APIs' (undocumented endpoints) that are exposed to the internet and inspects the headers and payloads for authentication risks such as missing tokens, as well as related authorization flaws such as broken object-level authorization (BOLA).

By monitoring actual traffic traces, the security team can confirm if the API is following the intended security design or if it is leaking sensitive data due to poor authentication implementation. Cloud Security Posture Management (CSPM) (Option A) focuses on the configuration of the cloud infrastructure (like an open S3 bucket) rather than the internal logic of an API's authentication. Secret scanning (Option C) is a 'shift-left' technique used to find hardcoded passwords in source code during the build phase, not for monitoring live traffic. Cloud Workload Protection (CWPP) (Option D) focuses on protecting the underlying host or container from malware and exploits. Only API trace analysis provides the specific visibility into service discovery and application-layer authentication health required in the Cisco SDSI v1.0 objectives for modern DevSecOps environments.
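A minimal Python sketch of the two checks described above (endpoint discovery and authentication monitoring) is shown here. The `Trace` record, the `analyze` function, and the sample endpoints are hypothetical; a real product works on captured traffic rather than a hand-written list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Trace:
    method: str
    path: str
    has_auth_header: bool  # whether the request carried an Authorization header


def analyze(traces, documented):
    """Return (shadow_endpoints, unauthenticated_endpoints) as sorted lists."""
    shadow = set()
    unauthenticated = set()
    for t in traces:
        endpoint = (t.method, t.path)
        if endpoint not in documented:
            shadow.add(endpoint)           # seen in traffic but not in the spec
        if not t.has_auth_header:
            unauthenticated.add(endpoint)  # served without credentials
    return sorted(shadow), sorted(unauthenticated)


# Endpoints the team believes it has published (hypothetical).
documented = {("GET", "/v1/orders"), ("POST", "/v1/orders")}

# Observed runtime traffic (hypothetical).
traces = [
    Trace("GET", "/v1/orders", True),
    Trace("POST", "/v1/orders", False),
    Trace("GET", "/v1/debug/users", False),
]

shadow, unauthenticated = analyze(traces, documented)
print("shadow endpoints:", shadow)
print("unauthenticated calls:", unauthenticated)
```

Here the undocumented `/v1/debug/users` endpoint is reported as a shadow API, and both requests that arrived without credentials are flagged for review.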

Which tool must be used to prioritize incidents by a SOC?

Answer : A

A Security Operations Center (SOC) is often overwhelmed by thousands of alerts from various security tools. The primary tool used to aggregate, correlate, and, most importantly, prioritize these incidents is the Security Information and Event Management (SIEM) system. According to the Cisco SDSI domain on Risk, Events, and Requirements, a SIEM acts as the central brain of the SOC.

A SIEM (such as Splunk Enterprise Security) ingests logs from firewalls, endpoints, and cloud services. It uses correlation rules and risk-scoring algorithms to distinguish between low-priority 'noise' and critical security incidents. For example, a single failed login might be ignored, but ten failed logins followed by a successful one and a large data transfer would be escalated as a high-priority incident. Endpoint Detection and Response (EDR) (Option B) and Endpoint Protection Platforms (EPP) (Option D) provide deep visibility and protection on individual hosts but lack the cross-platform correlation needed to prioritize organizational risk. CloudWatch (Option C) is a monitoring service for AWS resources but does not function as a multi-source security correlation engine. By using a SIEM, SOC analysts can focus their limited time on the most impactful threats, ensuring a more efficient and effective incident response process.
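The failed-login example can be expressed as a simple correlation rule in Python. This is a sketch of the idea only: the `Event` record, the `prioritize` function, the event kinds, and both thresholds are assumptions for illustration, not the rule syntax of any SIEM product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    user: str
    kind: str           # "login_failed", "login_ok", or "data_transfer"
    bytes_out: int = 0  # only meaningful for "data_transfer"


def prioritize(events, failed_threshold=10, transfer_threshold=100_000_000):
    """Return {user: priority} for a time-ordered list of events."""
    failed = {}         # consecutive failed logins per user
    suspicious = set()  # users whose success followed a burst of failures
    priority = {}
    for e in events:
        priority.setdefault(e.user, "low")
        if e.kind == "login_failed":
            failed[e.user] = failed.get(e.user, 0) + 1
        elif e.kind == "login_ok":
            if failed.get(e.user, 0) >= failed_threshold:
                suspicious.add(e.user)
            failed[e.user] = 0
        elif e.kind == "data_transfer":
            # Escalate only when all three signals line up for the same user.
            if e.user in suspicious and e.bytes_out >= transfer_threshold:
                priority[e.user] = "high"
    return priority


events = (
    [Event("alice", "login_failed")] * 10
    + [Event("alice", "login_ok"), Event("alice", "data_transfer", 500_000_000)]
    + [Event("bob", "login_failed"), Event("bob", "login_ok")]
)

print(prioritize(events))  # {'alice': 'high', 'bob': 'low'}
```

The single failed login for `bob` stays at low priority, while the combined pattern for `alice` is escalated, which mirrors the noise-versus-incident distinction described above.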

========