Databricks Certified Associate Developer for Apache Spark 3.5 - Python Exam Questions

A Spark engineer must select an appropriate deployment mode for the Spark jobs.

What is the benefit of using cluster mode in Apache Spark?

Answer : D

In Apache Spark's cluster mode:

'The driver program runs on the cluster's worker node instead of the client's local machine. This allows the driver to be close to the data and other executors, reducing network overhead and improving fault tolerance for production jobs.' (Source: Apache Spark documentation -- Cluster Mode Overview)

This deployment is ideal for production environments where the job is submitted from a gateway node, and Spark manages the driver lifecycle on the cluster itself.

Option A is partially true but less specific than D.

Option B is incorrect: the driver never executes all tasks; executors handle distributed tasks.

Option C describes client mode, not cluster mode.
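The deployment mode is chosen at submission time with the `--deploy-mode` flag of `spark-submit`. The following is a minimal sketch that only assembles the command line without running it; the helper `build_spark_submit`, the application file `etl_job.py`, and the choice of YARN as the cluster manager are hypothetical and used purely for illustration.

```python
import shlex


def build_spark_submit(app_path, deploy_mode="cluster", master="yarn"):
    """Assemble a spark-submit command line as a list of arguments.

    Hypothetical helper: it only builds the argument list and does not
    launch anything.
    """
    if deploy_mode not in ("client", "cluster"):
        raise ValueError("deploy_mode must be 'client' or 'cluster'")
    return [
        "spark-submit",
        "--master", master,
        "--deploy-mode", deploy_mode,  # 'cluster' places the driver on the cluster
        app_path,
    ]


# etl_job.py is a placeholder application name.
cmd = build_spark_submit("etl_job.py")
print(shlex.join(cmd))
```

Switching `deploy_mode` to `"client"` would keep the driver on the submitting machine, which is the behavior Option C describes.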

A Data Analyst needs to retrieve employees with 5 or more years of tenure.

Which code snippet filters and shows the list?

Answer : A

To filter rows based on a condition and display them in Spark, use filter(...).show():

employees_df.filter(employees_df.tenure >= 5).show()

Option A is correct and shows the results.

Option B filters but doesn't display them.

Option C uses Python's built-in filter, not Spark.

Option D collects the results to the driver, which is unnecessary if .show() is sufficient.

Final Answer: A

What is the benefit of using Pandas on Spark for data transformations?

Answer : D

Pandas API on Spark (formerly Koalas) offers:

Familiar Pandas-like syntax

Distributed execution using Spark under the hood

Scalability for large datasets across the cluster

It provides the power of Spark while retaining the productivity of Pandas.

A data engineer is running a Spark job to process a dataset of 1 TB stored in distributed storage. The cluster has 10 nodes, each with 16 CPUs. Spark UI shows:

Low number of Active Tasks

Many tasks complete in milliseconds

Fewer tasks than available CPUs

Which approach should be used to adjust the partitioning for optimal resource allocation?

Answer : D

Spark's best practice is to estimate partition count based on data volume and a reasonable partition size, typically 128 MB to 256 MB per partition.

With 1 TB of data: 1 TB / 128 MB ≈ 8,192 partitions (roughly 8,000).

This ensures that tasks are distributed across available CPUs for parallelism and that each task processes an optimal volume of data.

Option A (equal to cores) may result in partitions that are too large.

Option B (fixed 200) is arbitrary and may underutilize the cluster.

Option C (nodes) gives too few partitions (10), limiting parallelism.
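The arithmetic behind this estimate can be sketched in a few lines of plain Python. The helper name `estimate_partitions` is hypothetical, and binary units (1 TB = 1024^4 bytes, 1 MB = 1024^2 bytes) are an assumption.

```python
MB = 1024 ** 2
TB = 1024 ** 4


def estimate_partitions(data_bytes, target_partition_bytes=128 * MB):
    """Return the number of partitions needed so that each one holds
    at most target_partition_bytes (ceiling division)."""
    return -(-data_bytes // target_partition_bytes)


total_cores = 10 * 16  # 10 nodes with 16 CPUs each, from the question

n_128 = estimate_partitions(1 * TB)            # 128 MB target
n_256 = estimate_partitions(1 * TB, 256 * MB)  # 256 MB target

print(n_128, n_256, total_cores)  # 8192 4096 160
```

Either estimate is far larger than the 160 available cores, so every core stays busy across many waves of tasks while each task still processes a reasonably sized slice of data.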

Which configuration can be enabled to optimize the conversion between Pandas and PySpark DataFrames using Apache Arrow?

Answer : B

Apache Arrow is used under the hood to optimize conversion between Pandas and PySpark DataFrames. The correct configuration setting is:

spark.conf.set('spark.sql.execution.arrow.pyspark.enabled', 'true')

From the official documentation:

"This configuration must be enabled to allow for vectorized execution and efficient conversion between Pandas and PySpark using Arrow."

Option B is correct.

Options A, C, and D are invalid config keys and not recognized by Spark.

Final Answer: B

A data scientist wants each record in the DataFrame to contain:

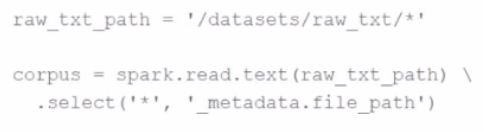

The first attempt at the code does read the text files but each record contains a single line. This code is shown below:

The entire contents of a file

The full file path

The issue: reading line-by-line rather than full text per file.

Code:

corpus = spark.read.text("/datasets/raw_txt/*") \

.select('*', '_metadata.file_path')

Which change will ensure one record per file?

Answer : A

To read each file as a single record, use:

spark.read.text(path, wholetext=True)

This ensures that Spark reads the entire file contents into one row.

A data scientist at an e-commerce company is working with user data obtained from its subscriber database and has stored the data in a DataFrame df_user.

Before further processing, the data scientist wants to create another DataFrame df_user_non_pii and store only the non-PII columns. The PII columns in df_user are name, email, and birthdate.

Which code snippet can be used to meet this requirement?

A.

df_user_non_pii = df_user.drop("name", "email", "birthdate")

B.

df_user_non_pii = df_user.dropFields("name", "email", "birthdate")

C.

df_user_non_pii = df_user.select("name", "email", "birthdate")

D.

df_user_non_pii = df_user.remove("name", "email", "birthdate")

Answer : A

To exclude sensitive (PII) columns from a DataFrame, the simplest method is to call .drop() and pass the names of the columns to remove as separate arguments.

Correct syntax:

df_user_non_pii = df_user.drop('name', 'email', 'birthdate')

This creates a new DataFrame containing all remaining columns.

Why the other options are incorrect:

B: .dropFields() is not a DataFrame method. It is a Column method that removes fields from a struct column.

C: .select() would keep only PII columns, not remove them.

D: .remove() does not exist in Spark DataFrame API.

PySpark DataFrame API: drop() method for removing multiple columns.

Databricks Exam Guide (June 2025): Section "Developing Apache Spark DataFrame/DataSet API Applications", covering data manipulation, selecting, and dropping columns.