Google Cloud Associate Data Practitioner Exam Questions

You are building a batch data pipeline to process 100 GB of structured data from multiple sources for daily reporting. You need to transform and standardize the data prior to loading the data to ensure that it is stored in a single dataset. You want to use a low-code solution that can be easily built and managed. What should you do?

Answer : B


Why B is correct:

Cloud Data Fusion is a fully managed, cloud-native data integration service for building and managing ETL/ELT data pipelines.

It provides a graphical interface for building pipelines without coding, making it a low-code solution.

Cloud Data Fusion is well suited to ingesting, transforming, and loading data into BigQuery.

Why other options are incorrect:

A: Looker Studio is for visualization, not data transformation.

C: Cloud SQL is a relational database, not ideal for large-scale analytical data.

D: Cloud Run runs stateless containerized applications and requires custom code, so it is not a low-code way to build data pipelines.

Cloud Data Fusion: https://cloud.google.com/data-fusion/docs

Your team needs to analyze large datasets stored in BigQuery to identify trends in user behavior. The analysis will involve complex statistical calculations, Python packages, and visualizations. You need to recommend a managed collaborative environment to develop and share the analysis. What should you recommend?

Answer : A

Using a Colab Enterprise notebook connected to BigQuery provides a managed, collaborative environment ideal for complex statistical calculations, Python packages, and visualizations. Colab Enterprise supports Python libraries for advanced analytics and offers seamless integration with BigQuery for querying large datasets. It allows teams to collaboratively develop and share analyses while taking advantage of its visualization capabilities. This approach is particularly suitable for tasks involving sophisticated computations and custom visualizations.

Your team uses Google Sheets to track budget data that is updated daily. The team wants to compare budget data against actual cost data, which is stored in a BigQuery table. You need to create a solution that calculates the difference between each day's budget and actual costs. You want to ensure that your team has access to daily-updated results in Google Sheets. What should you do?

Answer : D


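Why D is correct:

Creating a BigQuery external table over the Google Sheet lets BigQuery read the sheet's current contents at query time, so daily budget updates are reflected without reloading any data.

Joining the external table with the actual cost table in BigQuery calculates the difference between each day's budget and actual costs.

Connected Sheets lets the team access and analyze the query results directly in Google Sheets, and the results can be refreshed so that they stay up to date.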

Why other options are incorrect:

A: Saving as a CSV file loses the live connection and daily updates.

B: Downloading and uploading as a CSV file adds unnecessary steps and loses the live connection.

C: Same issue as B, losing the live connection.

BigQuery External Tables: https://cloud.google.com/bigquery/docs/external-tables

Connected Sheets: https://support.google.com/sheets/answer/9054368?hl=en
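The join described above can be sketched as follows. This is a minimal illustration only: it uses Python's built-in sqlite3 module as a local stand-in for BigQuery, and the table names (budget, actual_costs) and column names (day, budget, actual_cost) are hypothetical, since the source does not give the actual schema. In BigQuery, the budget table would be the external table defined over the Google Sheet.

```python
import sqlite3


def budget_vs_actual(budget_rows, actual_rows):
    """Join daily budget and actual cost rows and return the per-day difference.

    sqlite3 is only a local stand-in here; table and column names are
    hypothetical and not taken from the exam question.
    """
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE budget (day TEXT, budget REAL)")
    conn.execute("CREATE TABLE actual_costs (day TEXT, actual_cost REAL)")
    conn.executemany("INSERT INTO budget VALUES (?, ?)", budget_rows)
    conn.executemany("INSERT INTO actual_costs VALUES (?, ?)", actual_rows)

    # One output row per day that appears in both tables.
    rows = conn.execute(
        """
        SELECT b.day,
               b.budget,
               a.actual_cost,
               b.budget - a.actual_cost AS difference
        FROM budget AS b
        JOIN actual_costs AS a ON a.day = b.day
        ORDER BY b.day
        """
    ).fetchall()
    conn.close()
    return rows


if __name__ == "__main__":
    budget = [("2024-01-01", 100.0), ("2024-01-02", 120.0)]
    actual = [("2024-01-01", 90.0), ("2024-01-02", 150.0)]
    for row in budget_vs_actual(budget, actual):
        print(row)
```

A positive difference means the day came in under budget; a negative one means it went over.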

Your retail company wants to predict customer churn using historical purchase data stored in BigQuery. The dataset includes customer demographics, purchase history, and a label indicating whether the customer churned or not. You want to build a machine learning model to identify customers at risk of churning. You need to create and train a logistic regression model for predicting customer churn, using the customer_data table with the churned column as the target label. Which BigQuery ML query should you use?

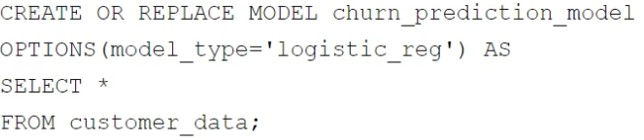

A)

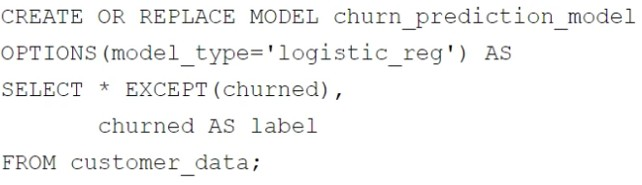

B)

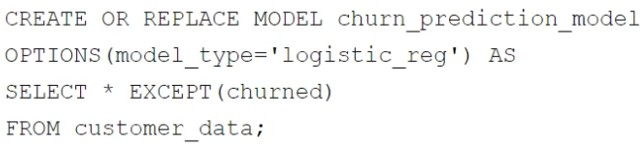

C)

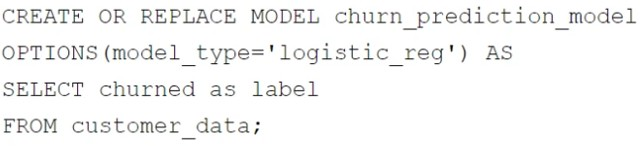

D)

Answer : B

In BigQuery ML, when creating a logistic regression model to predict customer churn, the correct query should:

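Exclude the target label column (in this case, churned) from the feature columns, so that it serves only as the training target and not as a feature input.

Expose the target column under the name label, because BigQuery ML treats the column named label as the target by default. Renaming the column (churned AS label) is one way to do this; setting the input_label_cols option is the alternative.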

The chosen query satisfies these requirements:

SELECT * EXCEPT(churned), churned AS label: Excludes churned from features and renames it to label.

The OPTIONS(model_type='logistic_reg') clause specifies that a logistic regression model is being trained.

This setup ensures the model is correctly trained using the features in the dataset while targeting the churned column for predictions.
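The answer options are images that are not reproduced here, so the statement below is a reconstruction from the two fragments quoted in the explanation, not the exact text of option B. The helper function, the dataset name mydataset, and the model name churn_model are assumptions made for illustration. The function only assembles the SQL string and does not call BigQuery.

```python
def build_churn_model_sql(model_name: str, source_table: str,
                          label_col: str = "churned") -> str:
    """Assemble a BigQuery ML CREATE MODEL statement for logistic regression.

    The label column is excluded from the feature list and re-added under
    the name `label`, which BigQuery ML uses as the target by default.
    """
    return (
        f"CREATE OR REPLACE MODEL `{model_name}`\n"
        "OPTIONS(model_type='logistic_reg') AS\n"
        f"SELECT * EXCEPT({label_col}), {label_col} AS label\n"
        f"FROM `{source_table}`"
    )


if __name__ == "__main__":
    # Hypothetical dataset and model names.
    print(build_churn_model_sql("mydataset.churn_model",
                                "mydataset.customer_data"))
```

The resulting string could be pasted into the BigQuery console or submitted through a client library.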

Your company's customer support audio files are stored in a Cloud Storage bucket. You plan to analyze the audio files' metadata and file content within BigQuery to create inference by using BigQuery ML. You need to create a corresponding table in BigQuery that represents the bucket containing the audio files. What should you do?

Answer : D

To analyze audio files stored in a Cloud Storage bucket and represent them in BigQuery, you should create an object table. Object tables in BigQuery are designed to represent objects stored in Cloud Storage, including their metadata. This enables you to query the metadata of audio files directly from BigQuery without duplicating the data. Once the object table is created, you can use it in conjunction with other BigQuery ML workflows for inference and analysis.
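As a rough sketch, an object table is created with a CREATE EXTERNAL TABLE statement that names a Cloud resource connection and sets object_metadata to 'SIMPLE'. The helper below only builds that statement as a string. The table name, connection name, and bucket path are hypothetical placeholders, not values from the question.

```python
def build_object_table_sql(table: str, connection: str, uri: str) -> str:
    """Assemble the DDL for a BigQuery object table over Cloud Storage objects.

    All three arguments are placeholders supplied by the caller; nothing
    here contacts BigQuery or Cloud Storage.
    """
    return (
        f"CREATE EXTERNAL TABLE `{table}`\n"
        f"WITH CONNECTION `{connection}`\n"
        "OPTIONS(\n"
        "  object_metadata = 'SIMPLE',\n"
        f"  uris = ['{uri}']\n"
        ")"
    )


if __name__ == "__main__":
    # Hypothetical names for illustration only.
    print(build_object_table_sql(
        "mydataset.support_audio",
        "us.my-connection",
        "gs://my-support-audio-bucket/*",
    ))
```

Each row of the resulting table represents one object in the bucket, with columns for metadata such as the object's URI and size.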

You are working with a large dataset of customer reviews stored in Cloud Storage. The dataset contains several inconsistencies, such as missing values, incorrect data types, and duplicate entries. You need to clean the data to ensure that it is accurate and consistent before using it for analysis. What should you do?

Answer : B

Using BigQuery to batch load the data and perform cleaning and analysis with SQL is the best approach for this scenario. BigQuery provides powerful SQL capabilities to handle missing values, enforce correct data types, and remove duplicates efficiently. This method simplifies the pipeline by leveraging BigQuery's built-in processing power for both cleaning and analysis, reducing the need for additional tools or services and minimizing complexity.
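The three cleaning steps named above (missing values, data types, duplicates) can be illustrated with a single SQL query. The sketch below runs it against Python's built-in sqlite3 module purely as a local stand-in for BigQuery. The raw_reviews table and its columns are hypothetical, and BigQuery's dialect differs in places (for example, SAFE_CAST is the usual choice there for tolerant type conversion).

```python
import sqlite3


def clean_reviews(raw_rows):
    """Deduplicate rows, cast ratings to integers, and fill in missing text.

    sqlite3 stands in for BigQuery; the schema is a hypothetical example.
    """
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE raw_reviews (review_id TEXT, rating TEXT, review_text TEXT)"
    )
    conn.executemany("INSERT INTO raw_reviews VALUES (?, ?, ?)", raw_rows)

    rows = conn.execute(
        """
        SELECT DISTINCT                              -- remove exact duplicates
            review_id,
            CAST(rating AS INTEGER) AS rating,       -- enforce a numeric type
            COALESCE(NULLIF(TRIM(review_text), ''),  -- treat blank text as missing
                     '(no text)') AS review_text
        FROM raw_reviews
        WHERE review_id IS NOT NULL                  -- drop rows without a key
        ORDER BY review_id
        """
    ).fetchall()
    conn.close()
    return rows


if __name__ == "__main__":
    raw = [
        ("r1", "5", "Great"),
        ("r1", "5", "Great"),   # duplicate entry
        ("r2", "3", None),      # missing review text
        (None, "4", "orphan"),  # missing identifier
    ]
    for row in clean_reviews(raw):
        print(row)
```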

Your team is building several data pipelines that contain a collection of complex tasks and dependencies that you want to execute on a schedule, in a specific order. The tasks and dependencies consist of files in Cloud Storage, Apache Spark jobs, and data in BigQuery. You need to design a system that can schedule and automate these data processing tasks using a fully managed approach. What should you do?

Answer : C

Using Cloud Composer to create Directed Acyclic Graphs (DAGs) is the best solution because it is a fully managed, scalable workflow orchestration service based on Apache Airflow. Cloud Composer allows you to define complex task dependencies and schedules while integrating seamlessly with Google Cloud services such as Cloud Storage, BigQuery, and Dataproc for Apache Spark jobs. This approach minimizes operational overhead, supports scheduling and automation, and provides an efficient and fully managed way to orchestrate your data pipelines.

Extract from Google Documentation: From 'Cloud Composer Overview' (https://cloud.google.com/composer/docs): 'Cloud Composer is a fully managed workflow orchestration service built on Apache Airflow, enabling you to schedule and automate complex data pipelines with dependencies across Google Cloud services like Cloud Storage, Dataproc, and BigQuery.'

Reference: Google Cloud Documentation - 'Cloud Composer' (https://cloud.google.com/composer).
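To make the idea of a DAG concrete, the sketch below uses Python's standard-library graphlib module to derive a valid execution order from task dependencies. It is not the Airflow or Cloud Composer API. It only illustrates the "run tasks in dependency order" concept that an orchestrator implements, and the task names are hypothetical.

```python
from graphlib import TopologicalSorter


def execution_order(dependencies):
    """Return one valid run order for tasks.

    `dependencies` maps each task name to the set of tasks that must
    finish before it starts. A cycle raises graphlib.CycleError, which
    is why the graph must be acyclic.
    """
    return list(TopologicalSorter(dependencies).static_order())


if __name__ == "__main__":
    # Hypothetical pipeline: a Cloud Storage file arrives, a Spark job
    # processes it, and the result is loaded into BigQuery for reporting.
    pipeline = {
        "run_spark_job": {"wait_for_gcs_file"},
        "load_to_bigquery": {"run_spark_job"},
        "build_report_table": {"load_to_bigquery"},
    }
    print(execution_order(pipeline))
```

In Cloud Composer, the same dependencies would be declared in an Airflow DAG file, and the service would handle scheduling, retries, and execution.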