Google Professional Machine Learning Engineer Exam Questions

You are developing models to classify customer support emails. You created models with TensorFlow Estimators using small datasets on your on-premises system, but you now need to train the models using large datasets to ensure high performance. You will port your models to Google Cloud and want to minimize code refactoring and infrastructure overhead for easier migration from on-prem to cloud. What should you do?

Answer : A

Vertex AI Platform is a unified platform for building and deploying ML models on Google Cloud. It supports both custom and AutoML models, and provides tools and services for ML development such as Vertex AI Pipelines, Vertex AI Vizier, Vertex Explainable AI, and Vertex AI Feature Store. Vertex AI Platform lets you train TensorFlow models with distributed training, which speeds up training and handles large datasets. It also minimizes code refactoring and infrastructure overhead: it is compatible with TensorFlow Estimators, and it provisions, configures, and scales the training resources automatically.

The other options are less suitable for this scenario. Dataproc runs data processing pipelines with Apache Spark and Hadoop, but it is not designed for TensorFlow model training. Managed Instance Groups let you create and manage groups of identical compute instances, but they require more configuration and management than Vertex AI Platform. Kubeflow Pipelines let you create and run ML workflows on Google Kubernetes Engine, but they involve more complexity and code changes than Vertex AI Platform.

Reference:

Vertex AI Platform documentation

Distributed training with Vertex AI Platform
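To make the distributed training setup concrete, here is a minimal sketch of the worker pool configuration a Vertex AI custom training job uses. It assumes a single container image that already holds the Estimator training code. The function name, image URI, machine type, and training flag are hypothetical. The dictionary keys (machine_spec, replica_count, container_spec) follow the Vertex AI worker pool spec format: pool 0 is the chief and pool 1 holds the additional workers.

```python
from typing import Any, Dict, List, Optional


def build_worker_pool_specs(
    image_uri: str,
    machine_type: str = "n1-standard-8",
    extra_workers: int = 2,
    args: Optional[List[str]] = None,
) -> List[Dict[str, Any]]:
    """Build Vertex AI worker pool specs for distributed training.

    Pool 0 holds a single chief replica; pool 1 holds `extra_workers` workers.
    Every replica runs the same container, so the training code is shared.
    """

    def pool(replicas: int) -> Dict[str, Any]:
        # A fresh dict per pool so the specs do not share nested objects
        return {
            "machine_spec": {"machine_type": machine_type},
            "replica_count": replicas,
            "container_spec": {"image_uri": image_uri, "args": list(args or [])},
        }

    specs = [pool(1)]
    if extra_workers > 0:
        specs.append(pool(extra_workers))
    return specs


if __name__ == "__main__":
    # Hypothetical image URI and training flag
    specs = build_worker_pool_specs(
        "us-docker.pkg.dev/my-project/training/email-classifier:latest",
        args=["--train-data=gs://my-bucket/emails/train-*"],
    )
    for i, spec in enumerate(specs):
        print(i, spec["replica_count"], spec["machine_spec"]["machine_type"])
```

With the google-cloud-aiplatform SDK installed and a project configured, the list can be passed as worker_pool_specs to aiplatform.CustomJob(display_name=..., worker_pool_specs=specs) and submitted with job.run(). Vertex AI should then set the TF_CONFIG environment variable on each replica. Estimator-based code reads TF_CONFIG to discover its role in the cluster, which is why little refactoring is needed.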

You have a functioning end-to-end ML pipeline that involves tuning the hyperparameters of your ML model using AI Platform, and then using the best-tuned parameters for training. Hypertuning is taking longer than expected and is delaying the downstream processes. You want to speed up the tuning job without significantly compromising its effectiveness. Which actions should you take?

Choose 2 answers

Answer : C, E

Hyperparameter tuning is the process of finding the optimal values for the parameters of a machine learning model that affect its performance. AI Platform provides a service for hyperparameter tuning that can run multiple trials in parallel and use different search algorithms to find the best combination of hyperparameters. However, hyperparameter tuning can be time-consuming and costly, especially if the search space is large and the model training is complex. Therefore, it is important to optimize the tuning job to reduce the time and resources required.

One way to speed up the tuning job is to set the early stopping parameter to TRUE. The tuning service then automatically stops trials that are unlikely to perform well, based on their intermediate results. This saves time and resources by avoiding computation on trials that are not promising. Early stopping is enabled through the enableTrialEarlyStopping setting in the trainingInput.hyperparameters field of the training job request [1].

Another way to speed up the tuning job is to decrease the maximum number of trials during subsequent training phases. Once the initial phase has narrowed down the promising region of the search space, later phases need fewer trials to refine it, which reduces the time the tuning job takes to converge to a good solution. The maximum number of trials is set in the trainingInput.hyperparameters.maxTrials field of the training job request [1].
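The two settings above can be sketched as the trainingInput.hyperparameters block of an AI Platform Training job request. This is a minimal sketch, assuming a single hypothetical learning_rate parameter and a hypothetical metric tag accuracy; the helper function name is also hypothetical. The field names (goal, hyperparameterMetricTag, maxTrials, maxParallelTrials, enableTrialEarlyStopping, params, resumePreviousJobId) follow the AI Platform Training HyperparameterSpec, but check them against the current API reference before use.

```python
from typing import Any, Dict, Optional


def build_hyperparameter_spec(
    metric_tag: str,
    max_trials: int,
    max_parallel_trials: int,
    early_stopping: bool = True,
    resume_job_id: Optional[str] = None,
) -> Dict[str, Any]:
    """Return a trainingInput.hyperparameters block for an AI Platform Training job."""
    spec: Dict[str, Any] = {
        "goal": "MAXIMIZE",
        "hyperparameterMetricTag": metric_tag,
        "maxTrials": max_trials,
        # Parallelism is kept as-is: lowering it would lengthen the job
        "maxParallelTrials": max_parallel_trials,
        # Lets the service stop trials whose intermediate metrics look unpromising
        "enableTrialEarlyStopping": early_stopping,
        "params": [
            {
                # Hypothetical parameter; its range is not narrowed between phases
                "parameterName": "learning_rate",
                "type": "DOUBLE",
                "minValue": 1e-4,
                "maxValue": 1e-1,
                "scaleType": "UNIT_LOG_SCALE",
            }
        ],
    }
    if resume_job_id:
        # Continue an earlier tuning job's study instead of starting from scratch
        spec["resumePreviousJobId"] = resume_job_id
    return spec


if __name__ == "__main__":
    first_phase = build_hyperparameter_spec("accuracy", max_trials=40, max_parallel_trials=5)
    # Later phase: fewer trials, resuming a hypothetical earlier job
    later_phase = build_hyperparameter_spec(
        "accuracy", max_trials=10, max_parallel_trials=5, resume_job_id="tuning_job_1"
    )
    print(first_phase["maxTrials"], later_phase["maxTrials"])
```

Only maxTrials and the early stopping flag change; the parameter range, parallelism, and search algorithm stay the same, matching the reasoning about which options preserve effectiveness.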

The other options are not effective ways to speed up the tuning job. Decreasing the number of parallel trials will reduce the concurrency of the tuning job and increase the overall time required. Decreasing the range of floating-point values will reduce the diversity of the search space and may miss some optimal solutions. Changing the search algorithm from Bayesian search to random search will reduce the efficiency of the tuning job and may require more trials to find the best solution [1].

References: [1] Hyperparameter tuning overview

You have been asked to build a model using a dataset that is stored in a medium-sized (~10GB) BigQuery table. You need to quickly determine whether this data is suitable for model development. You want to create a one-time report that includes both informative visualizations of data distributions and more sophisticated statistical analyses to share with other ML engineers on your team. You require maximum flexibility to create your report. What should you do?

Answer : A

Option A is correct. Vertex AI Workbench user-managed notebooks are the best way to quickly determine whether the data is suitable for model development and to create a one-time report that combines informative visualizations of data distributions with more sophisticated statistical analyses. Vertex AI Workbench is a service for creating and using notebooks for ML development and experimentation. From a notebook, you can connect to your BigQuery table, query and analyze the data with SQL or Python, and create interactive charts and plots with libraries such as pandas, matplotlib, or seaborn. You can also perform more advanced analysis, such as outlier detection, feature engineering, or hypothesis testing, with libraries such as TensorFlow Data Validation, TensorFlow Transform, or SciPy. You can then export the notebook as a PDF or HTML file and share it with your team. Because you can use any code or library and customize the report as you wish, this option provides maximum flexibility.
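A notebook cell for this kind of report might look like the following minimal sketch. It assumes the table has already been loaded into a pandas DataFrame; in Vertex AI Workbench this could be done with google.cloud.bigquery.Client().query(sql).to_dataframe(), possibly sampling in SQL first for a ~10 GB table. Here a synthetic DataFrame with a hypothetical order_value column stands in for the query result. profile_column is a hypothetical helper; the IQR outlier rule and the D'Agostino-Pearson normality test (scipy.stats.normaltest) are examples of analyses the report could include, not a prescribed set.

```python
import numpy as np
import pandas as pd
from scipy import stats


def profile_column(df: pd.DataFrame, column: str, bins: int = 10) -> dict:
    """Summarize one numeric column: descriptive stats, histogram, outliers, normality test."""
    values = df[column].dropna().to_numpy(dtype=float)
    # Histogram counts and bin edges (the data behind a distribution plot)
    counts, edges = np.histogram(values, bins=bins)
    # IQR rule: flag points more than 1.5 * IQR outside the quartiles
    q1, q3 = np.percentile(values, [25, 75])
    iqr = q3 - q1
    is_outlier = (values < q1 - 1.5 * iqr) | (values > q3 + 1.5 * iqr)
    # D'Agostino-Pearson normality test; a small p-value suggests non-normal data
    _, p_value = stats.normaltest(values)
    return {
        "summary": df[column].describe(),
        "hist_counts": counts,
        "hist_edges": edges,
        "n_outliers": int(is_outlier.sum()),
        "normaltest_p": float(p_value),
    }


if __name__ == "__main__":
    # Synthetic stand-in for a BigQuery query result (hypothetical column name)
    rng = np.random.default_rng(0)
    df = pd.DataFrame({"order_value": rng.exponential(scale=50.0, size=5_000)})
    report = profile_column(df, "order_value")
    print(report["summary"])
    print("outliers:", report["n_outliers"], "normality p-value:", report["normaltest_p"])
    # In the notebook, df["order_value"].hist(bins=30) would draw the histogram.
```

Because everything lives in ordinary Python, adding another test, plot, or table to the report is just another cell, which is the flexibility the other options lack.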

Option B is incorrect because Google Data Studio (now Looker Studio) is not the most flexible way to build this report. Data Studio lets you create and share interactive dashboards and reports using data from sources such as BigQuery, Google Sheets, or Google Analytics. You can connect it to your BigQuery table, explore and visualize the data with charts, tables, or maps, and apply filters, calculations, or aggregations. However, it does not support more sophisticated statistical analyses, such as outlier detection, feature engineering, or hypothesis testing, which are useful when assessing data for model development. Moreover, Data Studio is better suited to recurring reports that are refreshed regularly than to a one-time, static report.

Option C is incorrect because generating the report from the output of TensorFlow Data Validation on Dataflow is neither the quickest nor the most flexible approach. TensorFlow Data Validation is a library for exploring, validating, and monitoring the quality of ML data: it computes descriptive statistics, detects anomalies, infers schemas, and generates data visualizations. Dataflow is a service for creating and running scalable data processing pipelines with Apache Beam, and it can run TensorFlow Data Validation on large datasets, such as those stored in BigQuery. However, this option requires reading the data from BigQuery into a Dataflow pipeline, creating and running the pipeline, and exporting the results, which is more effort than a one-time report needs. It also limits you to the functionality of TensorFlow Data Validation, so you cannot customize the report as freely.

Option D is incorrect because Dataprep is not the most flexible way to build this report. Dataprep is a service for exploring, cleaning, and transforming data for analysis or ML. You can connect it to your BigQuery table, inspect and profile the data with histograms, charts, or summary statistics, and apply transformations such as filtering, joining, splitting, or aggregating. However, Dataprep does not support more sophisticated statistical analyses, such as outlier detection, feature engineering, or hypothesis testing. Moreover, it is better suited to data preparation workflows that run repeatedly than to a one-time, static report.

Vertex AI Workbench documentation

Google Data Studio documentation

TensorFlow Data Validation documentation

Dataflow documentation

Dataprep documentation

BigQuery documentation

pandas documentation

matplotlib documentation

seaborn documentation

TensorFlow Transform documentation

SciPy documentation

Apache Beam documentation

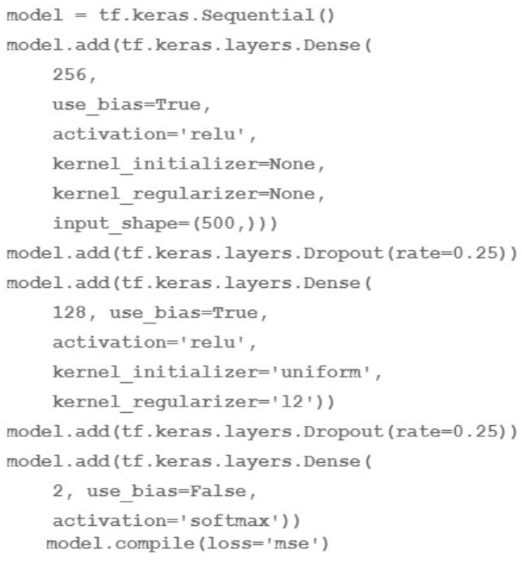

You are going to train a DNN regression model with Keras APIs using this code:

How many trainable weights does your model have?

Answer : B

The number of trainable weights in a Keras Dense layer is the number of input units multiplied by the number of output units (the kernel), plus one bias per output unit when the layer has a bias [1]. Dense layers include a bias by default; a layer has none only if it is created with use_bias=False, and the activation function (softmax or otherwise) does not change the count. In this code, the model has three layers: a dense layer with 256 units and ReLU activation, a dropout layer with a 0.25 rate, and a dense layer with 2 units, softmax activation, and no bias (use_bias=False). The input shape is (500,). Therefore, the number of trainable weights is:

For the first layer: 500 input units * 256 output units + 256 bias units = 128256

For the second layer: The dropout layer has no trainable weights, as it only randomly sets some of its input units to zero during training to prevent overfitting [2].

For the third layer: 256 input units * 2 output units + 0 bias units = 512

For the layers described above, the total number of trainable weights is 128256 + 512 = 128768. This does not match the total of 161024 given for answer B, which equals 500 * 256 + 256 * 128 + 128 * 2: a count that includes an additional 128-unit hidden layer and omits all bias terms.
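To check the layer-by-layer arithmetic, here is a small plain-Python counter. It is a sketch under stated assumptions: DenseSpec and DropoutSpec are hypothetical stand-ins (not Keras classes) that record only what affects the count, and the layer list mirrors the description above rather than the original code. In Keras itself, model.summary() reports the trainable parameter count directly.

```python
from dataclasses import dataclass
from typing import List, Union


@dataclass
class DenseSpec:
    """Stand-in for a Dense layer: only what matters for parameter counting."""
    units: int
    use_bias: bool = True  # Keras Dense layers include a bias by default


@dataclass
class DropoutSpec:
    """Stand-in for a Dropout layer, which has no weights."""
    rate: float


def count_trainable_weights(input_dim: int, layers: List[Union[DenseSpec, DropoutSpec]]) -> int:
    """Sum kernel and bias sizes over a stack of Dense/Dropout layers."""
    total = 0
    n_in = input_dim
    for layer in layers:
        if isinstance(layer, DenseSpec):
            # Kernel has n_in * units entries; a bias adds one entry per unit
            total += n_in * layer.units + (layer.units if layer.use_bias else 0)
            n_in = layer.units
        # DropoutSpec: no weights, and the feature dimension passes through unchanged
    return total


if __name__ == "__main__":
    # The layers as described in the explanation above
    described = [DenseSpec(256), DropoutSpec(0.25), DenseSpec(2, use_bias=False)]
    print(count_trainable_weights(500, described))  # 128768
```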

How to calculate the number of parameters for a Convolutional Neural Network?

Dropout (keras.io)

Your data science team is training a PyTorch model for image classification based on a pre-trained ResNet model. You need to perform hyperparameter tuning to optimize for several parameters. What should you do?

Answer : B

AI Platform supports hyperparameter tuning for PyTorch models using custom containers. This allows you to use any Python dependencies and libraries that are not included in the pre-built AI Platform Training runtime versions. You can also use a pre-trained model such as ResNet as a base for your custom model. To run a hyperparameter tuning job on AI Platform using custom containers, you need to do the following steps:

Create a Dockerfile that defines the container image for your training application. The Dockerfile should install PyTorch and any other dependencies, copy your training code and configuration files, and set the entrypoint for the container.

Build the container image and push it to Container Registry or another accessible registry.

Create a YAML file that defines the configuration for your hyperparameter tuning job. The YAML file should specify the container image URI, the training input and output paths, the hyperparameters to tune, the metric to optimize, and the tuning algorithm and budget.

Submit the hyperparameter tuning job to AI Platform using the gcloud command-line tool or the AI Platform Training API.
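The training application inside the custom container has two tuning-specific jobs: accept the hyperparameter values, which AI Platform passes as command-line arguments named after each parameterName in the YAML config, and report the metric being optimized. The sketch below shows a possible entrypoint skeleton. The flag names are hypothetical and must match your own config. Metric reporting uses the cloudml-hypertune package, whose tag must match hyperparameterMetricTag. The ResNet fine-tuning loop itself is omitted.

```python
import argparse
from typing import List, Optional


def parse_args(argv: Optional[List[str]] = None) -> argparse.Namespace:
    """Parse the hyperparameters that the tuning service passes as command-line flags."""
    parser = argparse.ArgumentParser(description="Hypothetical ResNet fine-tuning entrypoint")
    # Each flag must match a parameterName in the tuning job's YAML config
    parser.add_argument("--learning-rate", type=float, default=0.01)
    parser.add_argument("--momentum", type=float, default=0.9)
    parser.add_argument("--batch-size", type=int, default=32)
    parser.add_argument("--job-dir", type=str, default="")
    # Ignore any extra flags instead of failing the trial
    args, _unknown = parser.parse_known_args(argv)
    return args


def report_metric(tag: str, value: float, step: int) -> bool:
    """Report the tuning metric; return False when running locally without cloudml-hypertune."""
    try:
        import hypertune  # installed via the cloudml-hypertune package in the container
    except ImportError:
        print(f"[local] {tag}={value:.4f} at step {step}")
        return False
    hpt = hypertune.HyperTune()
    hpt.report_hyperparameter_tuning_metric(
        hyperparameter_metric_tag=tag, metric_value=value, global_step=step
    )
    return True


def main(argv: Optional[List[str]] = None) -> None:
    args = parse_args(argv)
    # Fine-tune a pre-trained ResNet here (omitted), e.g. torchvision.models.resnet50,
    # using args.learning_rate, args.momentum, and args.batch_size.
    val_accuracy = 0.0  # placeholder for the real validation accuracy
    report_metric("accuracy", val_accuracy, step=1)


if __name__ == "__main__":
    main()
```

The Dockerfile's entrypoint would run this script, so each trial receives its own flag values and reports one metric back to the tuning service.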

Hyperparameter tuning overview

Using custom containers

PyTorch on AI Platform Training

You are an ML engineer at an ecommerce company and have been tasked with building a model that predicts how much inventory the logistics team should order each month. Which approach should you take?

Answer : C

The best approach to build a model that predicts how much inventory the logistics team should order each month is to use a time series forecasting model to predict each item's monthly sales. This approach can capture the temporal patterns and trends in the sales data, such as seasonality, cyclicality, and autocorrelation. It can also account for the variability and uncertainty in the demand, and provide confidence intervals and error metrics for the predictions. By using a time series forecasting model, you can provide the logistics team with accurate and reliable estimates of the future sales for each item, which can help them optimize the inventory levels and avoid overstocking or understocking. You can use various methods and tools to build a time series forecasting model, such as ARIMA, LSTM, Prophet, or BigQuery ML.
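As a concrete starting point for per-item monthly forecasting, here is a seasonal naive forecast in plain Python. It is a deliberately simple stand-in, not ARIMA, LSTM, Prophet, or BigQuery ML: each item's forecast for a future month repeats that item's sales from the same month one season earlier. It assumes monthly data with 12-month seasonality. The function names and sample data are hypothetical. A baseline like this is mainly useful as a benchmark that a real forecasting model should beat on held-out months.

```python
from typing import Dict, List


def seasonal_naive_forecast(
    history: Dict[str, List[float]], season: int = 12, horizon: int = 3
) -> Dict[str, List[float]]:
    """Forecast each item's next `horizon` months by reusing the value from one season earlier."""
    forecasts: Dict[str, List[float]] = {}
    for item, sales in history.items():
        if len(sales) < season:
            raise ValueError(f"{item}: need at least {season} months of history")
        last_season = sales[-season:]
        # Month t+h (h = 1..horizon) takes the value observed `season` months before it
        forecasts[item] = [last_season[(h - 1) % season] for h in range(1, horizon + 1)]
    return forecasts


def mean_absolute_error(actual: List[float], predicted: List[float]) -> float:
    """Average absolute forecast error, for comparing against a real model."""
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


if __name__ == "__main__":
    # Hypothetical item with 24 months of sales: 1, 2, ..., 24
    history = {"sku-123": [float(x) for x in range(1, 25)]}
    print(seasonal_naive_forecast(history))  # {'sku-123': [13.0, 14.0, 15.0]}
```

For many items at once, BigQuery ML's ARIMA_PLUS model type can fit a separate time series per item through its time_series_id_col option.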

The other options are not optimal for the following reasons:

A. Using a clustering algorithm to group popular items together is not a good approach, as it does not provide any quantitative or temporal information about the sales or the inventory. It only provides a qualitative and static categorization of the items based on their similarity or dissimilarity. Moreover, clustering is an unsupervised learning technique, which does not use any target variable or feedback to guide the learning process. This can result in arbitrary and inconsistent clusters, which may not reflect the true demand or preferences of the customers.

B. Using a regression model to predict how much additional inventory should be purchased each month is not a good approach, as it does not account for the individual differences and dynamics of each item. It only provides a single aggregated value for the whole inventory, which can be misleading and inaccurate. Moreover, a regression model is not well-suited for handling time series data, as it assumes that the data points are independent and identically distributed, which is not the case for sales data. A regression model can also suffer from overfitting or underfitting, depending on the choice and complexity of the features and the model.

D. Using a classification model to classify inventory levels as UNDER_STOCKED, OVER_STOCKED, and CORRECTLY_STOCKED is not a good approach, as it does not provide any numerical or predictive information about the sales or the inventory. It only provides a discrete and subjective label for the inventory levels, which can be vague and ambiguous. Moreover, a classification model is not well-suited for handling time series data, as it assumes that the data points are independent and identically distributed, which is not the case for sales data. A classification model can also suffer from class imbalance, misclassification, or overfitting, depending on the choice and complexity of the features, the model, and the threshold.

Professional ML Engineer Exam Guide

Preparing for Google Cloud Certification: Machine Learning Engineer Professional Certificate

Google Cloud launches machine learning engineer certification

Time Series Forecasting: Principles and Practice

BigQuery ML: Time series analysis

You are an ML engineer at a manufacturing company. You are creating a classification model for a predictive maintenance use case. You need to predict whether a crucial machine will fail in the next three days so that the repair crew has enough time to fix the machine before it breaks. Regular maintenance of the machine is relatively inexpensive, but a failure would be very costly. You have trained several binary classifiers to predict whether the machine will fail, where a prediction of 1 means that the ML model predicts a failure.

You are now evaluating each model on an evaluation dataset. You want to choose a model that prioritizes detection while ensuring that more than 50% of the maintenance jobs triggered by your model address an imminent machine failure. Which model should you choose?

Answer : C

The best option for choosing a model that prioritizes detection while ensuring that more than 50% of the maintenance jobs triggered by the model address an imminent machine failure is to choose the model with the highest recall where precision is greater than 0.5. This option has the following advantages:

It maximizes the recall, which is the proportion of actual failures that are correctly predicted by the model. Recall is also known as sensitivity or true positive rate (TPR), and it is calculated as:

$$\mathrm{Recall} = \frac{\mathrm{TP}}{\mathrm{TP} + \mathrm{FN}}$$

where TP is the number of true positives (actual failures that are predicted as failures) and FN is the number of false negatives (actual failures that are predicted as non-failures). By maximizing the recall, the model can reduce the number of false negatives, which are the most costly and undesirable outcomes for the predictive maintenance use case, as they represent missed failures that can lead to machine breakdown and downtime.

It constrains the precision, which is the proportion of predicted failures that are actual failures. Precision is also known as positive predictive value (PPV), and it is calculated as:

$$\mathrm{Precision} = \frac{\mathrm{TP}}{\mathrm{TP} + \mathrm{FP}}$$

where FP is the number of false positives (actual non-failures that are predicted as failures). By constraining the precision to be greater than 0.5, the model can ensure that more than 50% of the maintenance jobs triggered by the model address an imminent machine failure, which can avoid unnecessary or wasteful maintenance costs.
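The selection rule above (highest recall among models whose precision is greater than 0.5) can be sketched in a few lines of Python. The EvalResult class, the model names, and the confusion-matrix counts are hypothetical illustrations, not results from the question. Precision and recall are computed exactly as in the two formulas above.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class EvalResult:
    name: str
    tp: int  # failures correctly predicted as failures
    fp: int  # non-failures predicted as failures
    fn: int  # failures predicted as non-failures

    @property
    def precision(self) -> float:
        return self.tp / (self.tp + self.fp) if (self.tp + self.fp) else 0.0

    @property
    def recall(self) -> float:
        return self.tp / (self.tp + self.fn) if (self.tp + self.fn) else 0.0


def choose_model(results: List[EvalResult], min_precision: float = 0.5) -> Optional[EvalResult]:
    """Highest recall among models whose precision is strictly greater than min_precision."""
    eligible = [r for r in results if r.precision > min_precision]
    return max(eligible, key=lambda r: r.recall, default=None)


if __name__ == "__main__":
    candidates = [
        EvalResult("model_a", tp=40, fp=60, fn=10),  # precision 0.40, recall 0.80: excluded
        EvalResult("model_b", tp=35, fp=25, fn=15),  # precision ~0.58, recall 0.70
        EvalResult("model_c", tp=30, fp=5, fn=20),   # precision ~0.86, recall 0.60
    ]
    print(choose_model(candidates).name)  # model_b
```

model_a has the best recall but fails the precision constraint, so model_b wins even though model_c is more precise.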

The other options are less optimal for the following reasons:

Option A: Choosing the model with the highest area under the receiver operating characteristic curve (AUC ROC) and precision greater than 0.5 may not prioritize detection, as the AUC ROC does not directly measure recall. The AUC ROC is a summary metric that evaluates the overall performance of a binary classifier across all possible thresholds. The ROC curve plots the TPR (recall) against the false positive rate (FPR), which is the proportion of actual non-failures that are incorrectly predicted as failures. The AUC ROC is the area under the ROC curve, and it ranges from 0 to 1, where 1 represents a perfect classifier. However, the model with the highest AUC ROC may not have the highest recall at the threshold actually used to trigger maintenance, because the AUC ROC summarizes performance over all thresholds rather than at a specific operating point, and it does not account for precision.

Option B: Choosing the model with the lowest root mean squared error (RMSE) and recall greater than 0.5 may not prioritize detection, as the RMSE is not a suitable metric for binary classification. The RMSE is a regression metric that measures the average magnitude of the error between the predicted and the actual values. The RMSE is calculated as:

$$\mathrm{RMSE} = \sqrt{\frac{1}{n}\sum_{i=1}^{n}\left(y_i - \hat{y}_i\right)^2}$$

where $y_i$ is the actual value, $\hat{y}_i$ is the predicted value, and $n$ is the number of observations. However, choosing the model with the lowest RMSE may not optimize the detection of failures, as the RMSE is sensitive to outliers and does not account for the class imbalance or the cost of misclassification.

Option D: Choosing the model with the highest precision where recall is greater than 0.5 may not prioritize detection, as the precision may not be the most important metric for the predictive maintenance use case. The precision measures the accuracy of the positive predictions, but it does not reflect the sensitivity or the coverage of the model. By choosing the model with the highest precision, the model may sacrifice the recall, which is the proportion of actual failures that are correctly predicted by the model. This may increase the number of false negatives, which are the most costly and undesirable outcomes for the predictive maintenance use case, as they represent missed failures that can lead to machine breakdown and downtime.

Evaluation Metrics (Classifiers) - Stanford University

Evaluation of binary classifiers - Wikipedia

Predictive Maintenance: The greatest benefits and smart use cases