Microsoft Azure AI Fundamentals AI-900 Exam Questions

During the machine learning process, when should you review evaluation metrics?

Answer : D

According to the Microsoft Azure AI Fundamentals (AI-900) official study materials and the Microsoft Learn module "Identify features of common machine learning types," the evaluation phase occurs after training and testing a machine learning model. Evaluation metrics are used to measure how well the model performs when applied to data it has not seen before (the validation data).

The machine learning workflow includes the following key steps:

Data Preparation -- Importing, cleaning, and transforming data.

Splitting the Data -- Dividing it into training and validation (or test) sets.

Model Training -- Using the training data to teach the model patterns or relationships.

Model Evaluation -- Assessing the trained model using the validation data and evaluation metrics such as accuracy, precision, recall, F1 score, and root mean square error (RMSE).

As stated in the AI-900 content, evaluation metrics are crucial after testing, as they help determine if the model is accurate enough or if it requires retraining with different parameters or algorithms.
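To make the metrics named above concrete, here is a minimal Python sketch (standard library only, not an Azure Machine Learning API) that computes accuracy, precision, recall, F1 score, and RMSE from a model's predictions on validation data. The labels and predictions are made-up values for illustration.

```python
import math


def classification_metrics(y_true, y_pred, positive=1):
    """Accuracy, precision, recall, and F1 for a binary classifier."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    accuracy = sum(1 for t, p in pairs if t == p) / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


def rmse(y_true, y_pred):
    """Root mean square error for a regression model."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


# Hypothetical labels and predictions on a validation set the model did not train on.
val_labels = [1, 0, 1, 1, 0, 0, 1, 0]
val_preds = [1, 0, 0, 1, 0, 1, 1, 0]
print(classification_metrics(val_labels, val_preds))
print(rmse([3.0, 5.0, 2.5], [2.5, 5.0, 3.0]))
```

Computing these numbers only makes sense once the model has produced predictions for held-out data, which is why the review happens after testing rather than before training.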

A . After you clean the data: Incorrect, because metrics cannot be reviewed before a model has been trained.

B . Before you train a model: Incorrect, because the model has not yet learned any patterns to evaluate.

C . Before you choose the type of model: Incorrect, because metrics depend on the model's output.

Therefore, the verified answer is D. After you test a model on the validation data. This is the point at which you review evaluation metrics to determine model performance and readiness for deployment.

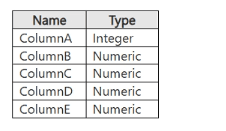

You have a dataset that contains the columns shown in the following table.

You have a machine learning model that predicts the value of ColumnE based on the other numeric columns.

Which type of model is this?

Answer : A

The dataset described contains numeric columns (ColumnA through ColumnE). The model's task is to predict the value of ColumnE based on the other numeric columns (ColumnA through ColumnD). This is a classic regression problem.

According to the Microsoft Azure AI Fundamentals (AI-900) study guide and Microsoft Learn module "Identify common types of machine learning," a regression model is used when the target variable (the value to predict) is continuous and numeric, such as price, temperature, or, in this case, the numeric value in ColumnE.

Regression models analyze relationships between independent variables (inputs: ColumnA through ColumnD) and a dependent variable (output: ColumnE) to predict a continuous outcome. Common regression algorithms include linear regression, decision tree regression, and neural network regression.
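As an illustration of what a regression model learns, the following sketch fits ordinary least squares linear regression using only the Python standard library. The ColumnA to ColumnD values are hypothetical, and ColumnE is generated synthetically from a known formula so that the recovered coefficients can be checked; this is not how Azure Machine Learning trains models internally.

```python
def fit_linear_regression(X, y):
    """Ordinary least squares via the normal equations (A^T A) w = A^T y."""
    # A is X with a leading column of 1s so that w[0] is the intercept.
    A = [[1.0] + [float(v) for v in row] for row in X]
    n = len(A[0])
    ata = [[sum(r[i] * r[j] for r in A) for j in range(n)] for i in range(n)]
    aty = [sum(r[i] * t for r, t in zip(A, y)) for i in range(n)]
    # Solve the n x n system by Gaussian elimination with partial pivoting.
    M = [ata[i] + [aty[i]] for i in range(n)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda row: abs(M[row][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for row in range(col + 1, n):
            factor = M[row][col] / M[col][col]
            for c in range(col, n + 1):
                M[row][c] -= factor * M[col][c]
    # Back substitution.
    w = [0.0] * n
    for i in range(n - 1, -1, -1):
        w[i] = (M[i][n] - sum(M[i][j] * w[j] for j in range(i + 1, n))) / M[i][i]
    return w  # [intercept, coefficient for ColumnA, ..., coefficient for ColumnD]


def predict(w, row):
    """Predict ColumnE for one row of ColumnA..ColumnD values."""
    return w[0] + sum(wi * xi for wi, xi in zip(w[1:], row))


# Hypothetical ColumnA..ColumnD values; ColumnE is synthetic: 1 + 2A - B + 0.5C + 3D.
X = [[1, 2, 3, 4], [2, 1, 0, 3], [0, 5, 2, 1], [3, 3, 1, 0], [4, 0, 5, 2], [1, 1, 1, 5]]
y = [1 + 2 * a - b + 0.5 * c + 3 * d for a, b, c, d in X]

w = fit_linear_regression(X, y)
print([round(v, 3) for v in w])
print(round(predict(w, [2, 2, 2, 2]), 3))
```

Because the target is a continuous number, the model's output is a real value rather than a class label, which is what distinguishes regression from classification.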

Option analysis:

A . Regression: Correct. Used for predicting numerical, continuous values.

B . Analysis: Incorrect. "Analysis" is a general term, not a machine learning model type.

C . Clustering: Incorrect. Clustering is unsupervised learning, grouping similar data points, not predicting values.

Therefore, the type of machine learning model used to predict ColumnE (a numeric value) from the other numeric columns is Regression, a supervised learning technique.

A medical research project uses a large anonymized dataset of brain scan images that are categorized into predefined brain haemorrhage types.

You need to use machine learning to support early detection of the different brain haemorrhage types in the images before the images are reviewed by a person.

This is an example of which type of machine learning?

Answer : C

According to the Microsoft Azure AI Fundamentals (AI-900) official study guide and Microsoft Learn module "Identify features of classification machine learning," classification is a supervised machine learning technique used when the output variable represents discrete categories or classes. In this case, the brain scan images are already labeled into predefined haemorrhage types, such as "subarachnoid," "epidural," or "intraventricular." The model's goal is to learn patterns from labeled examples and then predict the correct class for new, unseen images.

The use of categorized brain scan images clearly indicates a supervised learning setup because both the input (image data) and output (haemorrhage type) are known during training. This aligns with Microsoft's definition: classification problems "predict which category or class an item belongs to," often using algorithms such as logistic regression, decision trees, neural networks, or convolutional neural networks (CNNs) for image-based data.
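To show the supervised classification idea in miniature, the sketch below trains a nearest-centroid classifier: it averages the labeled training examples of each class and assigns a new example to the class whose average is closest. The two-number feature vectors are hypothetical stand-ins for features extracted from images; a real image classifier would more likely be a CNN, as noted above.

```python
import math


def train_nearest_centroid(examples):
    """examples: list of (feature_vector, label). Returns one mean vector (centroid) per label."""
    sums, counts = {}, {}
    for features, label in examples:
        if label not in sums:
            sums[label] = [0.0] * len(features)
            counts[label] = 0
        sums[label] = [s + f for s, f in zip(sums[label], features)]
        counts[label] += 1
    return {label: [s / counts[label] for s in vec] for label, vec in sums.items()}


def classify(centroids, features):
    """Predict the label whose centroid is closest by Euclidean distance."""
    return min(centroids, key=lambda label: math.dist(centroids[label], features))


# Hypothetical labeled training data: (toy feature vector, haemorrhage type).
train = [
    ([0.9, 0.1], "epidural"), ([0.8, 0.2], "epidural"),
    ([0.1, 0.9], "subarachnoid"), ([0.2, 0.8], "subarachnoid"),
    ([0.5, 0.5], "intraventricular"), ([0.55, 0.45], "intraventricular"),
]
centroids = train_nearest_centroid(train)
print(classify(centroids, [0.85, 0.2]))
```

The key point is that the labels are known during training; the model only outputs one of those predefined categories.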

In contrast:

A . Clustering is an unsupervised learning approach that groups data into clusters based on similarity when no predefined labels exist.

B . Regression predicts continuous numeric values (e.g., predicting age or temperature), not categories.

Because this project aims to automatically classify medical images into known diagnostic categories, it is a textbook example of classification.

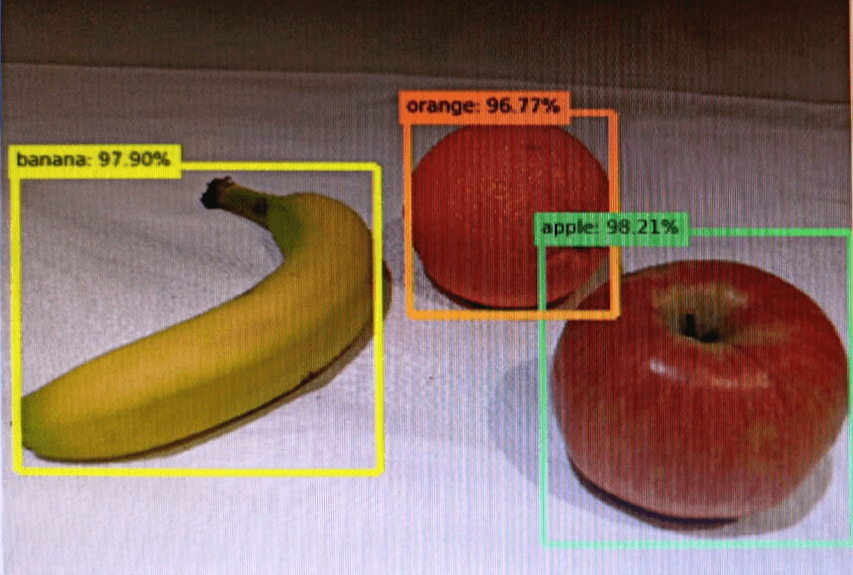

You send an image to a Computer Vision API and receive back the annotated image shown in the exhibit.

Which type of computer vision was used?

Answer : A

Object detection is similar to tagging, but the API returns the bounding box coordinates (in pixels) for each object found. For example, if an image contains a dog, cat and person, the Detect operation will list those objects together with their coordinates in the image. You can use this functionality to process the relationships between the objects in an image. It also lets you determine whether there are multiple instances of the same tag in an image.

The Detect API applies tags based on the objects or living things identified in the image. There is currently no formal relationship between the tagging taxonomy and the object detection taxonomy. At a conceptual level, the Detect API only finds objects and living things, while the Tag API can also include contextual terms like 'indoor', which can't be localized with bounding boxes.

https://docs.microsoft.com/en-us/azure/cognitive-services/computer-vision/concept-object-detection
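The following Python sketch shows how a client might use detection results to count instances of each tag and to find objects whose bounding boxes overlap. The response dictionary is hypothetical and is only assumed to follow the general shape of the Detect operation's JSON (an objects list whose entries carry an object name, a confidence, and a rectangle with x, y, w, h in pixels); check the exact schema against the API version you call.

```python
from collections import Counter
from itertools import combinations

# Hypothetical Detect-style response; all values are made up for illustration.
response = {
    "objects": [
        {"object": "dog", "confidence": 0.91, "rectangle": {"x": 40, "y": 120, "w": 200, "h": 180}},
        {"object": "cat", "confidence": 0.87, "rectangle": {"x": 260, "y": 150, "w": 120, "h": 140}},
        {"object": "person", "confidence": 0.95, "rectangle": {"x": 150, "y": 20, "w": 160, "h": 300}},
        {"object": "dog", "confidence": 0.82, "rectangle": {"x": 420, "y": 160, "w": 150, "h": 130}},
    ]
}


def count_instances(resp):
    """Number of detected instances per object tag."""
    return Counter(obj["object"] for obj in resp["objects"])


def boxes_overlap(a, b):
    """True if two axis-aligned bounding boxes (x, y, w, h in pixels) intersect."""
    ra, rb = a["rectangle"], b["rectangle"]
    return (ra["x"] < rb["x"] + rb["w"] and rb["x"] < ra["x"] + ra["w"]
            and ra["y"] < rb["y"] + rb["h"] and rb["y"] < ra["y"] + ra["h"])


def overlapping_pairs(resp):
    """Pairs of detected objects whose boxes overlap, in response order."""
    return [(a["object"], b["object"])
            for a, b in combinations(resp["objects"], 2) if boxes_overlap(a, b)]


print(count_instances(response))
print(overlapping_pairs(response))
```

Neither computation is possible with image tags alone, since tags carry no coordinates; this is the practical difference between object detection and tagging.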

Which natural language processing feature can be used to identify the main talking points in customer feedback surveys?

Answer : D

According to the Microsoft Azure AI Fundamentals (AI-900) official study guide and the Microsoft Learn module "Explore natural language processing (NLP) in Azure," key phrase extraction is a core feature of the Azure AI Language Service that enables you to automatically identify the most important ideas or topics discussed in a body of text.

When analyzing customer feedback surveys, key phrase extraction helps summarize the main talking points or recurring themes by detecting significant words and phrases. For instance, if multiple customers write comments like "The checkout process is slow" or "Website speed could be improved," the model may extract key phrases such as "checkout process" and "website speed." This allows businesses to quickly understand the most common subjects without manually reading each response.
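Key phrase extraction returns phrases per document; finding the main talking points across a whole survey then comes down to counting how often each phrase recurs. The sketch below assumes the per-response phrases have already been obtained (for example, with the `extract_key_phrases` method of `TextAnalyticsClient` in the azure-ai-textanalytics Python package) and uses hypothetical, hardcoded values in their place.

```python
from collections import Counter


def top_talking_points(phrases_per_response, n=3):
    """Count in how many responses each key phrase appears and return the n most common."""
    counts = Counter()
    for phrases in phrases_per_response:
        # Normalize case and count each phrase at most once per response.
        counts.update({p.strip().lower() for p in phrases})
    return counts.most_common(n)


# Hypothetical key phrases extracted from four survey responses.
responses = [
    ["checkout process", "delivery"],
    ["website speed", "checkout process"],
    ["Website speed", "prices"],
    ["checkout process"],
]
print(top_talking_points(responses, n=2))
```

Counting each phrase once per response measures how many customers raised a topic, rather than how often one customer repeated it.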

Let's review the other options:

A . Language detection: Determines the language of the text (e.g., English, French, or Spanish) but does not identify main ideas.

B . Translation: Converts text from one language to another using Azure Translator; it does not summarize or extract key information.

C . Entity recognition: Identifies named entities such as people, organizations, locations, or dates. While useful for identifying specific details, it does not capture general topics or overall discussion points.

Therefore, the appropriate NLP feature for identifying main topics or themes within textual data such as survey responses is Key Phrase Extraction.

This capability is part of the Azure AI Language Service and is commonly used in sentiment analysis pipelines, customer feedback analytics, and business intelligence workflows to summarize large text datasets efficiently.

You are authoring a Language Understanding (LUIS) application to support a music festival.

You want users to be able to ask questions about scheduled shows, such as: "Which act is playing on the main stage?"

The question "Which act is playing on the main stage?" is an example of which type of element?

Answer : B

In a Language Understanding (LUIS) application, an utterance represents an example of what a user might say to the bot. According to the Microsoft Learn module "Build a Language Understanding app," an utterance is a sample phrase that helps train the LUIS model to recognize user intent.

In the given example, "Which act is playing on the main stage?" is an utterance that a user might say to find out about show schedules. LUIS uses utterances like this to identify the intent (the user's goal, e.g., GetShowInfo) and to extract any entities (e.g., main stage) that provide additional details for fulfilling the request.

To clarify the other elements:

Intent: The overall purpose or action (e.g., GetShowInfo).

Entity: Specific information in the utterance (e.g., "main stage").

Domain: A general subject area (e.g., entertainment, events).
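To make the relationship between utterance, intent, and entity concrete, the sketch below represents one labeled training example as a Python data class. The `LabeledUtterance` class and the `Stage` entity name are hypothetical; this is not the LUIS import format, only an illustration of how an entity is labeled as a character span within an utterance.

```python
from dataclasses import dataclass, field


@dataclass
class LabeledUtterance:
    """Hypothetical training example: utterance text, its intent, and labeled entity spans."""
    text: str
    intent: str
    entities: list = field(default_factory=list)  # (entity_name, start, end), end exclusive

    def label_entity(self, name, phrase):
        """Record the first occurrence of phrase in the text as an entity span."""
        start = self.text.find(phrase)
        if start == -1:
            raise ValueError(f"{phrase!r} not found in utterance")
        self.entities.append((name, start, start + len(phrase)))


example = LabeledUtterance("Which act is playing on the main stage?", intent="GetShowInfo")
example.label_entity("Stage", "main stage")
for name, start, end in example.entities:
    print(name, "->", example.text[start:end])
```

The utterance is the whole sentence; the intent is a label for the sentence as a whole, and the entity is a span inside it.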

Thus, "Which act is playing on the main stage?" is an utterance used to train the LUIS model to understand natural language input.

You need to convert receipts into transactions in a spreadsheet. The spreadsheet must include the date of the transaction, the merchant, the total spent, and any taxes paid.

Which Azure AI service should you use?

Answer : C

To extract structured data such as transaction date, merchant name, total amount, and taxes from receipts, the best service is Azure AI Document Intelligence (formerly known as Form Recognizer). As described in the Microsoft Learn module "Extract data from documents with Azure AI Document Intelligence," this service applies optical character recognition (OCR) combined with machine learning models to identify and extract key-value pairs and tabular data from semi-structured documents like invoices, receipts, and forms.

The prebuilt receipt model of Document Intelligence can automatically recognize common receipt fields, including:

Merchant Name

Transaction Date

Total Amount

Taxes

Items Purchased

It outputs structured JSON that can easily be converted into spreadsheet or database entries. This capability eliminates the need for manual data entry, ensuring accuracy and efficiency in digitizing financial documents.
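As a sketch of the final step, turning extracted fields into spreadsheet rows, the following Python code writes CSV text from already-extracted receipt data. The receipt values are hypothetical, and the field names (`MerchantName`, `TransactionDate`, `Total`, `TotalTax`) are assumed to match recent versions of the prebuilt receipt model; the real response nests each value inside a richer field object, which is simplified away here.

```python
import csv
import io

# Hypothetical, simplified extraction results: one flat dict per receipt.
receipts = [
    {"MerchantName": "Contoso Cafe", "TransactionDate": "2024-03-01", "Total": 12.50, "TotalTax": 1.00},
    {"MerchantName": "Fabrikam Books", "TransactionDate": "2024-03-02", "Total": 30.00},  # no tax field
]

# Spreadsheet column header -> assumed receipt field name.
COLUMNS = [("Date", "TransactionDate"), ("Merchant", "MerchantName"), ("Total", "Total"), ("Tax", "TotalTax")]


def receipts_to_csv(rows):
    """Return CSV text with one transaction per receipt; missing fields become empty cells."""
    out = io.StringIO()
    writer = csv.writer(out)
    writer.writerow([header for header, _ in COLUMNS])
    for r in rows:
        writer.writerow([r.get(key, "") for _, key in COLUMNS])
    return out.getvalue()


print(receipts_to_csv(receipts))
```

The resulting text can be saved as a .csv file and opened directly in a spreadsheet application.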

The other options are incorrect:

A . Face detects and verifies human faces but does not extract text or numerical data.

B . Azure AI Language analyzes text sentiment, key phrases, and entities but does not interpret scanned documents.

D . Azure AI Custom Vision is for training image classification or object detection models, not document data extraction.

Therefore, the most accurate and Microsoft-verified service for converting receipts into structured transactions in a spreadsheet is C. Azure AI Document Intelligence.