Microsoft Designing and Implementing a Data Science Solution on Azure DP-100 Exam Questions

You manage an Azure Machine Learning workspace.

You build an Azure Machine Learning pipeline for image classification by using custom components. You need to define the interface, metadata, and code to execute components from a Python function. Which function should you use?

Answer : D

You have an Azure Machine Learning workspace.

You plan to use the workspace to set up automated machine learning training for an image classification model.

You need to choose the primary metric to optimize the model training.

Which primary metric should you choose?

Answer : C

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen.

You are creating a model to predict the price of a student's artwork depending on the following variables: the student's length of education, degree type, and art form.

You start by creating a linear regression model.

You need to evaluate the linear regression model.

Solution: Use the following metrics: Accuracy, Precision, Recall, F1 score and AUC.

Does the solution meet the goal?

Answer : B

These are metrics for evaluating classification models. To evaluate a regression model, use these instead: Mean Absolute Error, Root Mean Squared Error, Relative Absolute Error, Relative Squared Error, and the Coefficient of Determination.

https://docs.microsoft.com/en-us/azure/machine-learning/studio-module-reference/evaluate-model
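As a rough illustration of the regression metrics listed above, the following Python sketch computes them from scratch for a few hypothetical artwork prices. The `regression_metrics` function and the sample values are hypothetical. RAE and RSE are computed relative to a baseline that always predicts the mean of the actual values, and the coefficient of determination is taken as 1 - RSE.

```python
import math


def regression_metrics(y_true, y_pred):
    """Compute common regression metrics for two equal-length sequences."""
    n = len(y_true)
    mean_true = sum(y_true) / n
    errors = [t - p for t, p in zip(y_true, y_pred)]

    abs_err = sum(abs(e) for e in errors)  # total absolute error of the model
    sq_err = sum(e * e for e in errors)    # total squared error of the model

    # Baseline errors of a "model" that always predicts the mean actual value.
    abs_dev = sum(abs(t - mean_true) for t in y_true)
    sq_dev = sum((t - mean_true) ** 2 for t in y_true)

    rse = sq_err / sq_dev
    return {
        "mae": abs_err / n,                # Mean Absolute Error
        "rmse": math.sqrt(sq_err / n),     # Root Mean Squared Error
        "rae": abs_err / abs_dev,          # Relative Absolute Error
        "rse": rse,                        # Relative Squared Error
        "r2": 1.0 - rse,                   # Coefficient of Determination
    }


# Hypothetical actual vs. predicted artwork prices, for illustration only.
actual = [100.0, 150.0, 200.0, 250.0]
predicted = [110.0, 140.0, 210.0, 240.0]
metrics = regression_metrics(actual, predicted)
print(metrics)
```

Lower values of MAE, RMSE, RAE, and RSE are better, while a coefficient of determination closer to 1 indicates a better fit.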

You are evaluating a completed binary classification machine learning model.

You need to use precision as the evaluation metric.

Which visualization should you use?

Answer : A

https://machinelearningknowledge.ai/confusion-matrix-and-performance-metrics-machine-learning/
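Precision is the fraction of predicted positives that are actually positive, TP / (TP + FP), so it can be read directly from the cells of a confusion matrix. A minimal Python sketch, assuming binary labels where `1` is the positive class; the helper names and the labels are hypothetical:

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Return (TP, FP, FN, TN) for a binary classification result."""
    tp = fp = fn = tn = 0
    for t, p in zip(y_true, y_pred):
        if p == positive and t == positive:
            tp += 1  # predicted positive, actually positive
        elif p == positive:
            fp += 1  # predicted positive, actually negative
        elif t == positive:
            fn += 1  # predicted negative, actually positive
        else:
            tn += 1  # predicted negative, actually negative
    return tp, fp, fn, tn


def precision(tp, fp):
    """Precision = TP / (TP + FP); defined as 0.0 when nothing is predicted positive."""
    return tp / (tp + fp) if (tp + fp) else 0.0


# Hypothetical labels and predictions.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 1, 0, 1, 0]
tp, fp, fn, tn = confusion_counts(y_true, y_pred)
print(tp, fp, fn, tn, precision(tp, fp))
```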

You manage an Azure Machine Learning workspace. The development environment for managing the workspace is configured to use Python SDK v2 in Azure Machine Learning Notebooks.

A Synapse Spark Compute is currently attached and uses a system-assigned identity.

You need to use Python code to update the Synapse Spark Compute to use a user-assigned identity.

Solution: Pass the UserAssignedIdentity class object to the SynapseSparkCompute class.

Does the solution meet the goal?

Answer : B

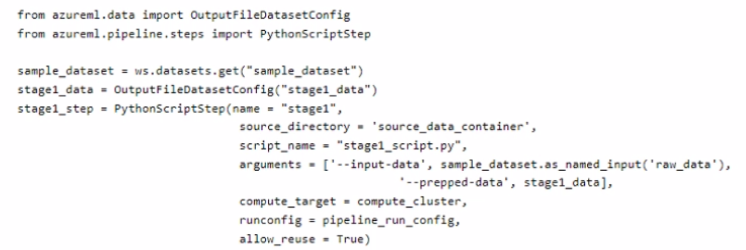

You create an Azure Machine Learning workspace. The workspace contains a dataset named sample.dataset, a compute instance, and a compute cluster. You must create a two-stage pipeline that will prepare data in the dataset and then train and register a model based on the prepared data. The first stage of the pipeline contains the following code:

You need to identify the location containing the output of the first stage of the script that you can use as input for the second stage. Which storage location should you use?

Answer : C

You train a model and register it in your Azure Machine Learning workspace. You are ready to deploy the model as a real-time web service.

You deploy the model to an Azure Kubernetes Service (AKS) inference cluster, but the deployment fails because an error occurs when the service runs the entry script that is associated with the model deployment.

You need to debug the error by iteratively modifying the code and reloading the service, without requiring a re-deployment of the service for each code update.

What should you do?

Answer : C

Reference: how to work around or solve common Docker deployment errors with Azure Container Instances (ACI) and Azure Kubernetes Service (AKS) using Azure Machine Learning.

The recommended and most up-to-date approach for model deployment is the Model.deploy() API with an Environment object as an input parameter. In this case, the service creates a base Docker image during the deployment stage and mounts the required models, all in one call. The basic deployment tasks are:

1. Register the model in the workspace model registry.

2. Define Inference Configuration:

a. Create an Environment object based on the dependencies you specify in the environment YAML file, or use one of the curated environments.

b. Create an inference configuration (InferenceConfig object) based on the environment and the scoring script.

3. Deploy the model to Azure Container Instances (ACI) or to Azure Kubernetes Service (AKS).
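The three steps above can be sketched with the Azure Machine Learning Python SDK v1 (`azureml-core`), which provides `Model.deploy()`. This is a minimal sketch, not a tested deployment script: the model path, model name, `score.py`, `environment.yml`, environment name, and service name are hypothetical placeholders, and the target shown is ACI.

```python
def deploy_model(ws, model_path="outputs/model.pkl"):
    """Register, configure, and deploy a model with the Azure ML SDK v1.

    All names and file paths here are hypothetical placeholders.
    """
    # Imported lazily so this module can be loaded without azureml-core installed.
    from azureml.core import Environment
    from azureml.core.model import InferenceConfig, Model
    from azureml.core.webservice import AciWebservice

    # 1. Register the model in the workspace model registry.
    model = Model.register(workspace=ws, model_path=model_path, model_name="my-model")

    # 2a. Create an Environment from a conda specification (YAML) file.
    env = Environment.from_conda_specification(name="my-env", file_path="environment.yml")

    # 2b. Combine the environment and the scoring (entry) script.
    inference_config = InferenceConfig(entry_script="score.py", environment=env)

    # 3. Deploy to Azure Container Instances.
    deployment_config = AciWebservice.deploy_configuration(cpu_cores=1, memory_gb=1)
    service = Model.deploy(ws, "my-service", [model], inference_config, deployment_config)
    service.wait_for_deployment(show_output=True)
    return service
```

To run it, load a workspace first, for example with `Workspace.from_config()` from `azureml.core`, and pass it to `deploy_model`. For AKS, replace the ACI deployment configuration with `AksWebservice.deploy_configuration(...)` and pass the attached AKS compute target to `Model.deploy()` as `deployment_target`.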