Microsoft Administering Microsoft Azure SQL Solutions DP-300 Exam Questions

Which windowing function should you use to perform the streaming aggregation of the sales data?

Answer : D

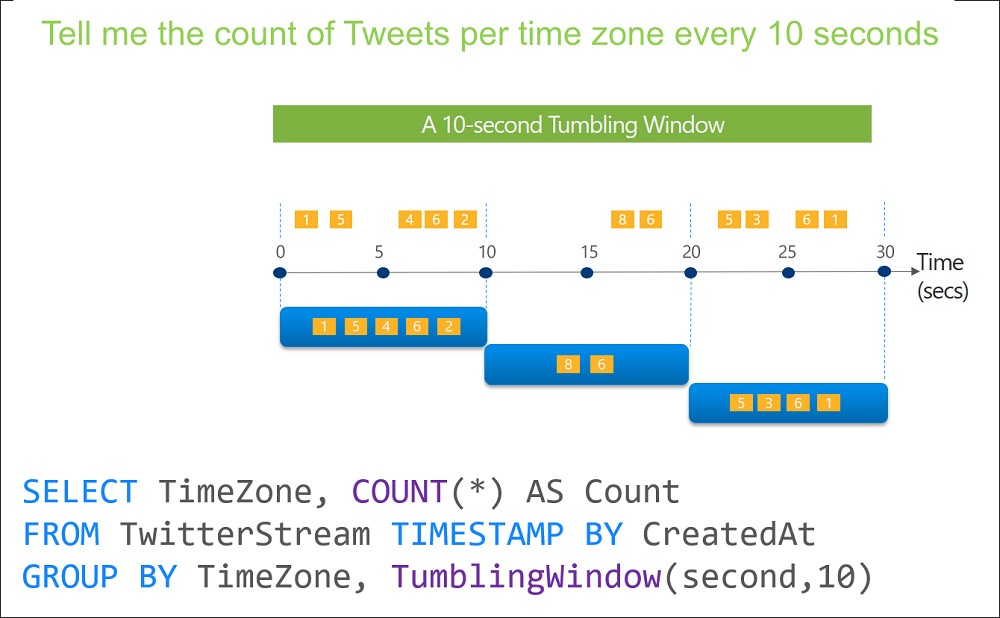

Scenario: The sales data, including the documents in JSON format, must be gathered as it arrives and analyzed online by using Azure Stream Analytics. The analytics process will perform aggregations that must be done continuously, without gaps, and without overlapping.

Tumbling window functions segment a data stream into distinct, fixed-size time segments and apply a function, such as an aggregation, to each segment. The key differentiators of a tumbling window are that windows repeat, are contiguous, and do not overlap, so an event cannot belong to more than one tumbling window. This matches the requirement for aggregations that run continuously, without gaps, and without overlapping.
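To make the "no gaps, no overlaps" property concrete, here is a minimal Python sketch (not Stream Analytics code) that assigns each event to exactly one fixed-size window and sums the amounts per window. It assumes half-open [start, start + size) windows aligned to an arbitrary origin; Stream Analytics applies its own boundary and alignment rules, and the function names are hypothetical. In a Stream Analytics query, the equivalent grouping is expressed with TumblingWindow in the GROUP BY clause.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def tumbling_window_start(ts: datetime, size: timedelta, origin: datetime) -> datetime:
    """Return the start of the one tumbling window that contains ts."""
    # timedelta // timedelta yields an int, so every timestamp maps to exactly one bucket.
    index = (ts - origin) // size
    return origin + index * size


def tumbling_sum(events, size: timedelta, origin: datetime) -> dict:
    """Sum (timestamp, amount) events per window; windows are contiguous and never overlap."""
    totals = defaultdict(float)
    for ts, amount in events:
        totals[tumbling_window_start(ts, size, origin)] += amount
    return dict(sorted(totals.items()))


# Usage: three hypothetical sales events aggregated into 5-second windows.
origin = datetime(2024, 1, 1)
events = [
    (datetime(2024, 1, 1, 0, 0, 1), 10.0),
    (datetime(2024, 1, 1, 0, 0, 4), 5.0),
    (datetime(2024, 1, 1, 0, 0, 7), 2.5),
]
totals = tumbling_sum(events, timedelta(seconds=5), origin)
print(totals)
```

The first two events land in the 00:00:00 window and the third in the 00:00:05 window, so each event is counted once and no interval is skipped.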

https://github.com/MicrosoftDocs/azure-docs/blob/master/articles/stream-analytics/stream-analytics-window-functions.md

You need to recommend a solution to meet the security requirements and the business requirements for DB3. What should you recommend as the first step of the solution?

Answer : C

Topic 4, Contoso Ltd Clothing Store

You have an Azure SQL managed instance that hosts multiple databases.

You need to configure alerts for each database based on the diagnostics telemetry of the database.

What should you use?

Answer : D

https://docs.microsoft.com/en-us/azure/azure-sql/database/metrics-diagnostic-telemetry-logging-streaming-export-configure?tabs=azure-portal#configure-the-streaming-export-of-diagnostic-telemetry

You have an Azure SQL database.

You discover that the plan cache is full of compiled plans that were used only once.

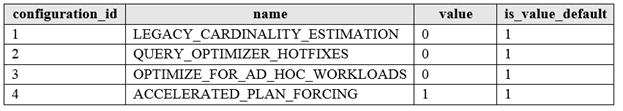

You run the select * from sys.database_scoped_configurations Transact-SQL command and receive the results shown in the following table.

You need to relieve the memory pressure.

What should you configure?

Answer : C

OPTIMIZE_FOR_AD_HOC_WORKLOADS = { ON | OFF }

Enables or disables storing a compiled plan stub in the plan cache when a batch is compiled for the first time. The default is OFF. When OPTIMIZE_FOR_AD_HOC_WORKLOADS is enabled for a database, the first compilation of a batch caches only a stub; the full compiled plan is cached only if the batch is compiled again. Plan stubs have a much smaller memory footprint than full compiled plans, so single-use plans no longer fill the cache.
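The effect on a cache full of single-use plans can be illustrated with a toy Python model. This is a hedged sketch, not how SQL Server implements the plan cache: the class, the function names, and the byte sizes are all hypothetical and chosen only to show the ratio between stubs and full plans. On the database itself, the setting is enabled with ALTER DATABASE SCOPED CONFIGURATION SET OPTIMIZE_FOR_AD_HOC_WORKLOADS = ON.

```python
from dataclasses import dataclass, field

# Hypothetical sizes for illustration only; real stub and plan sizes vary by query.
STUB_BYTES = 300
FULL_PLAN_BYTES = 50_000


@dataclass
class ToyPlanCache:
    """Toy model of what the plan cache holds with OPTIMIZE_FOR_AD_HOC_WORKLOADS ON or OFF."""
    optimize_for_ad_hoc: bool = False
    entries: dict = field(default_factory=dict)  # batch text -> "stub" or "plan"

    def submit(self, batch: str) -> None:
        """Record what gets cached when a batch is submitted."""
        current = self.entries.get(batch)
        if current == "plan":
            return  # full plan already cached, so it is reused
        if self.optimize_for_ad_hoc and current is None:
            self.entries[batch] = "stub"  # first compilation: cache only a small stub
        else:
            self.entries[batch] = "plan"  # setting OFF, or batch seen again: cache full plan

    def memory_bytes(self) -> int:
        return sum(STUB_BYTES if kind == "stub" else FULL_PLAN_BYTES
                   for kind in self.entries.values())


def single_use_workload(optimize: bool, batches: int = 1000) -> int:
    """Submit many distinct ad hoc batches once each and report modeled cache memory."""
    cache = ToyPlanCache(optimize_for_ad_hoc=optimize)
    for i in range(batches):
        cache.submit(f"SELECT * FROM Sales WHERE Id = {i}")
    return cache.memory_bytes()


off_bytes = single_use_workload(optimize=False)
on_bytes = single_use_workload(optimize=True)

# A batch that is submitted twice is promoted from stub to full plan.
repeat = ToyPlanCache(optimize_for_ad_hoc=True)
repeat.submit("SELECT 1")
first_kind = repeat.entries["SELECT 1"]
repeat.submit("SELECT 1")
second_kind = repeat.entries["SELECT 1"]
print(off_bytes, on_bytes, first_kind, second_kind)
```

Batches that really are reused still end up with a full plan, so the setting trades one extra compilation for much less memory spent on plans that never run again.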

Incorrect Answers:

A: LEGACY_CARDINALITY_ESTIMATION = { ON | OFF | PRIMARY }

Enables you to set the query optimizer cardinality estimation model to that of SQL Server 2012 and earlier, independent of the compatibility level of the database. The default is OFF, which sets the query optimizer cardinality estimation model based on the compatibility level of the database. It changes cardinality estimates, not the number or size of cached plans.

B: QUERY_OPTIMIZER_HOTFIXES = { ON | OFF | PRIMARY }

Enables or disables query optimization hotfixes regardless of the compatibility level of the database. The default is OFF, which disables query optimization hotfixes that were released after the highest available compatibility level was introduced for a specific version (post-RTM). It changes which optimizer fixes apply, so it does nothing to shrink single-use plans in the cache.

https://docs.microsoft.com/en-us/sql/t-sql/statements/alter-database-scoped-configuration-transact-sql

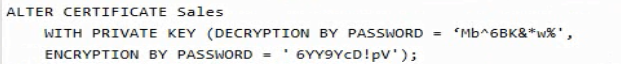

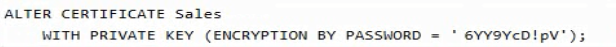

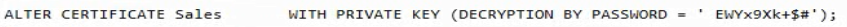

You have an Azure SQL database named DB1 that contains a private certificate named Sales. The private key for Sales is encrypted with a password. You need to change the password for the private key. Which Transact-SQL statement should you run?

A)

B)

C)

D)

Answer : C

You have an Azure Data Factory pipeline that performs an incremental load of source data to an Azure Data Lake Storage Gen2 account.

Data to be loaded is identified by a column named LastUpdatedDate in the source table.

You plan to execute the pipeline every four hours.

You need to ensure that the pipeline execution meets the following requirements:

Automatically retries the execution when the pipeline run fails due to concurrency or throttling limits.

Supports backfilling existing data in the table.

Which type of trigger should you use?

Answer : A

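A tumbling window trigger meets both requirements. It supports backfill scenarios, so pipeline runs can be scheduled for windows in the past, and it can automatically retry failed pipeline runs, including runs that fail because of concurrency, server, or throttling limits, according to the retry policy defined on the trigger. A schedule trigger supports neither backfill nor retries.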
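In Data Factory these behaviors are configured declaratively on the trigger (start time, frequency, interval, retry policy) rather than written as code. The Python sketch below only models them, under stated assumptions: the function names and the row shape are hypothetical, windows are treated as half-open [start, end), and TransientError stands in for a concurrency or throttling failure. In a real pipeline, the window bounds reach the pipeline through the trigger's windowStartTime and windowEndTime outputs, which the copy activity would use to filter on LastUpdatedDate.

```python
from datetime import datetime, timedelta


class TransientError(Exception):
    """Stand-in for a run that fails on concurrency or throttling limits."""


def tumbling_windows(start: datetime, end: datetime, interval: timedelta):
    """Yield contiguous, non-overlapping (window_start, window_end) pairs up to end."""
    window_start = start
    while window_start + interval <= end:
        yield window_start, window_start + interval
        window_start += interval


def rows_for_window(rows, window_start: datetime, window_end: datetime):
    """Incremental-load filter on LastUpdatedDate, using [window_start, window_end)."""
    return [r for r in rows if window_start <= r["LastUpdatedDate"] < window_end]


def run_with_retry(run, max_retries: int):
    """Call run() again after each TransientError, up to max_retries extra attempts."""
    attempt = 0
    while True:
        try:
            return run()
        except TransientError:
            if attempt >= max_retries:
                raise
            attempt += 1


# Backfill: a start time in the past yields one window per 4 hours already elapsed.
backfill = list(tumbling_windows(datetime(2024, 1, 1), datetime(2024, 1, 1, 12), timedelta(hours=4)))

rows = [
    {"LastUpdatedDate": datetime(2024, 1, 1, 3)},
    {"LastUpdatedDate": datetime(2024, 1, 1, 4)},
]
first_window_rows = rows_for_window(rows, *backfill[0])

# Retry: a hypothetical load that is throttled twice before it succeeds.
failures = [TransientError("throttled"), TransientError("throttled")]


def flaky_load():
    if failures:
        raise failures.pop()
    return "loaded"


result = run_with_retry(flaky_load, max_retries=3)
```

Because the windows are contiguous and the filter is half-open, a row updated exactly at a window boundary is loaded by exactly one run, which is what keeps backfilled and scheduled runs from duplicating or skipping data.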

https://docs.microsoft.com/en-us/azure/data-factory/concepts-pipeline-execution-triggers

What should you use to migrate the PostgreSQL database?

Answer : C

https://docs.microsoft.com/en-us/azure/dms/dms-overview