Microsoft Implementing Analytics Solutions Using Microsoft Fabric DP-600 Exam Questions

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen.

You have a Fabric tenant that contains a semantic model named Model1.

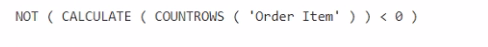

You discover that the following query performs slowly against Model1.

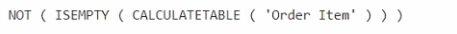

You need to reduce the execution time of the query.

Solution: You replace line 4 by using the following code:

Does this meet the goal?

Answer : B

You plan to deploy Microsoft Power BI items by using Fabric deployment pipelines. You have a deployment pipeline that contains three stages named Development, Test, and Production. A workspace is assigned to each stage.

You need to provide Power BI developers with access to the pipeline. The solution must meet the following requirements:

Ensure that the developers can deploy items to the workspaces for Development and Test.

Prevent the developers from deploying items to the workspace for Production.

Ensure that developers can view items in Production.

Follow the principle of least privilege.

Which three levels of access should you assign to the developers? Each correct answer presents part of the solution.

NOTE: Each correct answer is worth one point.

Answer : B, D, E

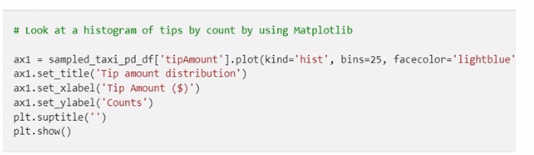

You have a Fabric notebook that has the Python code and output shown in the following exhibit.

Which type of analytics are you performing?

Answer : B

The Python code and output shown in the exhibit display a histogram, which represents the distribution of the data. This kind of analysis is descriptive analytics, which describes or summarizes the features of a dataset. Descriptive analytics answers the question "what has happened" by providing insight into past data through summary statistics such as mean, median, mode, and standard deviation, and through graphical representations such as histograms.
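As a rough illustration of what descriptive analytics looks like in a notebook, the following sketch computes the summary statistics named above and prints a simple text histogram. The sample values and the bin width are assumptions made for this example; they are not taken from the exhibit.

```python
from collections import Counter
import statistics

# Illustrative sample data (an assumption; the exhibit's data is not reproduced here).
values = [12, 15, 15, 18, 21, 22, 22, 22, 27, 31]

# Summary statistics that describe the data as it is.
mean = statistics.mean(values)
median = statistics.median(values)
mode = statistics.mode(values)
stdev = statistics.stdev(values)  # sample standard deviation
print(f"mean={mean} median={median} mode={mode} stdev={stdev:.2f}")

# Group the values into fixed-width bins, keyed by each bin's lower bound.
bin_width = 5  # hypothetical bin width chosen for this example
bins = Counter((v // bin_width) * bin_width for v in values)

# Print one row per bin; the bar length is the number of values in that bin.
for start in sorted(bins):
    end = start + bin_width - 1
    print(f"{start:>2}-{end:<2} {'#' * bins[start]}")
```

Nothing here predicts future values or recommends an action; the code only summarizes existing data, which is what makes it descriptive.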

You have a Fabric tenant.

You are creating a Fabric Data Factory pipeline.

You have a stored procedure that returns the number of active customers and their average sales for the current month.

You need to add an activity that will execute the stored procedure in a warehouse. The returned values must be available to the downstream activities of the pipeline.

Which type of activity should you add?

Answer : A

In a Fabric Data Factory pipeline, use the Lookup activity to execute a stored procedure whose returned values must be available to downstream activities. The Lookup activity retrieves a result set from a data store and exposes it as activity output for further processing. Here's how you would use the Lookup activity in this context:

Add a Lookup activity to your pipeline.

Configure the Lookup activity to use the stored procedure by providing the necessary SQL statement or stored procedure name.

In the settings, specify that the activity should run a stored procedure rather than read a table or run a query.

Once the stored procedure executes, the Lookup activity captures the returned rows and exposes them as the activity's output.

Downstream activities can then reference the output of the Lookup activity.
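The steps above can be sketched in Python as a simplified model of how a downstream activity reads the Lookup result. In a pipeline, the reference is written as an expression such as `@activity('Lookup_CustomerStats').output.firstRow.ActiveCustomers`, which applies when the Lookup returns only the first row. The activity name, column names, and values below are hypothetical, and the `resolve` helper only mimics the expression; it is not part of any Fabric API.

```python
# Hypothetical shape of a Lookup activity's output when only the first row is returned.
# Column names and values are illustrative assumptions, not real results.
lookup_output = {
    "firstRow": {"ActiveCustomers": 1250, "AverageSales": 431.75}
}

# Outputs keyed by activity name, as a stand-in for the pipeline run context.
activity_outputs = {"Lookup_CustomerStats": {"output": lookup_output}}


def resolve(activity_name, path):
    """Walk a dotted path, mimicking @activity('<name>').output.<path>."""
    node = activity_outputs[activity_name]["output"]
    for key in path.split("."):
        node = node[key]
    return node


# Equivalent in spirit to @activity('Lookup_CustomerStats').output.firstRow.<column>
active_customers = resolve("Lookup_CustomerStats", "firstRow.ActiveCustomers")
average_sales = resolve("Lookup_CustomerStats", "firstRow.AverageSales")
print(active_customers, average_sales)
```

A downstream activity, such as an If Condition or a Set variable activity, would use the same kind of expression to branch on or store the returned values.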

You have a Microsoft Power BI semantic model that contains a measure named TotalSalesAmount. TotalSalesAmount returns a sales revenue amount that is translated into a selected currency.

You need to ensure that the value returned by TotalSalesAmount is formatted to use the correct currency symbol.

What should you include in the solution?

Answer : A

Scenario:

Semantic model contains a measure: TotalSalesAmount.

This measure returns sales revenue translated into a selected currency.

Requirement: Format the result using the correct currency symbol dynamically.

Analysis:

A dynamic format string, defined as a DAX expression on the measure, is designed to apply formats (such as currency symbols) dynamically based on slicer selections or filter context.

The WINDOW function is used for windowing calculations, not formatting.

A linguistic schema is used for Q&A natural language queries, not for formatting.

A field parameter is used to swap fields or measures dynamically, not for formatting.

You have a Fabric tenant that uses a Microsoft Power BI Premium capacity. You need to enable scale-out for a semantic model. What should you do first?

Answer : C

To enable scale-out for a semantic model, you should first set Large dataset storage format to On (C) at the semantic model level. Scale-out requires the large storage format, so this setting must be enabled before scale-out can be turned on. Reference: the Power BI documentation on semantic model scale-out and the large storage format.

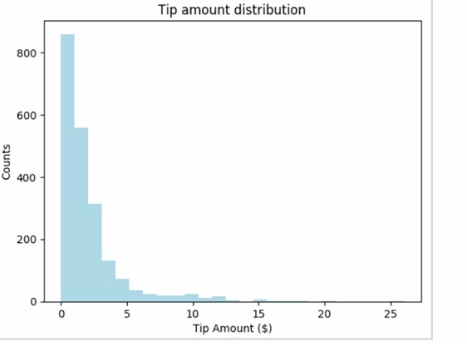

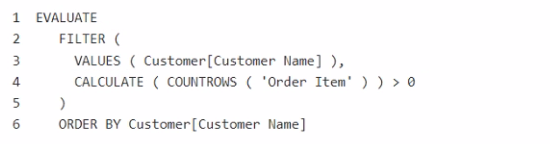

You have a Fabric tenant that contains a semantic model named Model1.

You discover that the following query performs slowly against Model1.

You need to reduce the execution time of the query.

Solution: You replace line 4 by using the following code:

Does this meet the goal?

Answer : A