Microsoft Developing AI-Enabled Database Solutions DP-800 Exam Questions

You have an Azure SQL database that contains tables named dbo.ProductDocs and dbo.ProductDocsEmbeddings. dbo.ProductDocs contains product documentation and the following columns:

* DocId (int)

* Title (nvarchar(200))

* Body (nvarchar(max))

* LastModified (datetime2)

The documentation is edited throughout the day. dbo.ProductDocsEmbeddings contains the following columns:

* DocId (int)

* ChunkOrder (int)

* ChunkText (nvarchar(max))

* Embedding (vector(1536))

The current embedding pipeline runs once per night.

You need to ensure that embeddings are updated every time the underlying documentation content changes. The solution must NOT require a nightly batch process.

What should you include in the solution?

Answer : D

The requirement is to ensure embeddings are updated every time the underlying content changes without relying on a nightly batch job. The right design is to enable change tracking on the source table so an external process can identify which rows changed and regenerate embeddings only for those rows. Microsoft documents that change detection mechanisms are used to pick up new and updated rows incrementally, which is the right pattern when you need near-continuous refresh instead of full nightly rebuilds.

This is better than:

A . fixed-size chunking, which affects chunk strategy but not change detection.

B . a smaller embedding model, which affects model cost/latency but not update triggering.

C . table triggers, which would push embedding-maintenance logic directly into write operations and is generally not the best design for AI-processing pipelines. The question specifically asks for a solution that replaces the nightly batch requirement, not one that performs heavyweight work inline during every transaction.
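The incremental pattern described above can be sketched in Python. This is a minimal in-memory illustration, not the actual pipeline: the `Doc` class, the `change_version` field (a stand-in for the version number a change-tracking feature assigns to modified rows), and the `fake_embed` function are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Doc:
    doc_id: int
    body: str
    change_version: int  # assumption: stand-in for a change-tracking version number


def fake_embed(text):
    """Hypothetical placeholder for a real embedding call; returns a tiny vector."""
    return [float(len(text)), float(sum(map(ord, text)) % 97)]


def sync_embeddings(docs, embeddings, last_synced_version):
    """Re-embed only rows changed since the last sync and return the new watermark."""
    changed = [d for d in docs if d.change_version > last_synced_version]
    for d in changed:
        embeddings[d.doc_id] = fake_embed(d.body)
    new_watermark = max((d.change_version for d in changed), default=last_synced_version)
    return new_watermark, [d.doc_id for d in changed]


docs = [Doc(1, "Install guide", 3), Doc(2, "Warranty terms", 7), Doc(3, "FAQ", 9)]
embeddings = {1: fake_embed("Install guide")}  # doc 1 was embedded at version 3
watermark, refreshed = sync_embeddings(docs, embeddings, last_synced_version=3)
```

Only documents 2 and 3 are re-embedded here. A second call with the returned watermark does no work, which is the property that makes frequent polling cheap compared with a full nightly rebuild.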

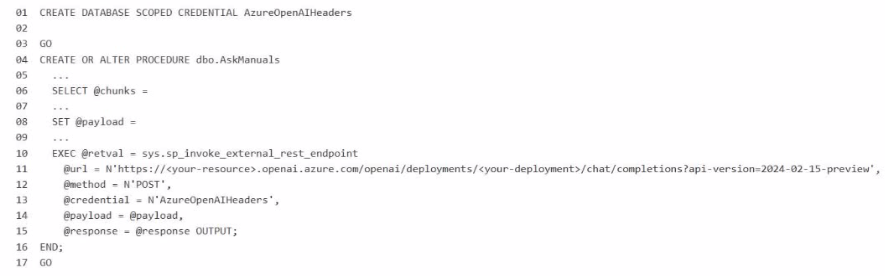

You have a Microsoft SQL Server 2025 instance that has a managed identity enabled.

You have a database that contains a table named dbo.ManualChunks. dbo.ManualChunks contains product manuals.

A retrieval query already returns the top five matching chunks as nvarchar(max) text.

You need to call an Azure OpenAI REST endpoint for chat completions. The solution must provide the highest level of security.

You write the following Transact-SQL code.

What should you insert at line 02?

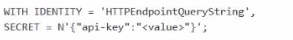

A)

B)

C)

D)

E)

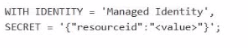

Answer : B

The correct answer is Option B because the requirement is to call an Azure OpenAI REST endpoint from SQL Server 2025 while providing the highest level of security, and the instance already has a managed identity enabled. For Microsoft's SQL AI features, the preferred secure pattern is to use a database scoped credential with IDENTITY = 'Managed Identity' instead of storing an API key. Microsoft documents that SQL Server 2025 supports managed identity for external AI endpoints, and for Azure OpenAI the credential secret is a JSON string containing the Cognitive Services resource identifier: {"resourceid":"https://cognitiveservices.azure.com"}.

So line 02 should be:

WITH IDENTITY = 'Managed Identity', SECRET = '{"resourceid":"https://cognitiveservices.azure.com"}';

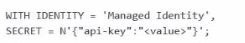

Why the other options are incorrect:

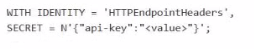

A and D use HTTP header or query-string credentials with an API key, which is less secure than managed identity because a secret key must be stored and rotated manually. Microsoft recommends managed identity where supported to avoid embedded secrets.

C mixes Managed Identity with an api-key secret, which is not the correct pattern for Azure OpenAI managed-identity authentication.

E uses an invalid identity value for this scenario. The accepted credential identities for external REST endpoint calls include HTTPEndpointHeaders, HTTPEndpointQueryString, Managed Identity, and Shared Access Signature.

Because the endpoint is Azure OpenAI and the question explicitly asks for the highest security, managed identity with the Cognitive Services resource ID is the Microsoft-aligned answer.

You have an Azure SQL database named SalesDB that contains a table named dbo.Articles. dbo.Articles contains two million articles with embeddings. The articles are updated frequently throughout the day.

You query the embeddings by using VECTOR_SEARCH.

Users report that semantic search results do NOT reflect the updates until the following day.

You need to ensure that the embeddings are updated whenever the articles change. The solution must minimize CPU usage on SalesDB.

Which embedding maintenance method should you implement?

Answer : B

The correct answer is B because the problem is not the vector search operator itself. The problem is that embeddings are becoming stale when article content changes. Microsoft documents that change data capture (CDC) tracks insert, update, and delete operations on source tables, which makes it the right mechanism to identify only the rows that changed.

This also best satisfies the requirement to minimize CPU usage on SalesDB. With CDC, the database only records the row changes, and the embedding regeneration work can be moved to an external process such as an Azure Functions app. That avoids running embedding generation inline inside the database for every update and avoids repeatedly recalculating embeddings for unchanged rows. In contrast, an hourly full-table regeneration would be extremely wasteful on a table with two million frequently updated articles, and a trigger that calls embedding generation per row would push expensive AI work into the transactional path of the database.

Option A is incorrect because changing from VECTOR_SEARCH to VECTOR_DISTANCE does not regenerate embeddings; it only changes the retrieval method. Microsoft states that VECTOR_SEARCH is the ANN search function, while VECTOR_DISTANCE performs exact distance calculation, so neither option addresses stale embedding data.

So the right design is:

use CDC to detect only changed articles,

process those changes outside the database,

regenerate embeddings only for changed rows,

write back the refreshed embeddings for current semantic search results.
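The steps above can be sketched as a small Python function that an external worker might run over a batch of CDC change rows. The `(operation, article_id)` tuple shape and the function name are assumptions for illustration. The operation codes follow the CDC `__$operation` convention (1 = delete, 2 = insert, 3 = update before image, 4 = update after image).

```python
# CDC __$operation codes
DELETE, INSERT, UPDATE_BEFORE, UPDATE_AFTER = 1, 2, 3, 4


def plan_embedding_work(change_rows):
    """Collapse ordered (operation, article_id) rows into the net work to perform.

    Returns (ids_to_embed, ids_to_delete), each sorted, so that an article
    changed several times in one batch is embedded only once.
    """
    to_embed, to_delete = set(), set()
    for operation, article_id in change_rows:
        if operation in (INSERT, UPDATE_AFTER):
            to_embed.add(article_id)
            to_delete.discard(article_id)
        elif operation == DELETE:
            to_delete.add(article_id)
            to_embed.discard(article_id)
        # UPDATE_BEFORE rows carry the old values, so they are ignored here.
    return sorted(to_embed), sorted(to_delete)


# Hypothetical batch: article 11 updated twice, article 13 inserted then deleted.
changes = [(2, 10), (3, 11), (4, 11), (3, 11), (4, 11), (1, 12), (2, 13), (1, 13)]
to_embed, to_delete = plan_embedding_work(changes)
```

Deduplicating inside the batch keeps the number of embedding calls proportional to the number of distinct changed articles, and none of this work runs on SalesDB itself.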

You have an Azure SQL database that supports the OLTP workload of an order-processing application.

During a 10-minute incident window, you run a dynamic management view query and discover the following:

Session 72 is sleeping with open_transaction_count = 1.

Multiple other sessions show blocking_session_id = 72 in sys.dm_exec_requests.

sys.dm_exec_input_buffer(72, NULL) returns only BEGIN TRANSACTION UPDATE Sales.Orders.

Users report that updates to Sales.Orders intermittently time out during the incident window. The timeouts stop only after you manually terminate session 72.

What is a possible cause of the blocking?

Answer : C

The best explanation is an open explicit transaction. During the incident, session 72 was sleeping but still had open_transaction_count = 1, and sys.dm_exec_input_buffer(72, NULL) showed only BEGIN TRANSACTION UPDATE Sales.Orders. That pattern indicates the session executed an update inside an explicit transaction and then remained idle without committing or rolling back, while still holding locks. Other sessions showing blocking_session_id = 72 is the expected symptom of that situation. Microsoft explains that blocking occurs when one session holds a lock on a resource and another session requests a conflicting lock, and sleeping sessions can continue to block if they retain locks through an open transaction.

This also fits the observed behavior that the timeouts stopped only after session 72 was terminated. Killing the session would roll back the active transaction and release the locks, allowing waiting updates to continue. That is much more consistent with an uncommitted transaction than with a deadlock, because deadlocks are normally detected and one session is chosen as the victim automatically rather than persisting until manual termination.
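The diagnostic reasoning above can be expressed as a short Python sketch. It assumes a hypothetical snapshot in which each session is a dict combining columns of the kind found in sys.dm_exec_sessions and sys.dm_exec_requests; the dict shape and the function name are illustrative only.

```python
def find_idle_head_blockers(sessions):
    """Return {blocker_id: [blocked session ids]} for sleeping sessions that
    hold an open transaction, block others, and are not blocked themselves."""
    by_id = {s["session_id"]: s for s in sessions}
    blocked_by = {}
    for s in sessions:
        blocker_id = s["blocking_session_id"]  # 0 means "not blocked"
        if blocker_id:
            blocked_by.setdefault(blocker_id, []).append(s["session_id"])

    result = {}
    for blocker_id, victims in blocked_by.items():
        blocker = by_id.get(blocker_id)
        if (
            blocker is not None
            and blocker["status"] == "sleeping"
            and blocker["open_transaction_count"] > 0
            and not blocker["blocking_session_id"]
        ):
            result[blocker_id] = sorted(victims)
    return result


# Hypothetical snapshot resembling the incident in the question.
snapshot = [
    {"session_id": 72, "status": "sleeping", "open_transaction_count": 1, "blocking_session_id": 0},
    {"session_id": 95, "status": "suspended", "open_transaction_count": 1, "blocking_session_id": 72},
    {"session_id": 81, "status": "suspended", "open_transaction_count": 1, "blocking_session_id": 72},
    {"session_id": 60, "status": "running", "open_transaction_count": 0, "blocking_session_id": 0},
]
head_blockers = find_idle_head_blockers(snapshot)
```

A sleeping head blocker with an open transaction is the signature of an uncommitted explicit transaction, as opposed to a deadlock, where the sessions block each other in a cycle.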

You have a SQL database in Microsoft Fabric that contains an nvarchar(max) column named MessageText. An ID is always contained within the first paragraph of MessageText.

You need to write a Transact-SQL query that uses REGEXP_SUBSTR to extract the ID from MessageText.

What should you include in the query?

Answer : B

Microsoft documents REGEXP_SUBSTR for Transact-SQL with the string_expression parameter as supporting the character string types char, nchar, varchar, and nvarchar. For the regex functions, support for LOB types such as varchar(max) and nvarchar(max) is specifically called out for REGEXP_LIKE, REGEXP_COUNT, and REGEXP_INSTR up to 2 MB, but that support note does not mention REGEXP_SUBSTR. In exam terms, the safe and expected approach is to cast the nvarchar(max) column to nvarchar(4000) before calling REGEXP_SUBSTR.

This also fits the scenario detail that the ID is always contained within the first paragraph of MessageText. Since the needed value is near the start of the text, narrowing the input to a non-LOB string type such as nvarchar(4000) is sufficient and avoids incompatibility concerns with nvarchar(max).
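The same idea (narrow the input to a bounded prefix, then extract with a regular expression) can be sketched in Python. This is an analogy rather than T-SQL: the slice only approximates CAST(MessageText AS nvarchar(4000)), and the ID format `ID-` followed by six digits is a hypothetical pattern, since the question does not specify one.

```python
import re

ID_PATTERN = r"ID-\d{6}"  # assumption: hypothetical ID format for illustration


def extract_id(message_text, max_chars=4000):
    """Search only the first max_chars characters, mirroring a cast to a bounded type."""
    head = message_text[:max_chars]
    match = re.search(ID_PATTERN, head)
    return match.group(0) if match else None


message = "Ticket ID-004217 opened by support.\n\n" + "Long body text. " * 1000
found = extract_id(message)
```

Because the ID is guaranteed to sit in the first paragraph, truncating the input loses nothing that the extraction needs.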

The other options are not appropriate:

A . STRING_ESCAPE(..., 'json') is for JSON escaping, not regex extraction.

C . adding a case-sensitive collation changes comparison behavior, but it is not the required fix for REGEXP_SUBSTR on nvarchar(max).

D . TRY_CONVERT(varchar(max), ...) still leaves a MAX type and also risks unnecessary Unicode loss.

You have an Azure SQL database.

You deploy Data API builder (DAB) to Azure Container Apps by using the mcr.microsoft.com/azure-databases/data-api-builder:latest image.

You have the following Container Apps secrets:

* MSSQL_CONNECTION_STRING that maps to the SQL connection string

* DAB_CONFIG_BASE64 that maps to the DAB configuration

You need to initialize the DAB configuration to read the SQL connection string.

Which command should you run?

Answer : B

Data API builder supports reading the database connection string from an environment variable by using the syntax:

@env('MSSQL_CONNECTION_STRING')

Microsoft's DAB documentation explicitly shows that @env('MSSQL_CONNECTION_STRING') tells Data API builder to read the connection string from an environment variable at runtime.

That fits this scenario because Azure Container Apps secrets are typically exposed to the container as environment variables. Microsoft's Azure Container Apps documentation states that environment variables can reference secrets, and DAB's Azure Container Apps deployment guidance shows a secret being mapped into an environment variable that DAB then reads.

Why the other options are wrong:

A and D incorrectly point the connection string to DAB_CONFIG_BASE64, which is the config payload secret, not the SQL connection string.

C uses secretref: syntax inside dab init, but DAB expects the connection string parameter in the config to use the environment-variable reference syntax @env(...). The secretref: pattern is for Azure Container Apps environment variable configuration, not for the DAB CLI connection-string argument itself.

So the correct command is:

dab init --database-type mssql --connection-string "@env('MSSQL_CONNECTION_STRING')" --host-mode Production --config dab-config.json
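To illustrate what an `@env('...')` reference means conceptually, here is a minimal Python sketch of resolving such a placeholder against environment variables at runtime. It is not Data API builder's actual implementation; the function name and the error behavior for a missing variable are assumptions.

```python
import os
import re

# Matches references of the form @env('NAME')
_ENV_REF = re.compile(r"@env\('([^']+)'\)")


def resolve_env_refs(value, environ=os.environ):
    """Replace each @env('NAME') in value with the content of that variable."""

    def _lookup(match):
        name = match.group(1)
        if name not in environ:
            raise KeyError(f"environment variable {name} is not set")
        return environ[name]

    return _ENV_REF.sub(_lookup, value)


# Hypothetical environment, as a container might see it after a secret is mapped in.
fake_environ = {"MSSQL_CONNECTION_STRING": "Server=tcp:example.database.windows.net;Database=db"}
resolved = resolve_env_refs("@env('MSSQL_CONNECTION_STRING')", fake_environ)
```

The point of the indirection is that the configuration file stores only the variable name, so the secret value never has to be written into the file or the container image.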

You have an Azure SQL database that contains a column named Notes.

A security review discovers that Notes contains sensitive data.

You need to ensure that the data is protected so that neither the stored values nor the query inputs reveal information about the actual data. The solution must prevent a user from inferring relationships or repetitions in the data based on the encrypted output.

Which should you use?

Answer : B

The requirement says the stored values and query inputs must both be protected, and users must not be able to infer relationships or repetitions in the data from the encrypted output. Microsoft documents that deterministic encryption always produces the same ciphertext for the same plaintext, which allows equality comparisons but also leaks patterns. By contrast, randomized encryption produces a different encrypted value each time for the same plaintext, which improves security and prevents pattern analysis based on repeated ciphertext values.

That makes randomized encryption the right choice here:

It protects data at rest and in transit/query parameters under Always Encrypted's client-side encryption model.

It prevents attackers from learning that the same plaintext value appears repeatedly, because repeated inputs do not produce repeated ciphertext.

Why the other options are wrong:

A . Always Encrypted with secure enclaves adds richer confidential query support, but the key protection property the question is testing is the encryption type. The requirement to prevent inference from repeated ciphertext points specifically to randomized encryption.

C . RLS controls row access, not value confidentiality.

D . Deterministic encryption allows equality-based operations but leaks repetition patterns, which the question explicitly forbids.
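The difference between the two encryption types can be demonstrated with a toy Python sketch. This is an illustration only and must not be used for real encryption: it uses a hash-based keystream instead of AES, and it is not the Always Encrypted algorithm. It shows the general idea that a deterministic scheme derives its initialization vector (IV) from the plaintext, while a randomized scheme draws a fresh random IV each time.

```python
import hashlib
import hmac
import os


def _keystream(key, iv, length):
    """Toy keystream from SHA-256 in counter mode (illustrative, not AES)."""
    out = b""
    counter = 0
    while len(out) < length:
        out += hashlib.sha256(key + iv + counter.to_bytes(4, "big")).digest()
        counter += 1
    return out[:length]


def _xor(a, b):
    return bytes(x ^ y for x, y in zip(a, b))


def encrypt(key, plaintext, deterministic):
    if deterministic:
        # IV derived from the plaintext: same input always gives the same output.
        iv = hmac.new(key, plaintext, hashlib.sha256).digest()[:16]
    else:
        # Fresh random IV: same input gives a different output on every call.
        iv = os.urandom(16)
    return iv + _xor(plaintext, _keystream(key, iv, len(plaintext)))


def decrypt(key, ciphertext):
    iv, body = ciphertext[:16], ciphertext[16:]
    return _xor(body, _keystream(key, iv, len(body)))


key = b"k" * 32  # hypothetical demo key
note = b"patient has condition X"
det_1, det_2 = encrypt(key, note, True), encrypt(key, note, True)
rnd_1, rnd_2 = encrypt(key, note, False), encrypt(key, note, False)
```

In this sketch `det_1` equals `det_2`, so an observer can tell that two rows hold the same value, whereas `rnd_1` and `rnd_2` differ even though both decrypt to the same note. That repetition leak is what the requirement in the question rules out.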