NetApp Certified Support Engineer - ONTAP Specialist NS0-593 Exam Questions

Your customer mentions that they have accidentally destroyed both root aggregates in their two-node cluster.

In this scenario, what are two actions that must be performed? (Choose two.)

Answer : A, C

If both root aggregates are destroyed in a two-node cluster, the cluster will be inoperable and the data will be inaccessible. To recover from this situation, you need to perform the following actions:

Install ONTAP from a USB device on one of the nodes. This will create a new root aggregate and a new cluster on that node.

Rejoin the second node to the re-created cluster. This will also create a new root aggregate on the second node and synchronize it with the first node.

Restore the cluster configuration and data from a backup, if available.

Reference:

ONTAP 9 Documentation Center

Storage System Recovery Troubleshooting

Recovering from a root aggregate failure

A system panic due to an "L2 watchdog timeout hard reset" error occurred. You have found a FIFO message in the SP log.

Which FIFO message is useful for investigating this issue?

Answer : A

The FIFO message before NMI is useful for investigating the issue because it shows the state of the system before the non-maskable interrupt (NMI) was triggered by the L2 watchdog timeout. The FIFO message contains information about the CPU registers, the stack pointer, the instruction pointer, and the last executed instructions. This can help identify the cause of the system hang or deadlock that led to the watchdog reset. The other FIFO messages are not useful because they show the state of the system after the reset or shutdown, which may not reflect the original problem.

Reference:

https://kb.netapp.com/onprem/ontap/hardware/Handling_L2_Watchdog_Resets_on_the_FAS8200_and_AFF_A300_platforms

https://docs.netapp.com/us-en/ontap-metrocluster/install-ip/task_sw_config_restore_defaults.html

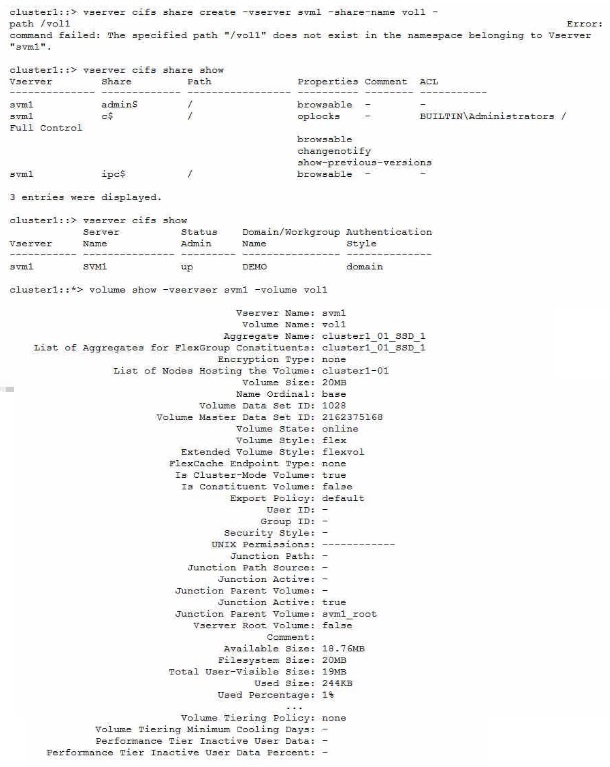

When an administrator tries to create a share for an existing volume named vol1, the process fails with an error.

Referring to the exhibit, what is the reason for the error?

Answer : B

The error message indicates that the specified path "/vol1" does not exist in the namespace belonging to Vserver "svm1". This means that the volume "vol1" has not been mounted to the Vserver's namespace, which is required for creating a share. The volume type, the CIFS service status, and the CIFS service mode are not relevant to the error.

Reference:

https://www.netapp.com/support-and-training/netapp-learning-services/certifications/support-engineer/

https://mysupport.netapp.com/site/docs-and-kb
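The share-creation explanation above can be illustrated with a small toy model. This is only a sketch of the idea that a share points at a namespace path rather than at a volume name; the `ToySvm` class and its methods are hypothetical and are not ONTAP's implementation or API.

```python
class ShareError(Exception):
    """Raised when a share cannot be created."""


class ToySvm:
    """Toy model of an SVM namespace (hypothetical, not ONTAP code)."""

    def __init__(self, name):
        self.name = name
        self.volumes = set()   # volumes that exist on the SVM
        self.junctions = {}    # junction path -> volume name
        self.shares = {}       # share name -> namespace path

    def create_volume(self, volume):
        self.volumes.add(volume)

    def mount(self, volume, junction_path):
        if volume not in self.volumes:
            raise ValueError(f"volume {volume!r} does not exist")
        self.junctions[junction_path] = volume

    def create_share(self, share_name, path):
        # A share refers to a path in the namespace, so a volume that
        # exists but is not mounted has no path that can be shared.
        if path not in self.junctions:
            raise ShareError(
                f"path {path!r} does not exist in the namespace of {self.name!r}"
            )
        self.shares[share_name] = path


svm = ToySvm("svm1")
svm.create_volume("vol1")          # the volume exists but is not mounted

try:
    svm.create_share("vol1", "/vol1")
    first_attempt = "ok"
except ShareError as err:
    first_attempt = str(err)

svm.mount("vol1", "/vol1")         # mount the volume into the namespace
svm.create_share("vol1", "/vol1")  # the same request now succeeds

print(first_attempt)
print(svm.shares)
```

In this model the first attempt fails even though the volume exists, and the identical request succeeds once the volume has a junction path, which mirrors the reasoning behind answer B.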

A customer's storage administrator informs you about the deactivated Automatic Switchover (AUSO) feature on their MetroCluster IP environment.

What information would you tell your customer in this scenario?

Answer : B

The AUSO feature is a MetroCluster functionality that enables an automatic switchover to the surviving site in the event of a disaster that affects one site [1].

The AUSO feature is only available in MetroCluster FC configurations, which use Fibre Channel (FC) switches and FC-to-SAS bridges to connect the nodes and disk shelves across sites [1].

MetroCluster IP configurations, which use Ethernet switches and network adapters to connect the nodes and disk shelves across sites, do not support the AUSO feature [2].

Instead, MetroCluster IP configurations use the ONTAP Mediator service, which is a software component that monitors the health and connectivity of the MetroCluster nodes and initiates a Mediator-assisted unplanned switchover when a disaster occurs [3].

The Mediator-assisted unplanned switchover is similar to the AUSO feature, but it requires the ONTAP Mediator service to be configured and running on a separate host [3].

Therefore, you would tell your customer that the AUSO feature is not available in MetroCluster IP installations by design, and that they need to use the ONTAP Mediator service instead for disaster recovery.

Reference:

1: Understanding MetroCluster data protection and disaster recovery, ONTAP MetroCluster Documentation Center

2: Differences among the ONTAP MetroCluster configurations, ONTAP MetroCluster Documentation Center

3: Configure the ONTAP Mediator service from a MetroCluster IP configuration, ONTAP MetroCluster Documentation Center

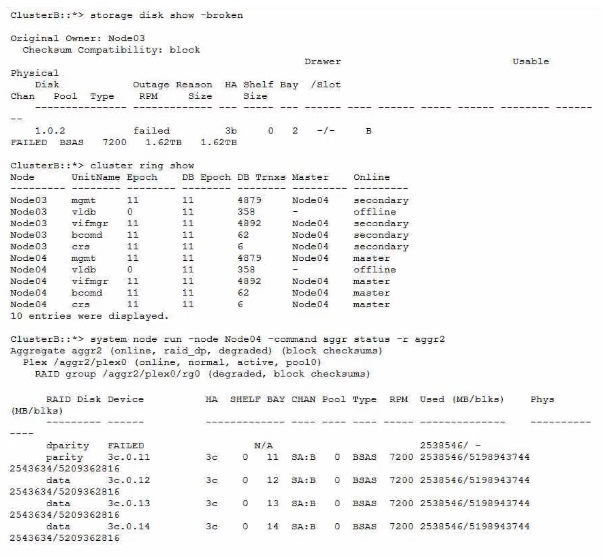

A customer is calling you to troubleshoot why users are unable to connect to their CIFS SVM.

Referring to the information shown in the exhibit, what is the source of the problem?

Answer : D

The broken disk in Node03 is causing the cluster ring to be offline, which prevents the CIFS SVM from being accessible. The cluster ring is a distributed database that stores cluster configuration information and enables communication between cluster nodes. If the cluster ring is offline, the cluster cannot function properly and the CIFS SVM cannot serve data to clients. The other options are not relevant to the CIFS SVM connectivity issue.

Reference:

https://www.netapp.com/support-and-training/netapp-learning-services/certifications/support-engineer/

https://mysupport.netapp.com/site/docs-and-kb

You created a new NetApp ONTAP FlexGroup volume spanning six nodes and 12 aggregates with a total size of 4 TB. You added millions of files to the FlexGroup volume with a flat directory structure totaling 2 TB, and you receive an out-of-space error message on your host.

What would cause this error?

Answer : D

The maxdirsize setting is the maximum size to which a single directory can grow in a FlexVol or FlexGroup volume, and it therefore limits how many entries one directory can hold. If a directory contains more files than maxdirsize can accommodate, ONTAP returns an out-of-space error to the host even though the volume still has free space. This can happen when a FlexGroup volume has a flat directory structure with millions of files, because maxdirsize is not automatically adjusted for FlexGroup volumes [1][2].

Reference:

1: FlexGroup volumes: Frequently asked questions | NetApp Documentation

2: How to increase the maxdirsize of a FlexVol volume - NetApp Knowledge Base
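The maxdirsize reasoning above comes down to simple arithmetic: a flat directory can hit its own size limit long before the volume runs out of data space. The sketch below is illustrative only. The limit of 320 MB and the 128 bytes per directory entry are assumed figures, not values taken from the question or from ONTAP documentation, and `directory_fits` is a hypothetical helper.

```python
def directory_fits(num_entries, bytes_per_entry, maxdirsize_kb):
    """Return (fits, directory_kb) for one flat directory."""
    directory_kb = num_entries * bytes_per_entry / 1024
    return directory_kb <= maxdirsize_kb, directory_kb


# Assumed figures, for illustration only.
MAXDIRSIZE_KB = 320 * 1024   # hypothetical directory size limit (320 MB)
BYTES_PER_ENTRY = 128        # hypothetical average size of one entry

# Scenario from the question: 4 TB volume, 2 TB of data written.
volume_size_tb = 4
data_written_tb = 2
volume_has_free_space = data_written_tb < volume_size_tb

fits_2m, kb_2m = directory_fits(2_000_000, BYTES_PER_ENTRY, MAXDIRSIZE_KB)
fits_4m, kb_4m = directory_fits(4_000_000, BYTES_PER_ENTRY, MAXDIRSIZE_KB)

print(volume_has_free_space)   # data space is still available
print(fits_2m, kb_2m)          # 2 million entries stay under the limit
print(fits_4m, kb_4m)          # 4 million entries exceed the limit
```

Under these assumed numbers the volume is only half full, yet the directory with four million entries no longer fits, which is the situation answer D describes.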

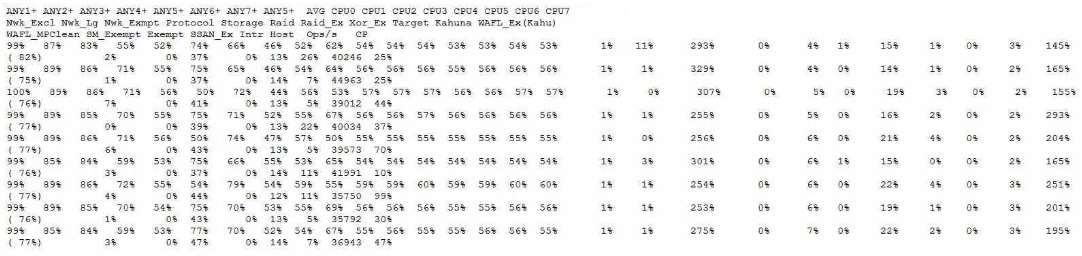

A storage administrator reports that a monitoring tool is reporting that the storage controller reads between 90% and 93% CPU use. You run the sysstat -m command against the node in question.

Referring to the exhibit, which statement is correct?

Answer : D

CPU utilization in ONTAP is not a linear measure of the system load, nor can it be used alone as a measure of the overall system utilization. ONTAP uses a Coarse Symmetric Multiprocessing (CSMP) design which partitions system functions into logical processing domains, each with its own scheduling rules and resource availability. Therefore, a high CPU utilization does not necessarily indicate a performance problem, unless it is accompanied by other contributing factors such as high latency, low throughput, or high queue depth. ONTAP has several mechanisms to optimize CPU usage and balance the workload across the cores, such as WAFL parallelization, exempt processing, and CPU pinning. The CPU utilization reported by the sysstat command is an average across all cores and domains, and does not reflect the actual CPU activity or availability for each domain. Therefore, the CPU is not a first-order monitoring metric for ONTAP, and other metrics such as latency, throughput, and queue depth should be considered first.

Reference:

What is CPU utilization in Data ONTAP: Scheduling and Monitoring?

How to measure CPU utilization

What are CPU as a compute resource and the CPU domains in ONTAP 9?

Monitoring CPU utilization before ONTAP upgrade
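The point above about averaged CPU figures can be shown with a short calculation. This is a minimal sketch with made-up per-core percentages; the numbers are not taken from the exhibit, and `summarize` is a hypothetical helper rather than anything ONTAP provides.

```python
from statistics import mean


def summarize(per_core_busy):
    """Summarize a list of per-core busy percentages."""
    return {
        "average": round(mean(per_core_busy), 1),
        "busiest": max(per_core_busy),
        "idlest": min(per_core_busy),
    }


# Hypothetical samples: both sets average to the same value.
balanced = [91, 92, 90, 93]     # every core is similarly busy
skewed = [100, 100, 100, 66]    # three cores saturated, one with headroom

balanced_summary = summarize(balanced)
skewed_summary = summarize(skewed)

print(balanced_summary)
print(skewed_summary)
```

Both samples report the same average even though their per-core distribution differs, so a single averaged percentage says little on its own. That is why latency, throughput, and queue depth are checked before drawing conclusions from CPU use.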