Qlik Sense Data Architect Certification Exam - 2024 QSDA2024 Exam Questions

A data architect receives an error while running a script.

What will happen to the existing data model?

Answer : B

In Qlik Sense, when an error occurs while a data load script is running, script execution halts immediately and any tables that were being loaded at the time of the error are discarded. However, the existing data model (that is, the last successfully loaded data model) remains intact and is not affected by the failed script. The application therefore retains the last known good state of the data and avoids the partial or inconsistent loads that an error could otherwise cause.

When the script encounters an error:

The tables that were successfully loaded prior to the error are retained in the session, but these tables are not merged with the existing data model.

The existing data model before the script was executed remains unchanged and is maintained.

No partial or incomplete data is loaded into the application; hence, the data model remains consistent and reliable.

Qlik Sense Data Architect Reference: This behavior is designed to protect the integrity of the data model. In scenarios where script execution fails, the user can debug and fix the script without risking the data integrity of the existing application. The key references include:

Qlik Help Documentation: Provides detailed information on how Qlik Sense handles script errors, highlighting that the existing data model remains unchanged after an error.

Data Load Editor Practices: Best practices dictate ensuring that the script is fully functional before executing it to avoid data inconsistency. In cases where an error occurs, understanding that the current data model is maintained helps in strategic debugging and script correction.

The data architect has been tasked with building a sales reporting application.

* Part way through the year, the company realigned the sales territories.

* Sales reps need to track both their overall performance and their performance in their current territory.

* Regional managers need to track performance for their region based on the date of the sale transaction.

* There is a data table from HR that contains the Sales Rep ID, the manager, the region, and the start and end dates for that assignment.

* Sales transactions have the salesperson in them, but not the manager or region.

What is the first step the data architect should take to build this data model to accurately reflect performance?

Answer : C

In the provided scenario, the sales territories were realigned during the year, and it is necessary to track performance based on the date of the sale and the salesperson's assignment during that period. The IntervalMatch function is the best approach to create a time-based relationship between the sales transactions and the sales territory assignments.

IntervalMatch: This function is used to match discrete values (e.g., transaction dates) with intervals (e.g., start and end dates for sales territory assignments). By matching the transaction dates with the intervals in the HR table, you can accurately determine which territory and manager were in effect at the time of each sale.

Using IntervalMatch, you can generate point-in-time data that accurately reflects the dynamic nature of sales territory assignments, allowing both sales reps and regional managers to track performance over time.
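The matching logic described above can be illustrated with a minimal sketch. It is written in Python rather than Qlik script, and it is not how Qlik implements IntervalMatch; it only shows the idea of pairing a discrete date with the interval that contains it. The rep IDs, names, dates, and amounts are hypothetical sample data, and the end date is assumed to be inclusive.

```python
from datetime import date

# Hypothetical HR assignment rows: (rep_id, manager, region, start, end).
# Assumption: the end date is inclusive and intervals do not overlap per rep.
assignments = [
    ("R1", "Alice", "North", date(2024, 1, 1), date(2024, 6, 30)),
    ("R1", "Bob", "West", date(2024, 7, 1), date(2024, 12, 31)),
]

# Hypothetical sales transactions: (rep_id, sale_date, amount).
transactions = [
    ("R1", date(2024, 3, 15), 100.0),
    ("R1", date(2024, 9, 2), 250.0),
]


def match_intervals(transactions, assignments):
    """Attach the manager and region in effect on each sale date."""
    matched = []
    for rep_id, sale_date, amount in transactions:
        for a_rep, manager, region, start, end in assignments:
            # A sale matches an assignment when the rep is the same and
            # the sale date falls inside the assignment interval.
            if a_rep == rep_id and start <= sale_date <= end:
                matched.append((rep_id, sale_date, amount, manager, region))
    return matched


matched = match_intervals(transactions, assignments)
for row in matched:
    print(row)
```

In this sample, the March sale is attributed to the first assignment and the September sale to the second, which is the point-in-time behavior the regional managers need.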

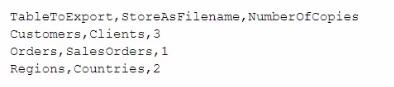

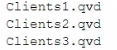

A data architect needs to develop a script to export tables from a model based upon rules from an independent file. The structure of the text file with the export rules is as follows:

These rules govern which table in the model to export, what the target root filename should be, and the number of copies to export.

The TableToExport values are already verified to exist in the model.

In addition, the format will always be QVD, and the copies will be incrementally numbered.

For example, the Customers table would be exported as:

What is the minimum set of scripting strategies the data architect must use?

Answer : A

In the provided scenario, the goal is to export tables from a Qlik Sense model based on rules specified in an external text file. The structure of the text file indicates which table to export, the filename to use, and how many copies to create.

Given this structure, the data architect needs to:

Loop through each row in the text file to process each table.

Use an IF statement to apply conditional checks to the table being exported. The question states that the TableToExport values are already verified to exist, so this step covers conditional logic that ensures the rules are followed correctly rather than a plain existence check.

Use another IF statement to handle the creation of multiple copies, ensuring each file is named incrementally (e.g., Clients1.qvd, Clients2.qvd, etc.).

Key Script Strategies:

Loop: A loop is necessary to iterate through each row of the text file to process the tables specified for export.

IF Statements: The first IF statement checks conditions such as whether the table should be exported (based on additional logic if needed). The second IF statement handles the creation of multiple copies by incrementing the filename.

This approach covers all the necessary logic with the minimum set of scripting strategies, ensuring that each table is exported according to the rules defined.

A data architect needs to load large amounts of data from a database that is continuously updated.

* New records are added, and existing records get updated and deleted.

* Each record has a LastModified field.

* All existing records are exported into a QVD file.

* The data architect wants to load the records into Qlik Sense efficiently.

Which steps should the data architect take to meet these requirements?

Answer : D

When dealing with a database that is continuously updated with new records, updates, and deletions, an efficient data load strategy is necessary to minimize the load time and keep the Qlik Sense data model up-to-date.

Explanation of Steps:

Load the existing data from the QVD:

This step retrieves the already loaded and processed data from a previous session. It acts as a base to which new or updated records will be added.

Load new and updated data from the database. Concatenate with the table loaded from the QVD:

The next step is to load only the new and updated records from the database. This minimizes the amount of data being loaded and focuses on just the changes.

The new and updated records are then concatenated with the existing data from the QVD, creating a combined dataset that includes all relevant information.

Create a separate table for the deleted rows and use a WHERE NOT EXISTS to remove these records:

A separate table is created to handle deletions. The WHERE NOT EXISTS clause is used to identify and remove records from the combined dataset that have been deleted in the source database.
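The three steps above can be sketched as follows. This is an illustration in Python, not Qlik script, and it rests on assumptions that are not in the question: each record has a unique key field called `ID` (a hypothetical name), an updated record replaces the stored record with the same `ID`, and the set of deleted IDs is already known.

```python
from datetime import datetime


def incremental_load(qvd_rows, db_rows, last_reload, deleted_ids):
    """Combine stored rows with recent changes, then drop deleted keys.

    Assumption: each row is a dict with a unique "ID" and a "LastModified"
    timestamp; a changed row replaces the stored row with the same ID.
    """
    # Step 1: start from the rows already stored in the QVD.
    combined = {row["ID"]: row for row in qvd_rows}
    # Step 2: add only rows modified since the last reload.
    for row in db_rows:
        if row["LastModified"] > last_reload:
            combined[row["ID"]] = row
    # Step 3: keep only rows whose ID does not exist among the deleted IDs.
    return [row for key, row in combined.items() if key not in deleted_ids]


# Hypothetical sample data.
last_reload = datetime(2024, 5, 1)
qvd_rows = [
    {"ID": 1, "Value": "a", "LastModified": datetime(2024, 4, 1)},
    {"ID": 2, "Value": "b", "LastModified": datetime(2024, 4, 2)},
    {"ID": 3, "Value": "c", "LastModified": datetime(2024, 4, 3)},
]
db_rows = [
    {"ID": 1, "Value": "a", "LastModified": datetime(2024, 4, 1)},
    {"ID": 2, "Value": "b2", "LastModified": datetime(2024, 5, 10)},  # updated
    {"ID": 4, "Value": "d", "LastModified": datetime(2024, 5, 11)},   # new
]
deleted_ids = {3}

result = incremental_load(qvd_rows, db_rows, last_reload, deleted_ids)
print([(row["ID"], row["Value"]) for row in result])
```

Only the two rows changed after the last reload are taken from the database; everything else comes from the stored data, which is what keeps the load efficient.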

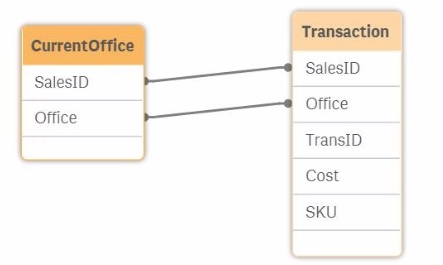

Exhibit

Refer to the exhibit.

The salesperson ID and the office to which the salesperson belongs are stored for each transaction. The data model also contains the current office for the salesperson. The current office of the salesperson and the office the salesperson was in when the transaction occurred must be visible. The current source table view of the model is shown. A data architect must resolve the synthetic key.

How should the data architect proceed?

Answer : C

In the provided data model, both the CurrentOffice and Transaction tables contain the fields SalesID and Office. This leads to the creation of a synthetic key in Qlik Sense because of the two common fields between the two tables. A synthetic key is created automatically by Qlik Sense when two or more tables have two or more fields in common. While synthetic keys can be useful in some scenarios, they often lead to unwanted and unexpected results, so it's generally advisable to resolve them.

In this case, the goal is to have both the current office of the salesperson and the office where the transaction occurred visible in the data model. Here's how each option compares:

Option A: Comment out the Office in the Transaction table: This would remove the Office field from the Transaction table, which would prevent you from seeing which office the salesperson was in when the transaction occurred. This option does not meet the requirement.

Option B: Inner Join the Transaction table to the CurrentOffice table: Performing an inner join would merge the two tables based on the common SalesID and Office fields. However, this might result in a loss of data if there are sales records in the Transaction table that don't have a corresponding record in the CurrentOffice table or vice versa. This approach might also lead to unexpected results in your analysis.

Option C: Alias Office to CurrentOffice in the CurrentOffice table: By renaming the Office field in the CurrentOffice table to CurrentOffice, you prevent the synthetic key from being created. This allows you to differentiate between the salesperson's current office and the office where the transaction occurred. This approach maintains the integrity of your data and allows for clear analysis.

Option D: Force concatenation between the tables: Forcing concatenation would combine the rows of both tables into a single table. This would not solve the issue of distinguishing between the current office and the office at the time of the transaction, and it could lead to incorrect data associations.

Given these considerations, the best approach to resolve the synthetic key while fulfilling the requirement of having both the current office and the office at the time of the transaction visible is to Alias Office to CurrentOffice in the CurrentOffice table. This ensures that the data model will accurately represent both pieces of information without causing synthetic key issues.
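The effect of the alias can be checked with a small sketch that counts the field names two tables share. It is written in Python as an illustration only; the `Amount` field is a hypothetical addition, while `SalesID`, `Office`, and `CurrentOffice` come from the explanation above.

```python
def common_fields(table_a, table_b):
    """Return the field names that appear in both tables, sorted."""
    return sorted(set(table_a) & set(table_b))


# Field lists for the two tables; "Amount" is a hypothetical extra field.
transaction = ["SalesID", "Office", "Amount"]
current_office = ["SalesID", "Office"]

# Two shared fields: this is the condition under which a synthetic key forms.
before = common_fields(transaction, current_office)

# Alias Office to CurrentOffice in the CurrentOffice table only.
aliased = ["CurrentOffice" if f == "Office" else f for f in current_office]

# One shared field remains, so the tables link on SalesID alone.
after = common_fields(transaction, aliased)

print(before)
print(after)
```

After the alias, the tables share only SalesID, and both office fields remain available under distinct names.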

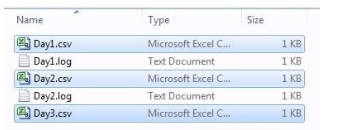

Refer to the exhibit.

A system creates log files and csv files daily and places these files in a folder. The log files are named automatically by the source system and change regularly. All csv files must be loaded into Qlik Sense for analysis.

Which method should be used to meet the requirements?

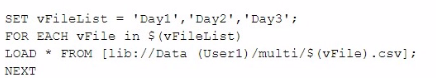

A)

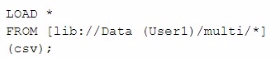

B)

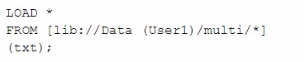

C)

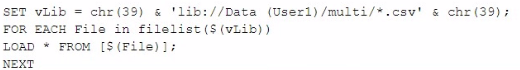

D)

Answer : B

In the scenario described, the goal is to load all CSV files from a directory into Qlik Sense, while ignoring the log files that are also present in the same directory. The correct approach should allow for dynamic file loading without needing to manually specify each file name, especially since the log files change regularly.

Here's why Option B is the correct choice:

Option A: This method involves manually specifying a list of files (Day1, Day2, Day3) and then iterating through them to load each one. While this method would work, it requires knowing the exact file names in advance, which is not practical given that new files are added regularly. Also, it doesn't handle dynamic file name changes or new files added to the folder automatically.

Option B: This approach uses a wildcard (*) in the file path, which tells Qlik Sense to load all files matching the pattern (in this case, all CSV files in the directory). Since the csv file extension is explicitly specified, only the CSV files will be loaded, and the log files will be ignored. This method is efficient and handles the dynamic nature of the file names without needing manual updates to the script.

Option C: This option is similar to Option B but targets text files (txt) instead of CSV files. Since the requirement is to load CSV files, this option would not meet the needs.

Option D: This option uses a more complex approach with filelist() and a loop, which could work, but it's more complex than necessary. Option B achieves the same result more simply and directly.

Therefore, Option B is the most efficient and straightforward solution, dynamically loading all CSV files from the specified directory while ignoring the log files, as required.
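The way a `*.csv` wildcard selects files can be illustrated with a short sketch. It uses Python's `pathlib` in a temporary folder rather than a Qlik load statement, and the file names are hypothetical; it shows only that a pattern fixed on the extension picks up every CSV file and skips the log files, whatever they are named.

```python
import tempfile
from pathlib import Path


def csv_files(folder):
    """Return the names of all files in folder that match *.csv, sorted."""
    return sorted(p.name for p in Path(folder).glob("*.csv"))


with tempfile.TemporaryDirectory() as tmp:
    # Hypothetical folder contents: two CSV files and two log files.
    for name in ["Day1.csv", "Day2.csv", "sys_0412.log", "sys_0413.log"]:
        (Path(tmp) / name).write_text("placeholder\n")
    selected = csv_files(tmp)

print(selected)
```

Because the pattern never mentions a specific file name, new CSV files added to the folder are picked up on the next run without any change to the pattern.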

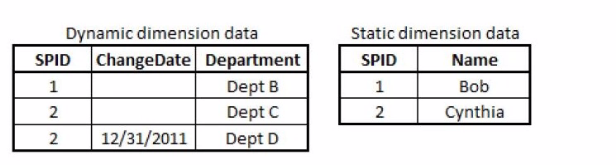

Exhibit.

A large electronics company reassigns salespeople once per year from one Department to another.

SPID is the Salesperson ID; the SPID for each individual salesperson Name remains constant. The Department for a SPID may change; each change is stored in the Dynamic Dimension data.

Four tables need to be linked correctly: a transaction table, a dynamic salesperson dimension, a static salesperson dimension, and a department dimension.

Which script prefix should the data architect use?

Answer : B

In the scenario described, the Dynamic Dimension data tracks changes in department assignments for salespeople over time. To correctly link the transaction data with the salesperson data and ensure that sales are associated with the correct department based on the date, the IntervalMatch prefix should be used.

IntervalMatch is designed to match discrete data (like transaction dates) with a range of dates. In this case, each salesperson's department assignment is valid over a period of time, and the IntervalMatch prefix can be used to link the transaction data with the correct department for each salesperson based on the transaction date.

Option A (Merge): This option is incorrect as it refers to combining data sets, which doesn't address the need to handle the dynamic, date-based department assignments.

Option B (IntervalMatch): This is the correct choice because it allows you to match each transaction with the correct department assignment based on the ChangeDate in the Dynamic Dimension data.

Option C (Partial Reload): This refers to reloading only part of the data, which is not relevant to linking tables based on date ranges.

Option D (Semantic): This option is not applicable. The Semantic prefix loads tables that describe relationships between records, such as one record pointing to another; it does not link data based on time intervals.

Thus, IntervalMatch is the correct method for linking the transaction data with the dynamic salesperson dimension, ensuring that each transaction is associated with the correct department based on the historical assignment data.