Salesforce Certified Tableau Consultant Analytics-Con-301 Exam Questions

During a Tableau Cloud implementation, a Tableau consultant has been tasked with implementing row-level security (RLS). The client has already invested in implementing RLS within its own database for its legacy reporting solution and wants to know whether it can leverage that existing RLS after the Tableau Cloud implementation.

Which two requirements should the Tableau consultant share with the client? Choose two.

Answer : A, C

Comprehensive and Detailed Explanation From Exact Extract:

In Tableau Cloud, database-level RLS can be used only with live connections because:

Tableau Cloud issues SQL queries using the logged-in user's identity.

Extracts break database RLS because the data is copied out of the database and stored in a Tableau .hyper file, where the database's policies no longer apply.

To leverage existing RLS rules, Tableau must query the database directly for the user.

Therefore:

Requirement 1:

The Tableau Cloud username (email) must exist in the database so that the database can enforce RLS using the authenticated identity.

Requirement 2:

Only live data connections support database-native RLS.

Extracts bypass database security and therefore cannot use RLS defined in the database.
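The difference between the two connection types can be sketched with a small simulation. This is an illustration only, not Tableau or database code: the table, the email addresses, and the function names are hypothetical, and the refresh account is assumed to be exempt from the RLS policy.

```python
# Hypothetical data: each row carries the email of the user entitled to see it.
ORDERS = [
    {"order_id": 1, "owner": "ana@example.com", "amount": 120},
    {"order_id": 2, "owner": "ben@example.com", "amount": 75},
    {"order_id": 3, "owner": "ana@example.com", "amount": 40},
]


def database_query(session_user):
    """Stand-in for a database RLS policy: rows are filtered by session user."""
    return [row for row in ORDERS if row["owner"] == session_user]


def live_connection(viewer):
    """Live connection: every view load queries the database as the viewer."""
    return database_query(session_user=viewer)


def build_extract(all_rows):
    """Extract: a snapshot copied out of the database once, at refresh time."""
    return list(all_rows)


# Assumption: the refresh account bypasses the policy and reads every row.
extract = build_extract(ORDERS)

live_rows = live_connection("ben@example.com")      # filtered by the database
extract_rows = extract                              # same snapshot for every viewer
unknown_rows = live_connection("cat@example.com")   # no matching database identity
```

In this sketch the live query returns only the viewer's rows, the extract exposes every row to every viewer, and a viewer whose username has no match in the database gets nothing back.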

Option D is incorrect because RLS is enforced in the database, not configured in Tableau Cloud.

Option B is incorrect because extracts cannot use database RLS.

Thus, correct answers are A and C.

A client's fiscal calendar runs from February 1 through January 31.

How should the consultant configure Tableau to use the client's fiscal calendar when building date charts?

Answer : D

Comprehensive and Detailed Explanation From Exact Extract:

Tableau allows fiscal calendars to be defined at the data source level, affecting how all date fields behave across the workbook.

According to Tableau documentation:

Fiscal calendars must be set using Data Source Date Properties.

Once set, this becomes a default property for all date fields unless overridden.

This allows charts, hierarchies, and date parts to automatically follow the fiscal year starting in February.

Correct procedure:

In the Data pane, right-click the data source.

Select Date Properties.

Set Fiscal Year Start = February.

(Optional) Override the setting for an individual date field through its Default Properties.

This ensures all charts and date hierarchies use the fiscal calendar automatically.
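To make the effect of a February fiscal start concrete, here is a minimal sketch of the date arithmetic. It is not Tableau code: `fiscal_period` and its parameters are hypothetical, and labeling a fiscal year by the calendar year in which it ends is an assumption exposed as a flag, since conventions differ.

```python
from datetime import date


def fiscal_period(d, start_month=2, label_by_end_year=True):
    """Return (fiscal_year, fiscal_quarter) for a date.

    start_month: first month of the fiscal year (2 = February).
    label_by_end_year: assumption; if True, the fiscal year is named after
    the calendar year in which it ends, otherwise after the year it starts.
    """
    # Months elapsed since the start of the fiscal year (0..11).
    offset = (d.month - start_month) % 12
    # Calendar year in which the current fiscal year began.
    start_year = d.year if d.month >= start_month else d.year - 1
    # A January start never crosses a calendar-year boundary.
    crosses_year = start_month != 1
    fiscal_year = start_year + 1 if (label_by_end_year and crosses_year) else start_year
    return fiscal_year, offset // 3 + 1


jan = fiscal_period(date(2024, 1, 15))   # last month of the fiscal year
feb = fiscal_period(date(2024, 2, 1))    # first day of the next fiscal year
```

With the client's calendar, January 15, 2024 falls in the fourth fiscal quarter, and February 1, 2024 opens the first quarter of the following fiscal year.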

Why the other options are incorrect:

A . Right-click on axis > Use Fiscal Calendar

This option does not exist in Tableau.

B . Set Fiscal Year in Data Preview

Not supported; fiscal configuration isn't made in the preview window.

C . Use FISCALYEAR() / FISCALQUARTER()

This is manual, requires custom calculated fields, and does not configure Tableau's built-in fiscal calendar setting, so it is more work and not the intended method.

Only Option D configures the fiscal calendar globally and correctly.

A client has a Tableau Cloud deployment. Currently, dashboards are available only to internal users.

The client needs to embed interactive Tableau visualizations on their public website.

Data is < 5,000 rows, updated infrequently via manual refresh.

Cost is a priority.

Which product should the client use?

Answer : B

Comprehensive and Detailed Explanation From Exact Extract:

Tableau documentation explains:

Tableau Public

Free platform.

Allows public sharing and embedding of fully interactive dashboards.

Ideal for small datasets and infrequent updates.

Does not require user-based licensing.

Embedding is unrestricted because all content is publicly visible.

This perfectly matches the scenario:

Public-facing website

Cost is a priority

Small dataset

Manual, infrequent updates

Why the other options are incorrect:

A . Tableau Cloud (per user)

Requires paid licenses.

Does not allow unrestricted public embedding without expensive add-ons.

Designed for secure internal use, not public web-wide embedding.

C . Tableau Embedded Analytics

A paid embedding solution requiring proper licensing.

Designed for large-scale, secure, programmatic embedding; too costly for this use case.

D . Tableau Server (per core)

Requires server infrastructure & licensing.

Far more expensive than Tableau Public.

Thus, Tableau Public is the correct, cost-effective solution.

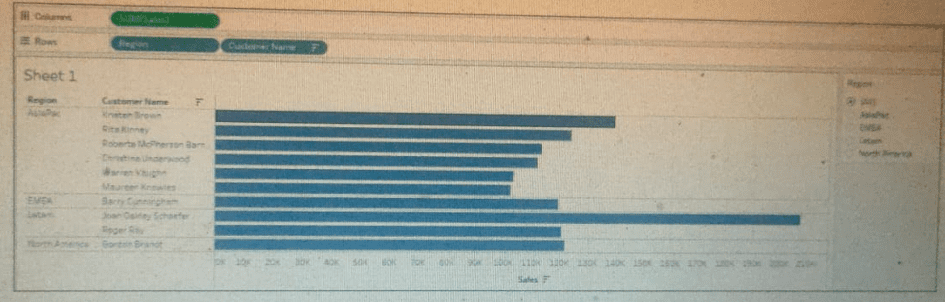

A business analyst is creating a view of the top 10 customers for each region. The analyst has set a "Top 10" filter on Customer Name. However, it did not display the top 10 customers per region, as shown in the image.

Which type of filter should the business analyst add to filter for region?

Answer : D

The issue occurs because of Tableau's Order of Operations.

Key Tableau logic:

Top N filters are set on a dimension, but Tableau evaluates them earlier than ordinary dimension filters.

The order is: context filters first, then Top N filters, then ordinary dimension filters.

When you place Region on Filters (as a standard dimension filter), Tableau:

First applies the Customer Name Top 10 filter across the entire data set, not per region.

Then limits the view to the selected region(s).

This results in seeing only those global Top 10 customers who fall in the selected region, not the Top 10 for that region.

How to fix it:

To force Tableau to compute Top 10 customers within each region, the Region filter must be applied before the Top N Customer filter.

This is done by making Region a Context Filter.

Effect of a Context Filter:

Context filters are executed before the Top N filter.

Region becomes the context.

Tableau then evaluates the Top 10 customers within the data that remains after the Region context filter, that is, within the selected region.

This produces the correct "Top 10 customers per region".
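The effect of the filter order can be sketched outside Tableau with a few rows of made-up data. The customer names, sales figures, and function names below are hypothetical, and a Top 2 is used instead of a Top 10 to keep the example short.

```python
from collections import defaultdict

# Hypothetical rows: (region, customer, sales).
ROWS = [
    ("East", "Ann", 100), ("East", "Bob", 90), ("East", "Cy", 80),
    ("West", "Dee", 50), ("West", "Eli", 40), ("West", "Fay", 30),
]


def top_n_customers(rows, n):
    """Return the n customers with the highest total sales in the given rows."""
    totals = defaultdict(int)
    for _region, customer, sales in rows:
        totals[customer] += sales
    ranked = sorted(totals, key=totals.get, reverse=True)
    return set(ranked[:n])


def region_as_dimension_filter(rows, region, n):
    """Top N runs first over all rows; the region filter is applied afterwards."""
    keep = top_n_customers(rows, n)
    return sorted(c for r, c, _ in rows if r == region and c in keep)


def region_as_context_filter(rows, region, n):
    """The region filter runs first; Top N is computed on what remains."""
    in_context = [row for row in rows if row[0] == region]
    return sorted(top_n_customers(in_context, n))


as_dimension = region_as_dimension_filter(ROWS, "West", 2)
as_context = region_as_context_filter(ROWS, "West", 2)
```

Here the dimension-filter order returns no West customers at all, because both global top customers are in East, while the context-filter order returns the two best West customers.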

Why the other options are incorrect:

A . Extract filter

Applies once when creating the extract; does not control Top N logic inside the workbook.

B . Dimension filter

This is what the analyst already has, and it causes the unwanted behavior because it is applied after the Top N filter.

C . Table Calculation filter

Top N is not a table calculation; table calc filters cannot fix this problem.

Only the Context Filter changes the execution order so Top N works per region.

A company's Tableau Cloud admin wants to maintain control over what content gets published to its site for viewers, while also supporting self-service for dashboard creators.

Which governance strategy should the admin implement?

Answer : A

Comprehensive and Detailed Explanation From Exact Extract:

Tableau's recommended content governance model for Server and Cloud emphasizes project-based separation between development ("sandbox") content and certified, production-ready content.

Key points from Tableau governance guidance:

Organizations should define sandbox projects where creators can freely publish and iterate on workbooks and data sources.

Once content is reviewed and validated, it is promoted into "production" projects that are designated for trusted content for viewers.

This model allows self-service authoring while keeping tight control over what is exposed to broad viewer audiences.

Option A exactly reflects this model: sandbox projects for ad hoc content, and production projects for validated content.

Option B uses separate sites and the Content Migration Tool, which is heavier to manage and usually reserved for cross-environment moves (such as dev to prod), not necessary for basic project-level governance in a single Tableau Cloud site.

Option C relies on Personal Space. Tableau recommends Personal Space for private drafts, not as the main promotion path, and it is not the primary governance pattern for viewer-facing content.

Option D restricts data source viewing but does not provide a full governance strategy for managing ad hoc versus production dashboards.

Therefore, the correct strategy is sandbox projects plus production projects, which is option A.

A consultant has a view using a table calculation to calculate percent of total Sales by Category. The consultant would like to filter out particular categories, but wants the percent of total calculation to remain steady even as they filter items in or out.

What should the consultant do to achieve the desired impact?

Answer : D

Comprehensive and Detailed Explanation From Exact Extract:

The key detail of the question:

"filter out particular categories, but wants the percent of total calculation to remain steady even as they filter items in or out."

This means the percent of total must ignore filters.

Table calculations are computed after dimension and measure filters (only table calculation filters are applied later), so filtering categories directly changes the denominator.

Tableau's documented solution for "percent of total that does not change with filtering" is:

Use a FIXED LOD to define the stable denominator

A FIXED LOD expression "freezes" the aggregation level and is unaffected by dimension filters unless those filters are explicitly added to context.

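This allows the consultant to compute the overall total:

{ FIXED : SUM([Sales]) }

or each category's own total:

{ FIXED [Category] : SUM([Sales]) }

Then percent of total becomes (the LOD is wrapped in ATTR because its result is row-level and cannot be mixed directly with an aggregate):

SUM([Sales]) / ATTR({ FIXED : SUM([Sales]) })

The FIXED LOD is computed before dimension filters are applied, ensuring the percent remains steady.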

This is exactly what Tableau documentation explains under:

Level of Detail Expressions

LODs and Order of Operations

Using LODs to create filter-independent calculations

Thus, D is correct.
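The difference between the two denominators can be illustrated with a short simulation. This is a sketch rather than Tableau code: the category totals and function names are hypothetical, and the `visible` set stands in for an ordinary dimension filter on Category.

```python
# Hypothetical sales totals per category.
SALES = {"Furniture": 200, "Office Supplies": 300, "Technology": 500}


def percent_of_total_table_calc(sales, visible):
    """Table calculation: the denominator only sees rows left after filtering."""
    shown = {c: v for c, v in sales.items() if c in visible}
    total = sum(shown.values())
    return {c: v / total for c, v in shown.items()}


def percent_of_total_fixed(sales, visible):
    """FIXED-style total: the denominator is computed before the filter."""
    fixed_total = sum(sales.values())
    return {c: v / fixed_total for c, v in sales.items() if c in visible}


# Filter Technology out of the view.
visible = {"Furniture", "Office Supplies"}
table_calc = percent_of_total_table_calc(SALES, visible)
fixed = percent_of_total_fixed(SALES, visible)
```

With Technology filtered out, the table-calculation version rescales Furniture from 20% to 40%, while the fixed-total version keeps it at 20%.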

Why the other answers are wrong:

A. Context Filter

Context filters are applied before FIXED LODs are computed.

If Category is put into context, LOD totals would be reduced.

Table calculation totals still change because table calcs run near the bottom of the pipeline.

B. Data Source Filter

Data source filters remove rows before all table calculations and LODs.

This would make the percent of total incorrect, because filtered-out categories would physically be gone.

C. Aggregate Expression

An aggregate field alone does not solve the issue because it still respects dimension filters.

A consultant used Tableau Data Catalog to determine which workbooks will be affected by a field change.

Catalog shows:

Published Data Source: 7 connected workbooks

Field search (Lineage tab): 6 impacted workbooks

The client asks: Why 7 connected, but only 6 impacted?

Answer : C

Comprehensive and Detailed Explanation From Exact Extract:

Key Tableau Catalog behaviors:

Connected workbooks = any workbook linked to the published data source.

Impacted workbooks = only workbooks that use the specific field.

If a workbook connects to the data source but never uses the field, it appears as "connected" but not impacted.

This explains EXACTLY why:

7 workbooks are connected

Only 6 use the changed field

Therefore only 6 are impacted

This matches Option C.
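The connected-versus-impacted distinction amounts to a simple set filter, sketched below. This is not the Tableau Catalog API: the workbook names, field names, and functions are hypothetical and only mirror the 7-versus-6 counts in the scenario.

```python
# Hypothetical lineage: fields each connected workbook actually uses.
FIELDS_USED = {
    "Workbook 1": {"Sales", "Region"},
    "Workbook 2": {"Region"},
    "Workbook 3": {"Region", "Profit"},
    "Workbook 4": {"Sales", "Region"},
    "Workbook 5": {"Region", "Order Date"},
    "Workbook 6": {"Region"},
    "Workbook 7": {"Profit"},  # connected, but never uses Region
}


def connected_workbooks(fields_used):
    """Every workbook linked to the published data source."""
    return sorted(fields_used)


def impacted_workbooks(fields_used, field):
    """Only the workbooks that reference the field being changed."""
    return sorted(wb for wb, fields in fields_used.items() if field in fields)


connected = connected_workbooks(FIELDS_USED)
impacted = impacted_workbooks(FIELDS_USED, "Region")
not_impacted = sorted(set(connected) - set(impacted))
```

In this made-up lineage all seven workbooks are connected, six reference the changed field, and the remaining one accounts for the gap.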

Why the other options are incorrect:

A . Field used twice

Still counts as one workbook, so it does not explain the discrepancy.

B . Permission issue

If permissions blocked visibility, the data source would not list 7 connections.

D . Custom SQL use

Catalog can still detect field usage through metadata lineage; Custom SQL does NOT hide workbook dependency.

Thus, only Option C logically explains the scenario.
