Salesforce Certified Platform Integration Architect Plat-Arch-204 Exam Questions

A customer is evaluating the Platform Events solution and would like help in comparing/contrasting it with Outbound Messaging for real-time/near-real time needs. They expect 3,000 customers to view messages in Salesforce. What should be evaluated and highlighted when deciding between the solutions?

Answer : C

A customer of Salesforce has used Platform Events to integrate their Salesforce instance with an external third-party artificial intelligence (AI) system. The AI system provides a prediction score for each lead that is received by Salesforce. Once the prediction score is received, the lead information is saved to Platform Events for other processes. The trigger on the Platform Events has failed ever since it was rolled out to production. Which type of monitoring should the integration consultant have considered to monitor this integration?

Answer : A

Troubleshooting failures in Platform Event-triggered logic is challenging because these triggers execute under the 'Automated Process' system user, making them invisible to standard user-level monitoring. To diagnose why a trigger is failing in production, an Integration Architect must set up debug logs specifically for that trigger or the automated process user.

Debug logs provide a granular view into the execution path, including Apex errors, governor limit consumption, and specific DML failures. Without these logs, it is very difficult to determine whether the failure is due to a null pointer exception, a validation rule violation, or a record locking conflict.

Option B is a design-time validation step; while important, it would not help monitor or troubleshoot a runtime failure in a deployed trigger. Option C focuses on high-level consumption limits; while reaching the 'Created Per Hour' limit would prevent events from being published, it would not explain why an existing trigger is failing once the event has already arrived in the bus. By proactively establishing debug logs for the integration's triggers, the consultant can pinpoint the exact line of code or system constraint causing the failure, ensuring a faster 'Mean Time to Repair' (MTTR) for critical AI-driven business processes.

Northern Trail Outfitters is planning to perform nightly batch loads into Salesforce using the Bulk API. The CIO wants monitoring recommendations for these jobs. Which recommendation should help meet the requirements?

Answer : A

For monitoring high-volume Bulk API jobs, the standard and most efficient architectural recommendation is to use the native Bulk Data Load Jobs page in Salesforce Setup.

This page provides a comprehensive, out-of-the-box view of all asynchronous API jobs, including their status (Queued, In Progress, Completed, Failed), the number of records processed, and any overall job errors. It allows administrators to download the result files for each batch to see record-level successes and failures without the overhead of custom code or data storage.

Option B is generally discouraged for high-volume nightly loads. Since the Bulk API is designed to bypass standard synchronous logic for performance, writing errors to a custom object for millions of records would consume significant data storage and could trigger additional governor limit issues during the load itself. Option C is ineffective for Bulk API monitoring; debug logs capture Apex execution but do not monitor the background processing of asynchronous Bulk API batches, and they would quickly become overwhelmed by the volume of data. For enterprise-grade monitoring, the native UI provides the necessary visibility into job health with zero impact on platform performance or storage.

A business requires automating the check and updating of the phone number type classification for all incoming calls. Up to 100,000 calls per day are received, and the business is flexible with timing (every 6-12 hours is sufficient). A Remote-Call-In and/or Batch Synchronization pattern via middleware is planned. Which component should an integration architect recommend to implement these patterns?

Answer : C

In a Remote-Call-In or Batch Synchronization pattern where an external middleware initiates the connection to Salesforce, the external system must be authenticated. The standard Salesforce framework for this is the Connected App.

A Connected App provides a framework for external applications to integrate with Salesforce using APIs and standard protocols like OAuth 2.0. For a high-volume (100,000 records) batch process initiated by middleware every 6-12 hours, the architect would recommend a Connected App using a secure flow, such as the OAuth 2.0 JWT Bearer Flow. This allows the middleware to authenticate as a specific 'Integration User' without storing clear-text passwords.
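To make the JWT Bearer Flow more concrete, the following is a minimal sketch of the assertion the middleware would build before calling the token endpoint. The consumer key, username, and three-minute lifetime are illustrative assumptions, and the RS256 signing step (which needs the private key matching the certificate uploaded to the Connected App, plus a cryptography library) is deliberately left out.

```python
import base64
import json


def b64url(data: bytes) -> str:
    """Base64url-encode without padding, the encoding JWT segments use."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def build_signing_input(consumer_key: str, username: str, audience: str, now: int) -> str:
    """Return the unsigned 'header.claims' string that would be signed with RS256."""
    header = {"alg": "RS256"}
    claims = {
        "iss": consumer_key,  # Connected App consumer key (hypothetical value below)
        "sub": username,      # the integration user the middleware acts as
        "aud": audience,      # login URL of the target org
        "exp": now + 180,     # short lifetime; 3 minutes is an assumption here
    }
    return b64url(json.dumps(header).encode()) + "." + b64url(json.dumps(claims).encode())


def build_token_request_body(signed_jwt: str) -> dict:
    """Form fields posted to the org's /services/oauth2/token endpoint."""
    return {
        "grant_type": "urn:ietf:params:oauth:grant-type:jwt-bearer",
        "assertion": signed_jwt,
    }


signing_input = build_signing_input(
    consumer_key="HYPOTHETICAL_CONSUMER_KEY",
    username="integration.user@example.com",
    audience="https://login.salesforce.com",
    now=1_700_000_000,
)
# A real client would append "." + b64url(signature) before sending the request.
print(signing_input.count("."))
```

No password appears anywhere in the request: the middleware proves its identity with the signature alone, which is why this flow suits unattended batch jobs.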

Option A (API Gateway) is used to manage and secure outbound calls from Salesforce or between external services; it does not handle inbound authentication to the Salesforce org itself. Option B (Remote Site Settings) is an allowlist configuration used purely to let Salesforce make outbound callouts to a specific URL; it provides no authentication mechanism for systems calling into Salesforce. By recommending a Connected App, the architect ensures that the middleware has the necessary permissions (scopes) to use the Bulk API, which is the appropriate mechanism for the 100,000-record volume, while maintaining a secure, auditable, and standard integration boundary.

A company has an external system that processes and tracks orders. Sales reps manage their leads and opportunity pipeline in Salesforce. The company decided to integrate Salesforce and the Order Management System (OMS) with minimal customization and code. Sales reps need to see order history in real-time. The legacy system is on-premise and connected to an ESB. There are 1,000 reps creating 15 orders each per shift, mostly with 20-30 line items. How should an integration architect integrate the two systems based on these requirements?

Answer : C

To meet the requirements of minimal customization and code and of real-time visibility without data replication, the architect should utilize Salesforce Connect with External Objects and an OData connector.

Salesforce External Objects allow the OMS data to be viewed within Salesforce as if it were stored natively, but the data remains in the on-premise system. This fulfills the requirement for sales reps to see 'up-to-date information' because every time they view the record, Salesforce Connect fetches the latest data via the ESB's OData endpoint. This Data Virtualization pattern is the most efficient choice for real-time history where users only need to view the data occasionally.

Options A and B involve Data Replication via ETL, which would store the order data inside Salesforce. Given the volume (1,000 reps × 15 orders = 15,000 orders per shift, and at roughly 25 line items each, about 375,000 line-item records per shift), this would rapidly consume Salesforce data storage limits and require significant custom development for the ETL logic and REST APIs. Furthermore, ETL is typically batch-oriented and would not provide the true 'real-time' view requested. By using an OData connector, the architect leverages a declarative, 'no-code' solution that satisfies the minimal-customization requirement and provides immediate access to order details and line items without the cost of data storage.
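The volume estimate above can be checked with a short calculation. This is a rough sketch using the figures from the question; the 2 KB-per-record storage figure is an assumption for illustration and should be confirmed against the org's actual storage accounting.

```python
def order_volume(reps: int, orders_per_rep: int, line_items_per_order: int) -> dict:
    """Estimate records created per shift if orders were replicated into Salesforce."""
    orders = reps * orders_per_rep
    line_items = orders * line_items_per_order
    return {
        "orders": orders,
        "line_items": line_items,
        "total_records": orders + line_items,
    }


ASSUMED_KB_PER_RECORD = 2  # assumption: flat per-record storage cost

# 20 and 30 are the bounds given in the question; 25 is the midpoint.
for items in (20, 25, 30):
    volume = order_volume(reps=1_000, orders_per_rep=15, line_items_per_order=items)
    storage_mb = volume["total_records"] * ASSUMED_KB_PER_RECORD / 1024
    print(items, volume, round(storage_mb, 1))
```

Even at the low end of the range, replication would add hundreds of thousands of records per shift, which is the cost that data virtualization avoids.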

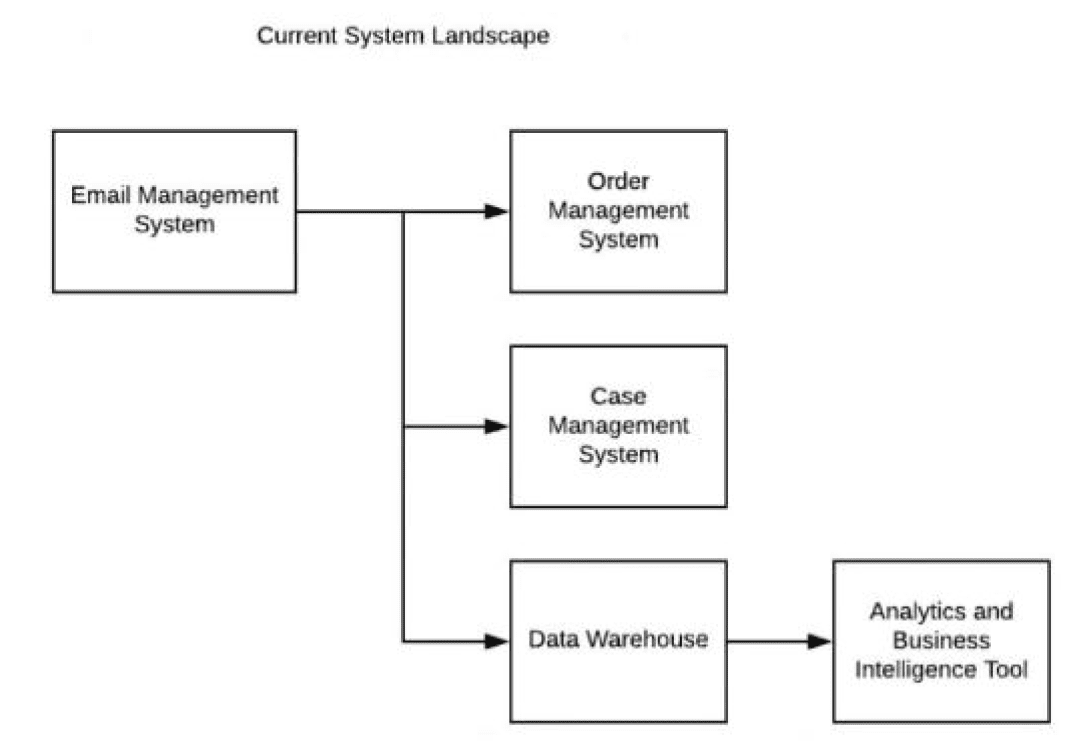

An enterprise customer is planning to implement Salesforce to support case management.

Below is their current system landscape diagram. Considering Salesforce capabilities, what should the integration architect evaluate when integrating Salesforce with the current system landscape?

Answer : A

An Integration Architect's primary responsibility when evaluating a landscape for a new Salesforce implementation is to identify the system of record for each business process and determine which legacy systems will be replaced by Salesforce. In this scenario, the customer is implementing Salesforce specifically to support case management.

According to the provided landscape diagram, the Case Management System currently exists as a standalone entity. Since Salesforce Service Cloud provides native, best-in-class case management capabilities, this legacy system is the primary candidate for retirement. Retiring the legacy Case Management system avoids data fragmentation and ensures that Salesforce serves as the single source of truth for support interactions.

However, for Salesforce to function effectively as a new case management hub, it must integrate with the remaining surrounding systems:

Email Management System: This system likely handles inbound customer communications. An architect must evaluate integrating this with Salesforce (via Email-to-Case or a specialized connector) so that incoming emails automatically generate or update cases.

Order Management System (OMS): Support agents often need to view order history or status to resolve customer inquiries. Integrating Salesforce with the OMS allows for a 360-degree view, enabling agents to see relevant order data directly within the Salesforce case console.

Data Warehouse: For long-term reporting, trend analysis, and a unified customer profile, case data from Salesforce needs to be pushed to the Data Warehouse. This ensures that the Analytics and Business Intelligence Tool downstream can report on support metrics alongside other enterprise data.

Therefore, the architect should evaluate integrations with the Data Warehouse, Order Management, and Email Management System. Options B and C are incorrect because they suggest integrating with the 'Case Management System,' which is the very system being superseded by Salesforce's native capabilities. By focusing on the integration of these three supporting systems, the architect ensures a seamless transition where Salesforce is fully enriched with the necessary external data to drive support excellence.

A company uses Customer Community for course registration. The payment gateway takes more than 30 seconds to process transactions. Students want results in real time to retry if errors occur. What is the recommended integration approach?

Answer : C

Standard synchronous Apex callouts have a timeout limit, and more importantly, Salesforce limits the number of long-running requests (those lasting longer than 5 seconds) that can execute concurrently. If a payment gateway consistently takes 30 seconds, a few simultaneous users could easily exhaust the org's concurrent request limit, causing the entire system to stop responding.

The Continuation pattern (Option C) is designed specifically for this 'long-wait' scenario. It allows the Apex request to be suspended while waiting for the external service to respond, freeing up the Salesforce worker thread to handle other users. Once the gateway responds, the suspended process resumes and returns the result to the student's browser. This provides the 'real-time' experience required for the student to retry immediately without the risk of bringing down the entire community due to thread exhaustion.
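The thread-exhaustion risk can be estimated with simple queueing arithmetic: the average number of in-flight requests equals the arrival rate multiplied by the request duration. The sketch below applies that to the 30-second gateway; the concurrent limit of 10 is an assumed value for illustration, so check the limit that applies to the actual org.

```python
LONG_RUNNING_THRESHOLD_S = 5  # from the explanation above: requests longer than 5 seconds count
ASSUMED_CONCURRENT_LIMIT = 10  # assumption for illustration only


def concurrent_long_running(arrivals_per_minute: float, duration_s: float) -> float:
    """Average in-flight synchronous requests that count toward the limit."""
    if duration_s <= LONG_RUNNING_THRESHOLD_S:
        return 0.0  # short requests are not counted as long-running
    return arrivals_per_minute * duration_s / 60


def max_sustainable_rate_per_minute(limit: int, duration_s: float) -> float:
    """Highest payment rate before synchronous callouts saturate the limit."""
    return limit * 60 / duration_s


print(concurrent_long_running(arrivals_per_minute=20, duration_s=30))
print(max_sustainable_rate_per_minute(ASSUMED_CONCURRENT_LIMIT, duration_s=30))
```

Under these assumptions, a modest registration rush is enough to saturate synchronous callouts, whereas requests suspended through a Continuation do not hold a thread while they wait.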