VMware Cloud Foundation 9.0 Support 2V0-15.25 Exam Questions

A user wishes to publish a VMware Cloud Foundation (VCF) Operations Orchestrator workflow to their VCF Automation project catalog, but is blocked from publishing any workflows.

The following information has been provided:

* In the VCF Automation Organization portal, the user cannot see the Workflows option under Content Hub.

* The organization is not a Provider Consumption Organization.

Which are the two likely causes of this issue? (Choose two.)

Answer : A, D

In VMware Cloud Foundation 9.0, publishing a VCF Operations Orchestrator workflow to a VCF Automation project catalog requires the Organization to have a valid VCF Operations Orchestrator integration. The scenario states that the user cannot see the Workflows option under Content Hub and that the organization is not a Provider Consumption Organization (PCO). According to the VCF 9.0 documentation, only organizations with a VCF Operations Orchestrator integration can publish workflows to the catalog. Depending on the environment, that integration is either an embedded or an external orchestrator. If neither is integrated with the organization, workflows cannot be listed or published. This matches the documented VCF Automation and VCF Operations Orchestrator design requirements, under which workflow publishing is available only when an orchestrator instance is properly registered.

Additionally, user role permission issues could prevent workflow visibility, but the key blockers described in the scenario are the missing workflow section and the organization type. Because the organization is not a PCO, advanced provider features, including workflow publishing, are disabled unless a proper orchestrator integration exists. Therefore, the two most likely causes are:

A: An external VCF Operations Orchestrator is not integrated with their Organization.

D: An embedded VCF Operations Orchestrator is not integrated with their Organization.

These two conditions directly match the documented behavior in VMware Cloud Foundation 9.0.

An administrator is adding a vSphere Supervisor using VMware NSX classic to an existing VMware Cloud Foundation (VCF) cluster using Distributed Connectivity. When attempting to enable the vSphere Supervisor for the domain, the cluster shows up as incompatible with the reason:

No valid edge cluster for VDS 50 0b 4d 9a cb 32 62 4d - 76 78 6b 92 cd 87 c4 5a

Why is the cluster showing up as incompatible?

Answer : A

When enabling vSphere Supervisor with NSX Classic (using the traditional NSX-T Data Center networking stack rather than the newer NSX VPC mode), the vSphere Workload Management wizard filters the list of available NSX Edge Clusters to ensure they are explicitly designated for use with Kubernetes workloads.

The 'WCPReady' Tag Requirement: The primary mechanism vCenter uses to identify a valid, compatible Edge Cluster for Workload Management is a specific tag on the NSX Edge Cluster object. This tag must be WCPReady (case-sensitive).

Symptoms: If this tag is missing, which often happens when the Edge Cluster was created manually in NSX Manager rather than through the SDDC Manager automation, the validation process fails to find any usable clusters. This results in the specific error message 'No valid edge cluster for VDS [UUID]', or simply an empty list of compatible clusters in the wizard.

Resolution: The administrator must log in to the NSX Manager, navigate to System > Fabric > Nodes > Edge Clusters, select the target cluster, and manually add the tag WCPReady (often with the scope 'Created for', though the tag itself is the critical filter).
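The tag-based filtering described above can be pictured with a small sketch. This is only an illustration of the matching logic, not NSX or vCenter code: the dictionary layout, the cluster names, and the function `compatible_edge_clusters` are all hypothetical. The only detail taken from the explanation is that the tag value `WCPReady` is matched case-sensitively.

```python
from typing import Dict, List

# Tag value named in the explanation; the comparison is case-sensitive.
REQUIRED_TAG = "WCPReady"


def compatible_edge_clusters(edge_clusters: List[Dict]) -> List[str]:
    """Return the names of edge clusters that carry the required tag.

    Each cluster is a hypothetical dict such as:
    {"name": "ec-01", "tags": [{"scope": "Created for", "tag": "WCPReady"}]}
    """
    return [
        cluster["name"]
        for cluster in edge_clusters
        if any(t.get("tag") == REQUIRED_TAG for t in cluster.get("tags", []))
    ]


# Hypothetical inventory: one tagged cluster, one untagged, one with wrong case.
clusters = [
    {"name": "ec-sddc", "tags": [{"scope": "Created for", "tag": "WCPReady"}]},
    {"name": "ec-manual", "tags": []},
    {"name": "ec-wrong-case", "tags": [{"scope": "Created for", "tag": "wcpready"}]},
]

print(compatible_edge_clusters(clusters))  # ['ec-sddc']
```

In this model, an inventory containing only `ec-manual` would yield an empty list, which corresponds to the 'No valid edge cluster' symptom.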

Why other options are incorrect:

B: Large Edge nodes are actually a requirement for vSphere Supervisor (Small/Medium are typically unsupported for this role), so deploying them as Large would make the cluster compatible, not incompatible.

C: vSphere Supervisor fully supports Distributed Connectivity (connecting directly to the VDS), so Central Connectivity is not a hard requirement causing this specific error.

D: While AVI (NSX Advanced Load Balancer) is a supported load balancer, the 'No valid edge cluster' error occurs during the Edge Cluster discovery phase, preceding the load balancer configuration.

An administrator created a new VPC with an associated subnet, configured with a DHCP Server.

When attaching virtual machines to the VPC subnet, an IP address is assigned, but the DNS and NTP settings are not configured.

How can the administrator update the DHCP server configuration to set DNS and NTP?

Answer : A

In VMware Cloud Foundation 9.0 Automation, each VPC is governed by a VPC Service Profile, which defines the default network services applied to the VPC's DHCP server, including DNS servers, NTP servers, DHCP lease values, and other network attributes. When a subnet is associated with a VPC and DHCP is enabled, the DHCP service inherits its DNS and NTP configuration from the VPC Service Profile.

In the scenario, virtual machines attached to the new VPC subnet receive an IP address, but not DNS or NTP settings. This indicates that the DHCP server is functioning correctly, but its service profile lacks DNS and NTP configuration. Updating the default VPC Service Profile allows the administrator to specify DNS resolver addresses and NTP time sources, which will then automatically be pushed to all DHCP-enabled subnets under that VPC.
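The inheritance described above can be sketched as a simple check on a profile object. This is a toy model under stated assumptions: the keys `dns_servers` and `ntp_servers`, the function `missing_dhcp_options`, and the example addresses are hypothetical and do not represent the actual VPC Service Profile schema.

```python
from typing import Dict, List


def missing_dhcp_options(service_profile: Dict[str, List[str]]) -> List[str]:
    """List the DHCP options a subnet would inherit as empty from the profile."""
    wanted = ("dns_servers", "ntp_servers")  # hypothetical key names
    return [key for key in wanted if not service_profile.get(key)]


# Hypothetical default profile with no DNS or NTP configured:
# clients would get an IP address but no DNS or NTP settings.
default_profile = {"dns_servers": [], "ntp_servers": []}
print(missing_dhcp_options(default_profile))  # ['dns_servers', 'ntp_servers']

# After the administrator updates the profile (documentation-range addresses).
default_profile["dns_servers"] = ["192.0.2.53"]
default_profile["ntp_servers"] = ["192.0.2.123"]
print(missing_dhcp_options(default_profile))  # []
```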

Option B (changing to DHCP Relay) is incorrect because relay mode does not configure DNS/NTP; it delegates DHCP to an external DHCP server. Option C (enable DNS/NTP passthrough) is not a feature of NSX DHCP. Option D (changing connectivity mode) affects routing and service placement, not DHCP options.

An administrator is responsible for supporting a VMware Cloud Foundation (VCF) fleet and has been tasked with deploying VMware Cloud Foundation (VCF) Operations for Logs. To complete this task, the administrator needs to configure a new offline depot within VCF Operations fleet management.

The following information has been provided to the administrator to complete the task:

* Offline Depot Type: Webserver

* Repository URL: http://10.138.148.160/depot/

* Username: depotuser

* Password: P@ssword123!

* Accept imported certificate: True

When the administrator attempts to configure the depot, the following error message is presented:

Either the depot URL provided is partial or invalid or not reachable or download token is invalid. Check logs for more details.

The administrator completes the following troubleshooting steps:

* Confirms the Repository URL is valid by connecting to it through a web browser.

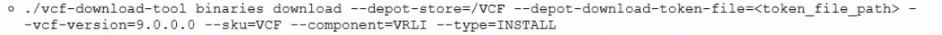

* Reviews the command used to create the depot:

* Confirms that the downloaded folder and files were copied into the /depot shared folder on the web server hosting the repository

Which two actions must the administrator take to resolve the issue? (Choose two.)

Answer : A, E

To resolve the 'partial or invalid or not reachable' error when configuring the VCF 9.0 Offline Depot, the administrator must address two critical misconfigurations related to the protocol and the file path mapping:

Switch to HTTPS (Option E): VMware Cloud Foundation 9.0 enforces HTTPS by default for all depot connections to ensure security. The administrator's configuration uses http://, which the VCF Fleet Manager will reject (or fail to connect to) unless the system has been explicitly modified via internal properties files to allow insecure transport. Changing the Repository URL to https://10.138.148.160/depot/ aligns with the default security requirements of the VCF 9.0 binaries download and validation process.

Reconfigure Web Server Pathing (Option A): The command --depot-store=/VCF instructs the download tool to create a repository structure rooted at /VCF. The administrator then copied this 'downloaded folder' into the /depot folder on the web server, resulting in a nested path (e.g., /var/www/html/depot/VCF/...). However, the configured URL is .../depot/, which points to the parent directory where the required index.json or metadata files are not immediately visible. The administrator must reconfigure the web server (e.g., via DocumentRoot or Alias settings) to explicitly share the specific /vcf/ (or /VCF/) folder content at the target URL so the Fleet Manager can locate the manifest files.
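The two misconfigurations above can be illustrated with a short sketch that checks a depot URL against a web server layout. It is a simplified model, not Fleet Manager logic: the function `check_depot_url` is hypothetical, and `/var/www/html` is the example document root mentioned in the explanation, not a confirmed path.

```python
import posixpath
from urllib.parse import urlparse


def check_depot_url(repo_url: str, document_root: str, content_dir: str) -> list:
    """Return a list of problems found with the depot URL (empty if none).

    document_root: directory the web server maps to "/" (assumed layout).
    content_dir:   directory that actually holds the depot metadata.
    """
    problems = []
    parsed = urlparse(repo_url)

    # Check 1: the explanation states that HTTPS is enforced by default.
    if parsed.scheme != "https":
        problems.append("scheme is not https")

    # Check 2: the URL path must resolve to the directory holding the content.
    served_dir = posixpath.normpath(
        posixpath.join(document_root, parsed.path.lstrip("/"))
    )
    if served_dir != posixpath.normpath(content_dir):
        problems.append(f"URL serves {served_dir}, but content is in {content_dir}")

    return problems


# Scenario from the question: http URL, content nested one level below /depot.
for problem in check_depot_url(
    "http://10.138.148.160/depot/", "/var/www/html", "/var/www/html/depot/VCF"
):
    print(problem)
```

In this model, once the URL uses `https` and the web server shares the folder containing the metadata at the `/depot/` path, the function returns an empty list.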

An administrator has been tasked with expanding an existing VMware Cloud Foundation (VCF) workload domain by adding a new cluster. The VCF fleet has the following configuration:

* Three workload domains, including the management domain, are configured.

* The management domain (WLD-01) and one of the workload domains (WLD-02) are running VCF 9.0.

* The other workload domain (WLD-03) is running VCF 5.2.1 and is an isolated workload domain.

When attempting to perform the required steps using the vSphere Client UI, the cluster cannot be added to the WLD-02 workload domain. What step should the administrator perform to complete the workload domain expansion?

Answer : D

VMware Cloud Foundation 9.0 introduces a major architectural redesign that replaces the traditional SDDC Manager-centric domain management model with a unified Fleet Management architecture implemented through VCF Operations Fleet Manager. In this model, each Workload Domain operates with its own vCenter, but Enhanced Linked Mode (ELM) is removed to improve isolation, reduce blast radius, and support multi-site scalability. As a result, administrators logged into the vSphere Client of the Management Domain can no longer manage or expand clusters in other Workload Domains, which explains why the vSphere UI blocks the attempted expansion of WLD-02.

Fleet Manager becomes the new authoritative control plane for lifecycle, topology, host commissioning, and workload domain expansion. Only Fleet Manager maintains the full global view necessary to orchestrate cluster addition operations across distributed vCenters and domains. Because WLD-02 is running VCF 9.0 and is fully fleet-aware, its expansion must occur through VCF Operations Fleet Manager, not through the vSphere Client or legacy SDDC Manager workflows.

Options involving WLD-03 are invalid since that domain is running VCF 5.2.1, is isolated, and cannot participate in fleet-aware operations. SDDC Manager (A) is no longer the correct interface for VCF 9.0 domain expansion operations.

An administrator is creating a new workload domain from VMware Cloud Foundation (VCF) Operations. They are blocked at the Hosts selection screen as no ESX hosts are available. They see the following message:

"No suitable hosts available to create a VI workload domain. Hosts must be unassigned, commissioned with at least one physical NIC and the same storage type as the VI workload domain, and the ESX version must be compatible with the lowest ESX version present in the management domain."

How can the administrator commission new hosts to enable the creation of the VI workload domain?

Answer : D

In VMware Cloud Foundation 9.0, all host commissioning operations are performed through VCF Operations, not through vSphere Client, Cloud Builder, or the VCF Installer. Once VCF is deployed, Cloud Builder is no longer used, and the VCF Installer is for lifecycle and bundle management, not for host workflows. The vSphere Client also cannot commission hosts because host commissioning is a foundational VCF workflow requiring hardware validation, storage type checks, NIC checks, HCL conformance, and version compatibility.

The error message provided:

"Hosts must be unassigned, commissioned with at least one physical NIC and the same storage type... and the ESX version must be compatible..."

is a standard VCF 9.0 validation message shown when no commissioned hosts matching the workload domain requirements exist. VMware documentation states that hosts must be commissioned under:

VCF Operations > Fleet Management > Hosts > Commission Host

Here, VCF validates:

* Storage type (vSAN ESA, vSAN OSA, NFS, FC, etc.)

* Network pool membership (matching the WLD plan)

* ESX version compatibility with the Management Domain baseline

* NIC mapping and certifications

Until hosts are commissioned, they cannot appear in the workload domain creation wizard.
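The conditions in the validation message can be sketched as a filter over a host inventory. This is a minimal illustration, not VCF code: the `Host` dataclass, its field names, and `suitable_hosts` are hypothetical, and "compatible" is simplified here to "at least the lowest ESX version in the management domain", which is an assumption rather than the documented compatibility rule.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Host:
    # Hypothetical host record; field names are illustrative only.
    name: str
    commissioned: bool
    assigned: bool
    physical_nics: int
    storage_type: str
    esx_version: Tuple[int, ...]


def suitable_hosts(
    hosts: List[Host], storage_type: str, min_esx_version: Tuple[int, ...]
) -> List[str]:
    """Return names of hosts that meet every condition in the message."""
    return [
        h.name
        for h in hosts
        if h.commissioned
        and not h.assigned
        and h.physical_nics >= 1
        and h.storage_type == storage_type
        # Simplifying assumption: compatible means >= the management baseline.
        and h.esx_version >= min_esx_version
    ]


inventory = [
    Host("esx-01", True, False, 2, "VSAN_ESA", (9, 0, 0)),
    Host("esx-02", False, False, 2, "VSAN_ESA", (9, 0, 0)),  # not commissioned
    Host("esx-03", True, True, 2, "VSAN_ESA", (9, 0, 0)),    # already assigned
    Host("esx-04", True, False, 2, "NFS", (9, 0, 0)),        # wrong storage type
]

print(suitable_hosts(inventory, "VSAN_ESA", (9, 0, 0)))  # ['esx-01']
```

If no host in the inventory is commissioned, the result is an empty list, which corresponds to the "No suitable hosts available" message in the wizard.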

Thus, the correct answer is D: commission the hosts using VCF Operations.

An administrator is troubleshooting network connectivity issues on a VMware ESX host configured with a dedicated VMware vSAN vSphere Distributed Switch (vDS) port group. The VMware vSAN vDS port group has two physical adapters and two uplinks assigned. After a failure of the active physical adapter, the vSAN vDS connection over the vSAN network was lost.

What is the cause of the issue?

Answer : C

In vSAN ESA or OSA networking configured through a dedicated vSphere Distributed Switch (vDS), each vSAN vmkernel port must have at least one Active physical uplink available at all times. The scenario describes a vDS with two physical adapters and two uplinks, but after failure of the active uplink, vSAN traffic was lost. This only occurs when the second physical NIC is not actually assigned to the vSAN port group, typically because its uplink is set to "Unused".

In such a misconfiguration:

* vSAN traffic only uses the single active uplink.

* When that uplink fails, vSAN has no failover path, causing immediate connectivity loss.
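The failover behavior described above can be modeled with a short sketch. It is a simplified picture of a teaming policy's failover order, not vSphere code: the function `usable_uplink` and the uplink names are hypothetical. The key point it shows is that uplinks marked unused are never considered for failover.

```python
from typing import Iterable, Optional, Set


def usable_uplink(
    active: Iterable[str], standby: Iterable[str], failed: Set[str]
) -> Optional[str]:
    """Pick the first healthy uplink, trying active uplinks before standby ones.

    Uplinks configured as unused are not passed in at all, because they
    never carry traffic for the port group in this model.
    """
    for uplink in list(active) + list(standby):
        if uplink not in failed:
            return uplink
    return None  # no path left: connectivity is lost


# Misconfiguration from the scenario: uplink2 is unused, so it is in neither list.
print(usable_uplink(active=["uplink1"], standby=[], failed={"uplink1"}))  # None

# With uplink2 placed in standby, traffic fails over when uplink1 goes down.
print(usable_uplink(active=["uplink1"], standby=["uplink2"], failed={"uplink1"}))  # uplink2
```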

Option A (storage policies) does not affect network uplink behavior. Option B (VLAN tagging) could cause connectivity failure but would not suddenly break only after an uplink failure. Option D (failover policy not allowing fallback) affects recovery order, not immediate redundancy.