VMware Advanced VMware Cloud Foundation 9.0 vSphere Kubernetes Service 3V0-24.25 Exam Questions

What is the purpose of a ReplicaSet in the VMware vSphere Kubernetes Service (VKS)?

Answer : C

A ReplicaSet is a core Kubernetes workload controller used in VKS clusters to maintain availability and steady-state capacity for stateless applications. Its primary purpose is to ensure that a desired number of identical pod replicas are running continuously. If a pod is deleted, crashes, or is evicted because a node fails, the ReplicaSet detects that the current number of matching pods has dropped below the target and immediately creates replacement pods to restore the requested replica count. Conversely, if too many matching pods exist (for example, due to manual creation or a transient surge), it scales down by deleting excess pods to return to the desired state.

This behavior makes ReplicaSets foundational to reliable, self-healing application operation in Kubernetes and therefore in VKS. In practice, administrators and DevOps teams usually interact with ReplicaSets indirectly through higher-level controllers like Deployments, which manage rolling updates and revisions while using ReplicaSets underneath to enforce the replica count for each version of an application. Options A, B, and D map to other Kubernetes objects (Service, StatefulSet, and DaemonSet respectively), not ReplicaSet.
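The reconcile behavior described above can be illustrated with a toy simulation. This is a minimal sketch, not the real controller: the `ReplicaSetSim` class, its `reconcile` method, and the pod names are all hypothetical and exist only to show how the desired count is restored in both directions.

```python
from dataclasses import dataclass, field
from itertools import count


@dataclass
class ReplicaSetSim:
    """Toy stand-in for a ReplicaSet controller (hypothetical, for illustration)."""
    desired: int
    pods: list = field(default_factory=list)
    _ids: count = field(default_factory=count, repr=False)

    def reconcile(self) -> None:
        # Too few matching pods: create replacements until the target is met.
        while len(self.pods) < self.desired:
            self.pods.append(f"pod-{next(self._ids)}")
        # Too many matching pods: delete the excess.
        while len(self.pods) > self.desired:
            self.pods.pop()


rs = ReplicaSetSim(desired=3)
rs.reconcile()            # three pods are created
rs.pods.remove("pod-1")   # simulate a crash or eviction
rs.reconcile()            # one replacement pod is created
rs.pods.append("stray")   # simulate a manually created matching pod
rs.reconcile()            # the excess pod is deleted
print(rs.pods)
```

A real ReplicaSet matches pods by label selector and acts through the Kubernetes API; the list operations here only mimic the count comparison.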

What is the purpose of a network policy in a Kubernetes cluster?

Answer : B

In VCF 9.0 VKS clusters, network policy is a core Kubernetes networking control implemented by the cluster's CNI (Antrea or Calico). The VCF documentation's "VKS Cluster Networking" table describes Network policy as the feature that "controls what traffic is allowed to and from selected pods and network endpoints," and identifies Antrea or Calico as the providers for this capability. That definition precisely matches option B: it governs pod-to-pod and pod-to-external endpoint communication rules. This is different from ingress routing (which the same table describes separately as "Cluster ingress ... routing for inbound pod traffic"), so option C is not correct for "network policy." It is also different from NodePort behavior (external access via a port on each worker node through the Kubernetes network proxy), which is explicitly listed as "Service type: NodePort." Finally, creating/operating clusters natively in Supervisor is a broader lifecycle function (Cluster API/VKS API), not the definition of network policy. Therefore, NetworkPolicy is the Kubernetes-layer mechanism to define and enforce allowed traffic flows.
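To make "allowed to and from selected pods" concrete, the sketch below builds a standard `networking.k8s.io/v1` NetworkPolicy manifest as a Python dictionary. The helper name `allow_from_labels`, the labels, the namespace, and the port are assumptions chosen for illustration; only the manifest field names come from the Kubernetes API.

```python
import json


def allow_from_labels(name, namespace, target_labels, source_labels, port):
    """Build a NetworkPolicy that admits ingress to the target pods only from
    pods carrying source_labels, on a single TCP port (hypothetical helper)."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            # Pods this policy applies to.
            "podSelector": {"matchLabels": target_labels},
            "policyTypes": ["Ingress"],
            "ingress": [{
                # Peers allowed to connect to the selected pods.
                "from": [{"podSelector": {"matchLabels": source_labels}}],
                "ports": [{"protocol": "TCP", "port": port}],
            }],
        },
    }


# Example values are made up: only "frontend" pods may reach "api" pods on 8080.
policy = allow_from_labels(
    "api-allow-frontend", "demo", {"app": "api"}, {"app": "frontend"}, 8080
)
print(json.dumps(policy, indent=2))
```

Because kubectl accepts JSON manifests as well as YAML, the printed output could be saved to a file and applied, provided the cluster's CNI enforces network policy.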

A VKS administrator is tasked to leverage day-2 controls to monitor, scale, and optimize Kubernetes clusters across multiple operating systems and workload characteristics.

What two steps should the administrator take? (Choose two.)

Answer : A, C

VCF 9.0 describes a vSphere Namespace as the control point where administrators define resource boundaries for workloads, explicitly stating that vSphere administrators can create namespaces and "configure them with specified amount of memory, CPU, and storage," and that you can "set limits for CPU, memory, storage" for a namespace. This directly supports step A as a day-2 control to keep multi-tenant clusters governed and prevent resource contention across different teams and workload types.

For monitoring and optimization, VCF 9.0 explains that day-2 operations include visibility into utilization and operational metrics for VKS clusters, noting that application teams can use day-2 actions and gain insights into CPU and memory utilization and advanced metrics (including contention and availability) for VKS clusters. In addition, VCF 9.0 monitoring guidance for VKS clusters states that Telegraf and Prometheus must be installed and configured on each VKS cluster before metrics and object details are sent for monitoring, and that VCF Operations supports metrics collection for Kubernetes objects (namespaces, nodes, pods, containers) via Prometheus. Since the Prometheus stack commonly includes Grafana dashboards for visualization, deploying Prometheus + Grafana matches the required monitoring/optimization outcome in C.

What Kubernetes component is responsible for workload creation?

Answer : D

In Kubernetes, the component that actually creates and runs workloads on a node is the kubelet. The kubelet is the node agent that ensures the containers described by PodSpecs are running on that node. VCF 9.0 maps this concept directly into vSphere Supervisor by describing Spherelet as "a kubelet that is ported natively to ESXi and allows the ESXi host to become part of the Kubernetes cluster," showing that kubelet functionality is responsible for running workloads on worker nodes (ESXi hosts in the Supervisor case).

The other options have different roles: etcd is the control plane data store, API Server is the front-end for Kubernetes API operations, and the Scheduler decides placement (which node should run a pod). VCF 9.0 even calls out that "the Kubernetes scheduler... cannot place pods intelligently" without visibility into vCenter inventory, reinforcing that scheduling is about placement decisions, not the act of creating/running the workload on the node.

So, while the scheduler selects where a pod should run, the kubelet is the component responsible for actually instantiating and maintaining the workload on the target node.
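The division of labor between placement and execution can be sketched with a small simulation. Everything here is hypothetical: the `schedule` and `kubelet_sync` functions, the "most free CPU" placement rule, and the node data are simplifications chosen for illustration, not how the real components are implemented.

```python
def schedule(pod, nodes):
    """Scheduler role: pick a node name for the pod; nothing is started here.
    The 'most free CPU' rule is a made-up placement policy."""
    return max(nodes, key=lambda name: nodes[name]["free_cpu"])


def kubelet_sync(node_name, nodes, bound_pods):
    """Kubelet role: start every pod bound to this node that is not running yet."""
    running = nodes[node_name]["running"]
    for pod, target in bound_pods.items():
        if target == node_name and pod not in running:
            running.append(pod)
    return running


nodes = {
    "node-a": {"free_cpu": 2, "running": []},
    "node-b": {"free_cpu": 6, "running": []},
}

# Placement decision only: the pod is bound to a node but not yet running.
bound = {"web-1": schedule("web-1", nodes)}

# Each node's agent acts on the pods bound to it.
for name in nodes:
    kubelet_sync(name, nodes, bound)

print(bound, nodes["node-b"]["running"])
```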

What are three resource limitations defined on a vSphere Namespace? (Choose three.)

Answer : C, D, E

In VCF 9.0 Workload Management, a vSphere Namespace is the construct that "sets the resource boundaries" for workloads running on a Supervisor, including CPU, memory, and storage. The documentation explicitly states that a vSphere Namespace "sets the resource boundaries for CPU, memory, storage, and also the number of Kubernetes objects that can run within the namespace." In the operational procedure "Set Resource Limits to a vSphere Namespace," VMware further lists the configurable limits as: CPU ("set a limit to the CPU consumption"), Memory ("set a limit to the memory consumption"), and Storage ("set a limit on the storage consumption... per storage policy that is used").

By contrast, Containers are not a namespace "resource limit" category; VMware documents "Container Defaults" separately (defaults for container CPU/memory requests and limits) rather than a top-level resource limit type. Similarly, Services are governed under "Object Limits" (how many Kubernetes objects like Services can exist), which is distinct from resource limits. Therefore, the three resource limitations defined on a vSphere Namespace are CPU, Memory, and Storage.
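As a rough illustration of what the three limits mean in practice, the sketch below compares hypothetical consumption figures against hypothetical namespace limits. The `over_limit` helper, the key names, the units, and all numbers are assumptions; the actual limits are configured on the vSphere Namespace, not in Python.

```python
def over_limit(limits, usage):
    """Return the sorted resource names whose usage exceeds the configured limit."""
    return sorted(name for name, cap in limits.items() if usage.get(name, 0) > cap)


# Made-up limits for the three resource categories: CPU, memory, storage.
limits = {"cpu_mhz": 10000, "memory_mib": 16384, "storage_gib": 500}

# Made-up current consumption inside the namespace.
usage = {"cpu_mhz": 7200, "memory_mib": 17000, "storage_gib": 120}

exceeded = over_limit(limits, usage)
print(exceeded)
```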

An administrator is maintaining several Kubernetes clusters deployed through a Supervisor Namespace in a vSphere Kubernetes Service environment. One of the micro-services (a containerized API gateway) is failing intermittently after a recent configuration update. The pod is entering a CrashLoopBackOff state. The administrator needs to collect detailed runtime information directly from the pod, including both the standard output (STDOUT) and standard error (STDERR) streams, to analyze the application's behavior before the crash.

Which command produces the required output?

Answer : D

When a container repeatedly crashes (CrashLoopBackOff), the most direct way to capture what the application emitted right before termination is to retrieve the container logs. In Kubernetes, application output written to STDOUT and STDERR is captured by the container runtime logging mechanism and exposed through the Kubernetes API for retrieval. The kubectl logs command is designed specifically for this purpose: it fetches the log stream for a pod (and container, if multiple exist), allowing administrators to review the runtime messages that typically explain configuration errors, missing dependencies, failed probes, authentication problems, or other causes of the crash loop. This aligns with VMware operational guidance that uses kubectl to retrieve pod-level operational information and logs as part of troubleshooting Kubernetes functionality running on vSphere.
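One way to script the log retrieval described above is to assemble a `kubectl logs` invocation and run it with `subprocess`. This is a sketch under assumptions: the pod name, namespace, and helper functions are hypothetical. The `--previous` flag, which returns logs from the previously terminated instance of a container, is often useful for a pod in a crash loop.

```python
import subprocess


def kubectl_logs_args(pod, namespace=None, container=None, previous=False):
    """Assemble the argument list for a `kubectl logs` call (hypothetical helper)."""
    args = ["kubectl", "logs", pod]
    if namespace:
        args += ["--namespace", namespace]
    if container:
        # Needed when the pod runs more than one container.
        args += ["--container", container]
    if previous:
        # Read the logs of the previous, already terminated container instance.
        args.append("--previous")
    return args


def fetch_logs(pod, **options):
    """Run kubectl and return its output; needs kubectl and cluster access."""
    result = subprocess.run(
        kubectl_logs_args(pod, **options),
        capture_output=True, text=True, check=True,
    )
    return result.stdout


# Pod and namespace names are invented for the example.
cmd = kubectl_logs_args("api-gateway-7d9f", namespace="demo", previous=True)
print(" ".join(cmd))
```

`fetch_logs` is defined but not called here, since it only works from a machine with kubectl configured against the cluster.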

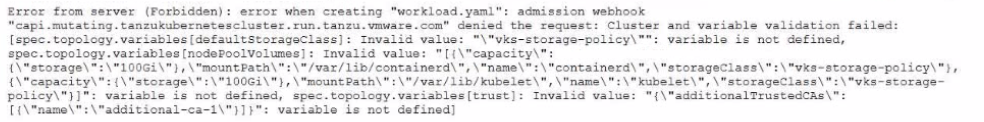

An administrator is upgrading to VKS 3.4 and encounters the following error during cluster creation using workload.yaml:

How should the administrator resolve this issue to successfully complete the upgrade?

Answer : B

The error shows an admission webhook denial where variable validation failed and multiple entries under spec.topology.variables[...] are reported as "variable is not defined". That message indicates the manifest is supplying variables that are not part of the current Cluster API / topology schema enforced by the Supervisor during cluster creation. In VKS, cluster provisioning is declarative: you invoke the VKS API with kubectl + a YAML file, and "after the cluster is created, you update the YAML to update the cluster." When the API/schema changes between releases, older manifests can contain fields/variables that are no longer recognized, and the admission webhook blocks them to prevent creating an invalid cluster spec.

This aligns with VMware's broader direction that the older TanzuKubernetesCluster (TKC) API was deprecated and customers are encouraged to use Cluster API for bootstrap/config/lifecycle management. In practice, to complete the upgrade/creation successfully, you must update the cluster manifest to match the supported schema: remove the deprecated/unknown topology variables shown in the error (for example, the undefined storage-policy and trust variables) and re-apply the corrected workload.yaml.
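The cleanup step can be sketched as a filter over the `spec.topology.variables` list of a manifest that has already been loaded into a Python dictionary. The `drop_variables` helper and the variable names `exampleKept` and `exampleUndefined` are placeholders, not the names from the actual error; substitute the names the admission webhook reports.

```python
import copy


def drop_variables(manifest, undefined_names):
    """Return a copy of the manifest without the topology variables whose
    names the webhook reported as undefined (hypothetical helper)."""
    cleaned = copy.deepcopy(manifest)
    topology = cleaned["spec"]["topology"]
    topology["variables"] = [
        variable for variable in topology.get("variables", [])
        if variable["name"] not in undefined_names
    ]
    return cleaned


# Trimmed-down stand-in for workload.yaml; variable names are placeholders.
manifest = {
    "kind": "Cluster",
    "metadata": {"name": "workload"},
    "spec": {"topology": {"variables": [
        {"name": "exampleKept", "value": "a"},
        {"name": "exampleUndefined", "value": "b"},
    ]}},
}

cleaned = drop_variables(manifest, {"exampleUndefined"})
print([variable["name"] for variable in cleaned["spec"]["topology"]["variables"]])
```

The function works on a copy so the original manifest stays available for comparison before the corrected file is re-applied.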